One Platform. One Gateway. Every Request Governed.

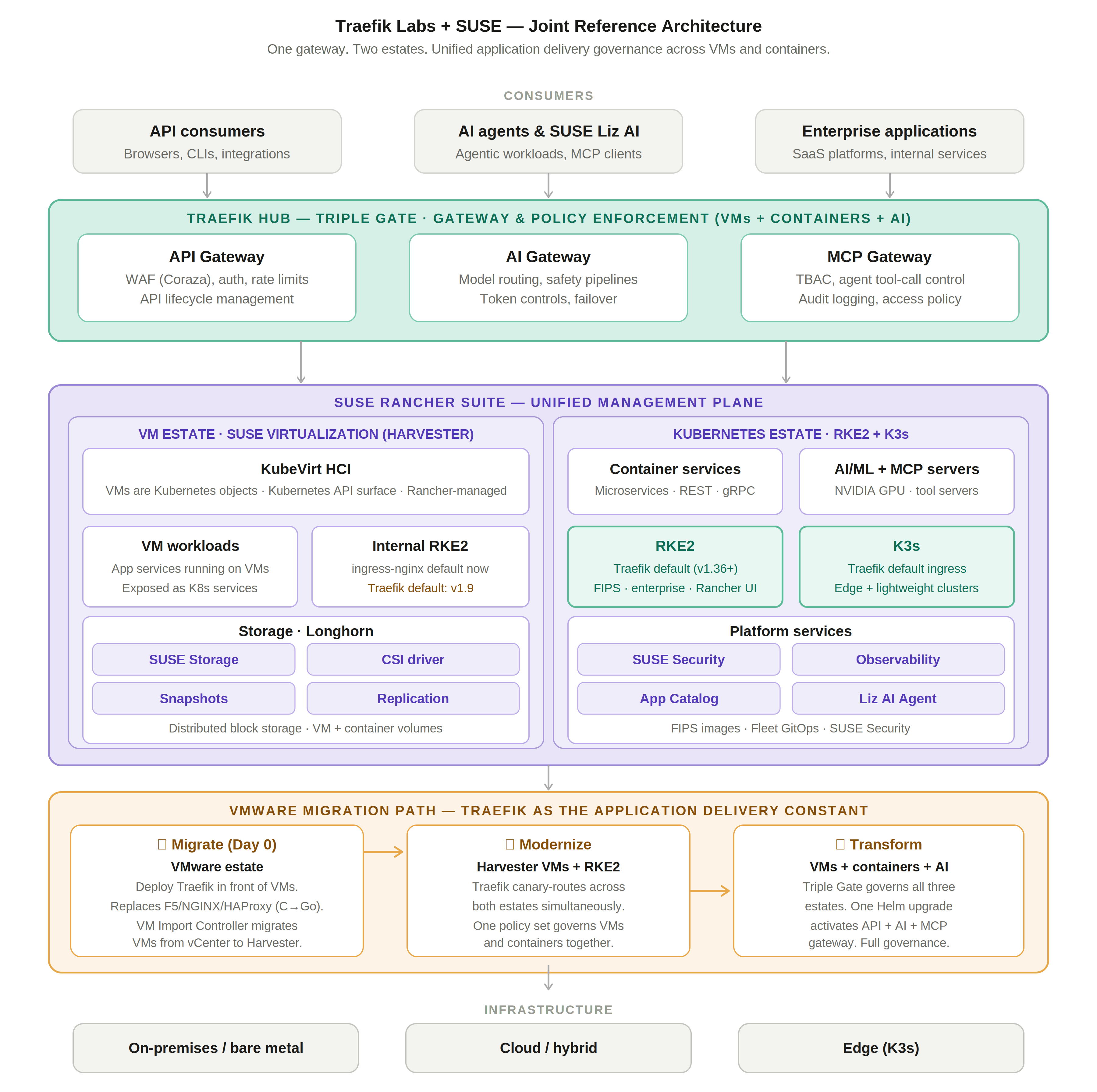

How Traefik Labs and SUSE power unified application delivery governance across VM and container estates, covering VMware migration, Kubernetes ingress, and AI agent control.

Three waves are hitting enterprise infrastructure simultaneously, and they are converging on the same answer.

The first wave is VMware displacement. Two years after Broadcom completed its acquisition of VMware, the predicted mass exodus has not materialized, but a measured, sustained unwind is well underway. CloudBolt's January 2026 survey of 302 enterprise IT decision-makers found that 86 percent of organizations are actively reducing their VMware footprint, while 88 percent report that anticipated future price increases are already shaping infrastructure decisions. Most organizations saw price increases of 25-49%; outlier cases have been dramatically higher. The organizations evaluating SUSE Virtualization, Nutanix, and OpenShift as destinations are not making minor adjustments. They are re-platforming their entire estate, without realizing that infrastructure migration and application delivery migration are two different problems.

The second wave is Kubernetes networking consolidation. The Kubernetes community retired ingress-nginx in March 2026, ending all maintenance, bug fixes, and security patches for one of the most widely deployed components in production infrastructure. SUSE formalized Traefik as the default ingress controller for RKE2 starting with v1.36, extending what K3s has delivered for years. IBM Cloud, Nutanix, OVHcloud, and TIBCO each made the same choice independently.

The third wave is enterprise AI governance. Organizations are deploying AI inference workloads, autonomous agents, and MCP-connected tool pipelines into production. API gateway, AI gateway, and MCP governance are absent across every segment of the enterprise infrastructure landscape today. They have to be added, and the architecture of how they are added determines how well-governed, auditable, and portable the result is.

The joint architecture described in this post addresses all three waves with a single platform and a single upgrade path.

The Architecture at a Glance

Two Estates, One Management Plane

A common misconception is that SUSE Rancher Prime is a Kubernetes-only management platform and SUSE Virtualization is a VM-only platform. Neither is correct, and the distinction matters significantly for how Traefik is positioned in this architecture.

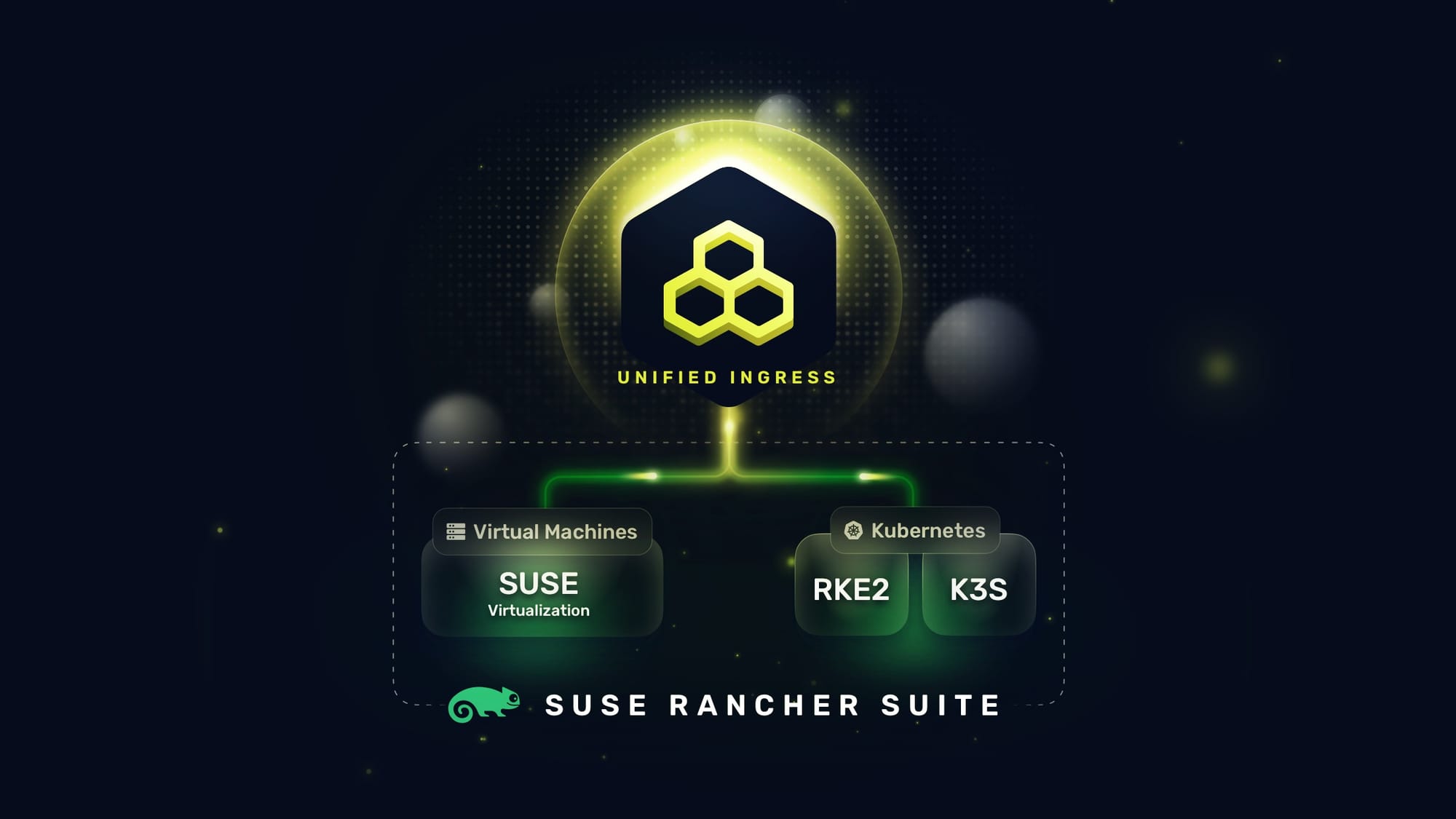

SUSE Rancher Prime is the unified management control plane for both estates. It manages Kubernetes clusters (RKE2 and K3s) and SUSE Virtualization (Harvester) VM clusters from a single interface and API surface. Platform teams operating mixed environments do not need separate consoles for virtualization and Kubernetes.

SUSE Virtualization (Harvester), the VM platform, is itself built on Kubernetes. It runs RKE2 internally, and VMs are managed as Kubernetes objects via KubeVirt. This means VMs have a Kubernetes API surface: they can be declared as YAML manifests, exposed as Kubernetes services, and managed through the same GitOps workflows as containerized workloads. The current release of Harvester defaults to ingress-nginx. Traefik becomes the default ingress controller in Harvester v1.9, which is in active development. Once v1.9 ships, the Traefik application delivery layer will be consistent across both SUSE estates by default, with no additional configuration required.

On the Kubernetes estate, RKE2 defaults to Traefik from v1.36, and K3s has defaulted to Traefik for years. Together with the Harvester v1.9 roadmap, this means Traefik is converging as the standard ingress and application delivery layer across the entire SUSE estate, from edge K3s clusters through enterprise RKE2 to Harvester-managed VM infrastructure.

The Gateway Layer: Traefik Hub Across Both Estates

One of the consistent findings in analyzing application delivery across VMware customer segments is how wide the gap is. Across every segment reviewed, vSphere-only, VVF, and VCF with or without Avi, API gateway is absent, AI gateway is absent, and MCP governance is absent or limited to transport-layer session persistence. These gaps do not close when organizations migrate to SUSE. They close when organizations deploy Traefik Hub. The Triple Gate architecture addresses all three with a single deployment, a single control plane, and a single audit surface, applied uniformly to both the VM and Kubernetes estates.

API Gateway

The API governance gap is older and deeper than most organizations realize. A typical VMware estate routes API traffic through NGINX or F5 without any catalog of what APIs exist in production, no record of which consumers are calling which endpoints, no deprecation workflow, and no API-level access controls. When organizations begin migrating workloads, they frequently discover that their API surface is both larger and less understood than anyone believed. The migration moment is when the gap becomes operationally expensive rather than theoretically concerning.

Traefik's API Gateway closes this gap at the ingress boundary, before any backend on either estate receives a request. The Coraza WAF is a Go-native implementation of the OWASP Core Rule Set, the same ruleset used in ModSecurity-compatible deployments, but without the C/C++ data plane that regulators are asking vendors to move away from. It applies equally to traffic destined for VM-hosted services on Harvester and container-hosted services on RKE2. Authentication is enforced at the gateway: JWT validation, OAuth2 flows, API key management, and policy-based access control are all handled before the request propagates. Rate limiting operates at the consumer level: per user, per team, and per application, using token bucket or sliding window algorithms. API lifecycle management provides the developer portal, access plans, versioning, and deprecation controls that transform a collection of endpoints into a governed API platform.

The regulated industry argument is direct. PCI-DSS requires a WAF for card data environments. HIPAA requires access logging and breach notification capability. The combination of Traefik's API Gateway for north-south enforcement and SUSE Security's container security for east-west micro-segmentation provides the two-perimeter architecture that zero-trust frameworks prescribe: enforce at the boundary, enforce between workloads. The same deployment that handles API governance also closes the memory safety compliance gap, since Coraza is written entirely in Go.

AI Gateway

AI inference infrastructure creates two simultaneous problems that traditional ingress controllers were never designed to solve.

- The first is cost. GPU inference is expensive: a frontier model call that involves a long context window can cost orders of magnitude more than a typical API request, and an application making unconstrained inference calls can generate thousands of dollars in cloud spend within hours. Without a gateway, that spend is invisible until the invoice arrives.

- The second is risk. Model outputs can contain PII extracted from training data, prompt injection attacks can manipulate model behavior in ways that produce harmful or policy-violating responses, and models from different providers have different safety characteristics that need to be accounted for in regulated deployments.

Traefik's AI Gateway addresses both dimensions at the infrastructure layer. Multi-provider model routing with weighted failover involves defining a primary inference endpoint and a fallback chain. If the primary provider is rate-limited, unavailable, or over budget, traffic shifts to the next provider automatically, with no application code changes, no DNS updates, and no manual intervention. Token budget enforcement operates as a gateway policy: per-user, per-team, and per-application token budgets are enforced before inference happens. An HTTP 429 response is returned before GPU cycles are consumed, which means cost controls are enforced at the infrastructure layer rather than discovered after the fact through billing reconciliation.

Safety pipelines run as middleware in the request and response chain. NVIDIA Safety NIMs, purpose-built safety models covering PII detection, prompt injection detection, and content filtering, execute in parallel with the primary inference call. The safety check result can gate the response before it reaches the consumer. For organizations running AI workloads on NVIDIA GPU nodes in RKE2, this creates a coherent safety architecture: NVIDIA hardware for inference, NVIDIA safety models for guardrails, and Traefik as the orchestration and enforcement layer that applies those guardrails consistently across every inference endpoint on the platform.

MCP Gateway

The Model Context Protocol is the emerging standard for how AI agents call external tools: databases, APIs, file systems, shell interfaces, and internal services. The governance question MCP raises is different in kind from traditional API governance. A conventional API consumer is a deterministic program calling a known endpoint with known parameters. An MCP-connected AI agent is a probabilistic system whose tool calls are shaped by natural-language instructions, and whose behavior can be redirected by a sufficiently crafted prompt. The blast radius of a prompt injection attack scales directly with the breadth of tool access available to the targeted agent. An agent with unrestricted MCP access to production systems is, in effect, an insider threat that can be triggered from the outside.

Task-Based Access Control (TBAC) is Traefik's answer to this problem, and it operates at a different layer than anything in the traditional load-balancing market. The distinction is worth making precisely: Avi Networks announced MCP session persistence as a tech preview at VMware Explore 2025. Session persistence keeps the same agent talking to the same MCP server connection across requests: this is an L4 transport concern. TBAC defines what tasks an agent can perform, on what resources, under what conditions, at the application layer. A TBAC policy does not say "this agent can connect to this MCP server." It says, "this agent can call this MCP server to perform read operations on cluster configuration data, for clusters in the production namespace, at a rate not exceeding one hundred calls per hour, between 08:00 and 22:00 UTC." Every call outside those parameters is blocked and logged. Session persistence and task authorization are not competing capabilities; they operate at different layers of the stack and address entirely different problems.

The AI Assistant (aka “Liz”) in SUSE Rancher Prime illustrates the practical governance model. It uses MCP to call external tools to retrieve cluster state, diagnose anomalies, and execute remediation workflows. TBAC defines what the assistant can do: which data sources it can query, which cluster operations it can initiate, and under what conditions. A policy that allows the assistant to read monitoring data from a CMDB MCP server does not automatically allow it to write to a production configuration store, even if both are reachable MCP servers. Every tool call is logged: agent identity, tool called, parameters passed, response received, and timestamp, creating the audit trail that compliance teams require when AI is part of a production operational workflow. Critically, the governance layer is independent of the agent. Whether the deployment involves Liz, a third-party agent framework, or a custom-built pipeline, the same TBAC policies apply at the gateway without modifications to the agent itself. The control surface belongs to the platform operator, not to the agent vendor.

All three gates (API, AI, & MCP) run on the same Traefik Hub instance, activated with a single Helm chart upgrade from Traefik Proxy. No re-architecture, no migration of existing routing rules, no new deployment footprint. A platform team that deploys Traefik Proxy as the default ingress controller on RKE2 today has a direct path to closing the API governance gap, the AI cost and safety gap, and the agent authorization gap across both the VM and Kubernetes estates, through a single upgrade operation.

The VMware Exit: Traefik as the Application Delivery Constant

The most common entry point for this joint architecture in 2026 is not a greenfield deployment. It is a VMware exit.

A typical VMware estate runs F5 BIG-IP, NGINX, or HAProxy for load balancing and a standalone WAF from F5 or Cloudflare. Every one of those components is written in C or C++. The regulatory picture on this is no longer advisory. CISA's "Product Security Bad Practices" guidance set January 1, 2026 as the deadline for software manufacturers to publish a memory safety roadmap for any existing product written in memory-unsafe languages used in serving critical infrastructure. That deadline has passed. Any vendor supplying network-facing infrastructure to regulated environments that cannot produce a published memory-safety roadmap is now operating against explicit CISA guidance. A June 2025 joint NSA/CISA Cybersecurity Information Sheet reinforced the same position, identifying memory safety vulnerabilities as serious risks to national security and critical infrastructure. The EU Cyber Resilience Act adds a parallel European mandate. Every legacy application delivery component in the VMware ecosystem (F5, NGINX, HAProxy, Avi, and Envoy) has a C or C++ data plane. Traefik, written in Go, has no memory-safety debt to address. It is already compliant by design.

Both vSphere 7 (October 2025) and vSphere 8 (October 2027) are on fixed end-of-life timelines, with no standalone vSphere 9. VCF 9.0 removed general-purpose NSX load balancing from the base entitlement. The migration follows three stages, with Traefik as the constant across all of them:

Migrate (DAY 0)

Deploy Traefik in front of existing VMware workloads before any infrastructure moves. This replaces C/C++ tools with a single memory-safe Go binary and decouples application identity from infrastructure identity. SUSE VM Import controller migrates VMs from vCenter to SUSE Virtualization (Harvester). Traefik auto-discovers workloads at their new location because they are still exposed as Kubernetes services. Traffic continues to flow without any upstream configuration changes.

Modernize

As workloads are containerized on RKE2, Traefik can manage canary routing between VM-hosted and container-hosted services simultaneously. One policy set governs both estates. No big-bang cutover. RKE2 v1.36 arrives pre-integrated with Traefik, so new clusters join the same governance model immediately.

Transform

AI inference workloads on RKE2 with NVIDIA GPU nodes become a third estate behind the same gateway. Token-level rate limiting governs GPU resource costs. A single Helm chart upgrade from Traefik Proxy to Traefik Hub activates API Gateway, AI Gateway, and MCP Gateway across all three estates simultaneously.

The organizations that deploy Traefik on Day 0 of their VMware exit arrive at their destination with application delivery governance already in place, across VMs and containers alike.

Why Regulated Industries Should Pay Attention

For organizations in regulated environments, Traefik Hub provides a supported, sanctioned path to FIPS 140-2 compliance. The implementation uses BoringCrypto, a FIPS 140-2 validated cryptographic module derived from BoringSSL, compiled directly into the Go binary. This means FIPS compliance is not a configuration option or a wrapper: it is enforced at the cryptographic primitive level, covering TLS, JWT validation, API key handling, and HMAC operations. Organizations operating under FedRAMP, HIPAA, PCI-DSS, or EU CRA requirements can deploy Traefik Hub with a fully documented, auditable cryptographic baseline. Air-gapped deployments are supported through RKE2’s offline image tarball distribution, which bundles Traefik natively, combined with Traefik Hub’s offline activation model.

SUSE Security handles east-west security policy within clusters while Traefik handles north-south governance at the estate boundary. Together, they form the two-perimeter model that zero-trust frameworks require. This applies across both the VM and Kubernetes estates managed by Rancher Prime. For organizations that missed the January 1, 2026 CISA deadline for publishing a memory safety roadmap, the SUSE and Traefik migration is itself the roadmap: moving application delivery to a Go-based stack eliminates the class of vulnerability CISA is targeting, rather than scheduling a future plan to do so.

The Upgrade Path is the Commercial Insight

Every Kubernetes cluster on K3s or RKE2 already runs Traefik Proxy as the default ingress controller. Every Harvester VM cluster runs K3s with Traefik inside. Organizations already running Traefik Proxy across their SUSE estate are one Helm chart upgrade away from Traefik Hub, which adds API Gateway, AI Gateway, MCP Gateway, and full API lifecycle management with no changes to the underlying cluster or existing ingress resources.

For VMware exiters, the same logic applies from Day 0. Adopting Traefik before the first VM moves means the upgrade path to full AI governance is already established before the migration completes. No second integration project, no second vendor, no second control plane.

SUSECON 2026 and What Comes Next

SUSECON 2026 runs from April 20–23 in Prague. The theme is Shape Your Resilient Future, with tracks covering AI adoption, digital sovereignty, Cloud Native modernization, and the shift from legacy virtualization. The SUSE Sovereign Summit opens on April 20 and focuses on infrastructure independence and regulatory compliance. The VMware displacement motion that SUSE is leading into SUSECON is sovereign infrastructure by another name: open source, portable, auditable, not subject to the pricing decisions of a single acquirer.

The architecture described here is deployable today. Teams attending SUSECON can begin the first stage of the migration path before the conference ends.

Summary: The Three Waves are Converging

The joint Traefik and SUSE stack addresses VMware displacement, Kubernetes networking consolidation, and enterprise AI governance across both SUSE estates:

- Traefik is already the default ingress inside both SUSE estates: K3s inside Harvester (VM estate) and RKE2/K3s (Kubernetes estate), providing a consistent application delivery model across the entire platform

- A three-stage VMware migration path (Migrate, Modernize, Transform) with Traefik as the constant, replacing C/C++ application delivery tools with a memory-safe Go platform from Day 0

- SUSE Rancher Prime as the unified management plane for both VM and Kubernetes estates, with Traefik Hub as the unified governance layer in front of both

- A single Helm chart upgrade from Traefik Proxy to Traefik Hub activates API Gateway, AI Gateway, and MCP Gateway across all estates simultaneously

- A two-perimeter security model: Traefik for north-south governance, SUSE Security for east-west policy, across both VM and container workloads

- FIPS-certified, air-gap-ready sanctioned & supported images from Traefik Labs for regulated industry deployments

- A memory-safe, Go-based implementation that is compliant by architecture with CISA's January 2026 memory safety roadmap deadline (now passed) and with NSA, FBI, and EU CRA guidance, replacing every C/C++ component in the legacy VMware application delivery stack

Platform teams, CTOs, and CISOs navigating VMware exits, Kubernetes modernization, and enterprise AI adoption in 2026 are not choosing between stability and modernization. With this architecture, they are choosing both on a single platform, across every estate, with a single upgrade path.

Further Reading

- CloudBolt: "The Mass Exodus That Never Was: The Squeeze Is Just Beginning" (February 2026)

- Kubernetes SIG Network: ingress-nginx retirement announcement (November 2025)

- SUSE KubeCon EU 2026: RKE2 ingress migration to Traefik

- SUSE KubeCon EU 2026: Rancher Prime Agentic AI Ecosystem and MCP integration

- CISA Product Security Bad Practices: memory safety roadmap deadline January 1, 2026

- NSA + CISA joint guide: Memory Safe Languages, Reducing Vulnerabilities in Modern Software Development (June 2025)

- Traefik Hub FIPS Compliance documentation

About SUSE

SUSE is a global leader in enterprise open source software, across Linux operating systems, Kubernetes container management, Edge solutions and AI. The majority of the Fortune 500 rely on SUSE to provide resilient infrastructure, enabling IT leaders to optimize cost and manage heterogeneous environments. SUSE collaborates with partners and communities to provide organizations with choices to maximize their current IT systems and innovate with next-generation technologies across traditional on-premises to cloud native, multi-cloud to edge and beyond. For more information, visit suse.com.

About Traefik Labs

Traefik Labs is the creator of Traefik Proxy, the world's most widely deployed cloud-native application proxy with over 3.4 billion Docker Hub downloads and 62,000+ GitHub stars, and Traefik Hub, the integrated platform for API Gateway, AI Gateway, MCP Gateway, and API lifecycle management. Learn more at traefik.io.