Breaking the Monolith: How an API Gateway Turns a Risky Migration into a Controlled Journey

The monolith isn't the villain. It got you to production, handled your first million users, and shipped features when the team was small enough to fit in one room. But somewhere between the third deploy queue of the day and the incident where a payment change broke the notification system, the conversation starts: "We need to break this thing apart."

The instinct is to plan a big rewrite. Spin up a Kubernetes cluster, redesign the domain model, rebuild from scratch. Eighteen months later, the new system handles 30% of the old one's functionality, and the monolith is still running in production.

There's a better way. Instead of a big-bang rewrite, you migrate incrementally, routing traffic, testing in production, and shifting workloads one piece at a time. The key ingredient is an API gateway that gives you fine-grained control over where every request goes. That's the thesis of this post: traffic control is migration control, and the right gateway turns a risky migration into a series of small, reversible, low-risk moves.

We'll walk through the journey using Traefik Hub as the gateway, but the patterns apply broadly. What makes Traefik particularly well-suited is that it combines routing, load balancing, identity-aware traffic splitting, and multi-platform connectivity into a single platform, which means the same gateway that handles your Day 1 pass-through can handle your Day 3 or Day 100 canary deployment across VMs and Kubernetes. And as your architecture evolves beyond traditional microservices, the same gateway extends into Kubernetes Gateway API, AI model routing, and MCP, but more on that at the end.

The Gateway as the Starting Point

Before you break anything, you need a control plane for your traffic. If you don't have an API gateway in front of your monolith, that's step zero. If you have a basic load balancer, put Traefik in front of it or replace it entirely.

The setup is deliberately boring: point DNS at Traefik, configure a catch-all route that forwards everything to the monolith. Nothing changes for users. But now every request flows through a layer you own, and that layer is programmable.

This is the foundation. Every pattern that follows (strangler fig routing, canary deployments, and identity-based traffic splitting) depends on having this control point in place. It costs almost nothing to set up, it gives you options you didn't have before, and it works whether your monolith runs on Kubernetes, VMs, or bare metal.

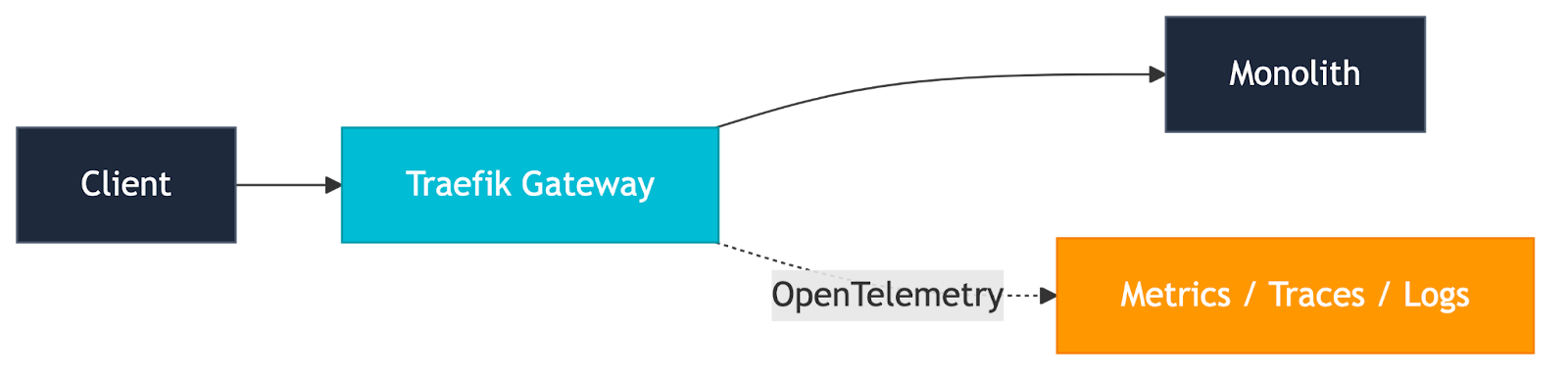

Observability: See Before You Cut

Here's something teams often overlook: before you break anything apart, you need to understand what you have. The moment all traffic flows through the gateway, you gain a single observation point for your entire application, and that's worth pausing on before you start extracting services.

Traefik Hub emits OpenTelemetry metrics, traces, and logs natively. That means the same gateway you just stood up as a pass-through is already producing data you can pipe into Dash0, Datadog, Splunk, Grafana, Dynatrace, or whatever your observability stack looks like. You don't need to instrument the monolith. You don't need to retrofit tracing into a codebase that was never designed for it. The gateway sees every request, every response code, every latency spike, and it tells you about all of it.

This matters for the migration because it gives you a baseline. Before you extract a single service, you know: which endpoints get the most traffic, what the latency distribution looks like, where errors cluster, and which paths are candidates for extraction. You're building the dashboards and alerts before the migration, not scrambling to add them after something breaks.
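As a rough illustration of what such a baseline contains, the sketch below summarizes per-path traffic, error rate, and p95 latency from a list of request records. The `RequestRecord` shape and the `baseline` function are hypothetical stand-ins for whatever your observability backend exports; in practice you would run the equivalent queries in that backend rather than in application code.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class RequestRecord:
    """Hypothetical shape of one request as seen at the gateway."""
    path: str          # route prefix, e.g. "/api/orders"
    status: int        # HTTP response code
    latency_ms: float  # time the gateway waited for the backend


def baseline(records):
    """Group records by path and summarize traffic, errors, and latency."""
    by_path = defaultdict(list)
    for record in records:
        by_path[record.path].append(record)

    summary = {}
    for path, group in by_path.items():
        latencies = sorted(r.latency_ms for r in group)
        errors = sum(1 for r in group if r.status >= 500)
        # quantiles() needs at least two data points; with one, use it directly.
        p95 = quantiles(latencies, n=20)[-1] if len(latencies) > 1 else latencies[0]
        summary[path] = {
            "requests": len(group),
            "error_rate": errors / len(group),
            "p95_ms": p95,
        }
    return summary
```

Paths with high traffic, stable latency, and few cross-cutting dependencies tend to be the easier first candidates for extraction, and the same summary becomes the yardstick for every later comparison.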

It also pays off at every subsequent stage. When you start canary deployments, you're comparing the canary's error rate and latency against a baseline you already have. When you do A/B testing, you're measuring real business metrics through the same pipeline. When you shift traffic with identity-aware routing, you can see exactly how internal users experience the new service versus how everyone else experiences the monolith. Observability turns every migration decision from a guess into a data point.

That visibility at the gateway is the foundation, but it's not the finish line. As you start extracting services, tracing needs to extend with the architecture. Instrumenting your microservices as they're built lets you carry that context forward, turning edge visibility into true end-to-end distributed tracing. Requests no longer stop at the gateway; they can be followed across every service boundary, every downstream dependency, and every internal interaction. That's when observability stops being a perimeter view and becomes a full system understanding.

Additional Reference: Metrics, Tracing & Logs (configuring OpenTelemetry export in Traefik Hub).

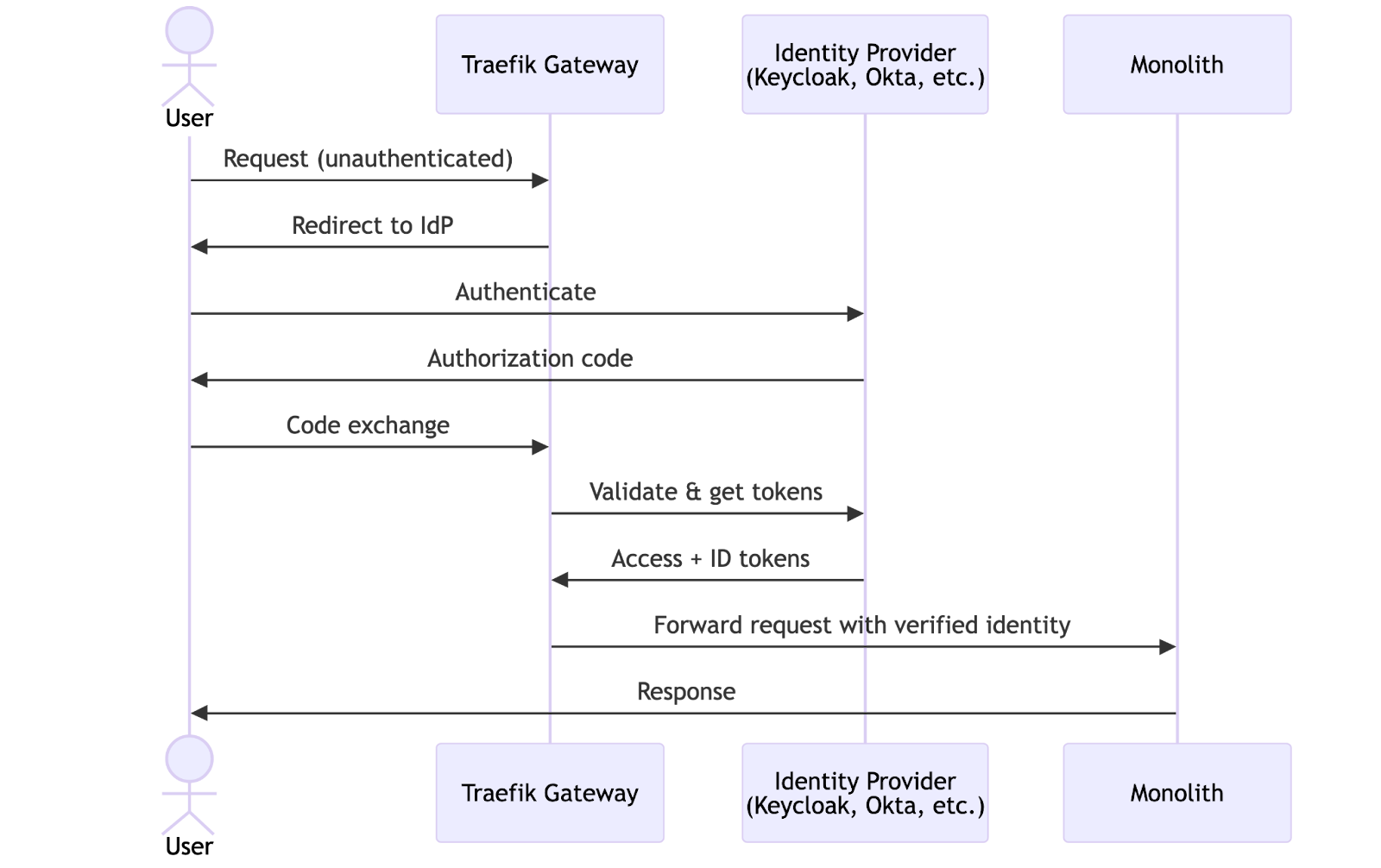

Centralizing Auth: Your First Win

With traffic flowing through the gateway, you get an immediate win that has nothing to do with microservices: centralized authentication.

Move auth to the edge. Traefik Hub supports OIDC and JWT middleware natively, so you can authenticate requests at the gateway before they ever reach the monolith. This means new services you extract later don't need to implement their own auth; the gateway handles it.

How you handle tokens downstream depends on your architecture. In a high-trust internal network, you might strip the token entirely and forward only the claim headers. In a zero-trust model, you forward the verified JWT to the backend so it can also make its own authorization decisions. Either way, the gateway becomes the centralized authentication point for all API traffic, ensuring that every request is consistently authenticated before reaching your services.
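The two options can be sketched as a small function that builds the headers forwarded to the backend. This is a conceptual model only: it assumes the gateway has already verified the JWT and handed over its claims, and the function name, the `mode` values, and the `X-User-*` header prefix are all hypothetical rather than anything Traefik Hub defines.

```python
def downstream_headers(headers, verified_claims, mode):
    """Build the headers forwarded to the backend after JWT verification.

    mode="strip":   high-trust network; drop the token, forward claims as headers.
    mode="forward": zero-trust; also keep the verified token so the backend
                    can make its own authorization decisions.
    """
    if mode not in ("strip", "forward"):
        raise ValueError(f"unknown mode: {mode!r}")

    auth = next((v for k, v in headers.items() if k.lower() == "authorization"), None)

    # Drop the token and any client-supplied claim headers, which cannot be trusted.
    out = {
        k: v
        for k, v in headers.items()
        if k.lower() != "authorization" and not k.lower().startswith("x-user-")
    }

    # Re-add claim headers from the verified token only.
    for name, value in verified_claims.items():
        out[f"X-User-{name.capitalize()}"] = str(value)

    if mode == "forward":
        if auth is None:
            raise ValueError("no Authorization header to forward")
        out["Authorization"] = auth
    return out
```

Whichever mode you choose, the important detail is that claim headers are always rebuilt from the verified token, so a client cannot smuggle in its own `X-User-Role`.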

This matters for the migration story because the identity information flowing through the gateway is exactly what you'll use later to route specific users to new services. Setting up auth now pays dividends down the road.

Additional References:

- OIDC Authentication: setting up OpenID Connect middleware in Traefik Hub

- JWT Authentication: setting up JWT middleware in Traefik Hub

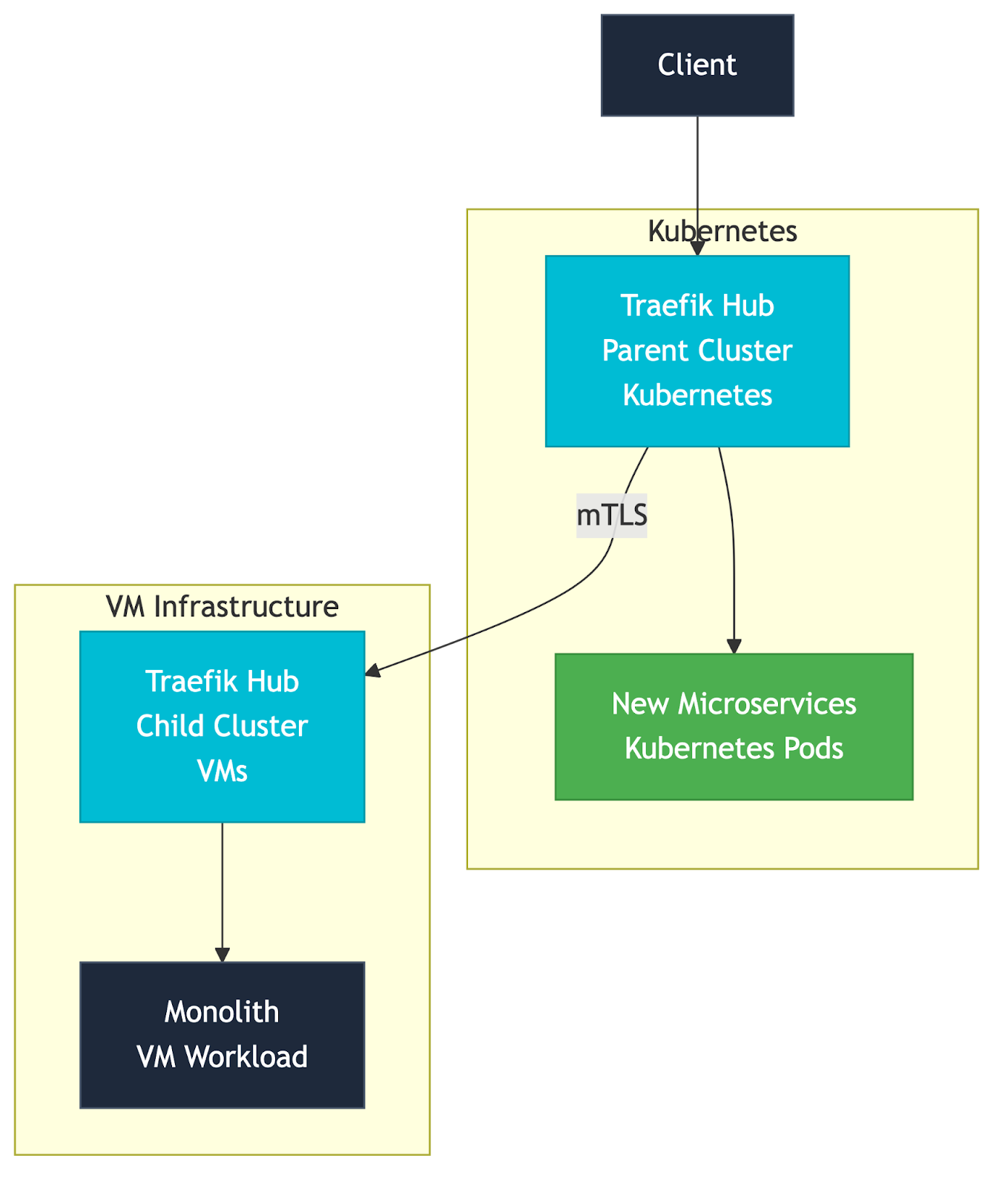

Bridging the Infrastructure Gap: No Re-Platforming Required

Before you start extracting services, there's a common blocker worth addressing: "Our monolith runs on VMs. We can't do any of this until we re-platform onto Kubernetes." That's wrong, and getting this piece in place now means every pattern that follows works regardless of where your monolith lives.

Traefik Hub's multi-cluster capability bridges VMs and Kubernetes natively. The architecture is straightforward:

A Traefik instance in Kubernetes acts as the parent cluster and the entry point for all traffic. A Traefik instance on the VMs (in the same network as the monolith) acts as a child cluster, advertising the monolith's workloads via uplinks. The parent discovers these workloads automatically and routes traffic to them. The connection between parent and child is secured with mutual TLS, and both sides verify each other against a trusted CA.

With this bridge in place, every pattern that follows (strangler fig routing, mirroring, canary deployments, and identity-aware routing) works across the VM-to-Kubernetes boundary. You can route specific paths to a new microservice in Kubernetes while everything else stays on the VM monolith. You can canary between the two, split traffic by identity, or mirror requests across the infrastructure divide. The gateway abstracts the boundary, and you migrate workloads at whatever pace makes sense for your team.

As confidence grows and services move to Kubernetes, you adjust the uplink weights. The VM cluster handles less and less traffic. Eventually, the monolith's uplink weight drops to zero. The fig has fully grown.

Additional Reference: Multi-Cluster Traffic Distribution (setup guide for parent/child clusters, uplinks, mTLS, and cross-cluster routing patterns).

The Strangler Fig: Carving Out Your First Service

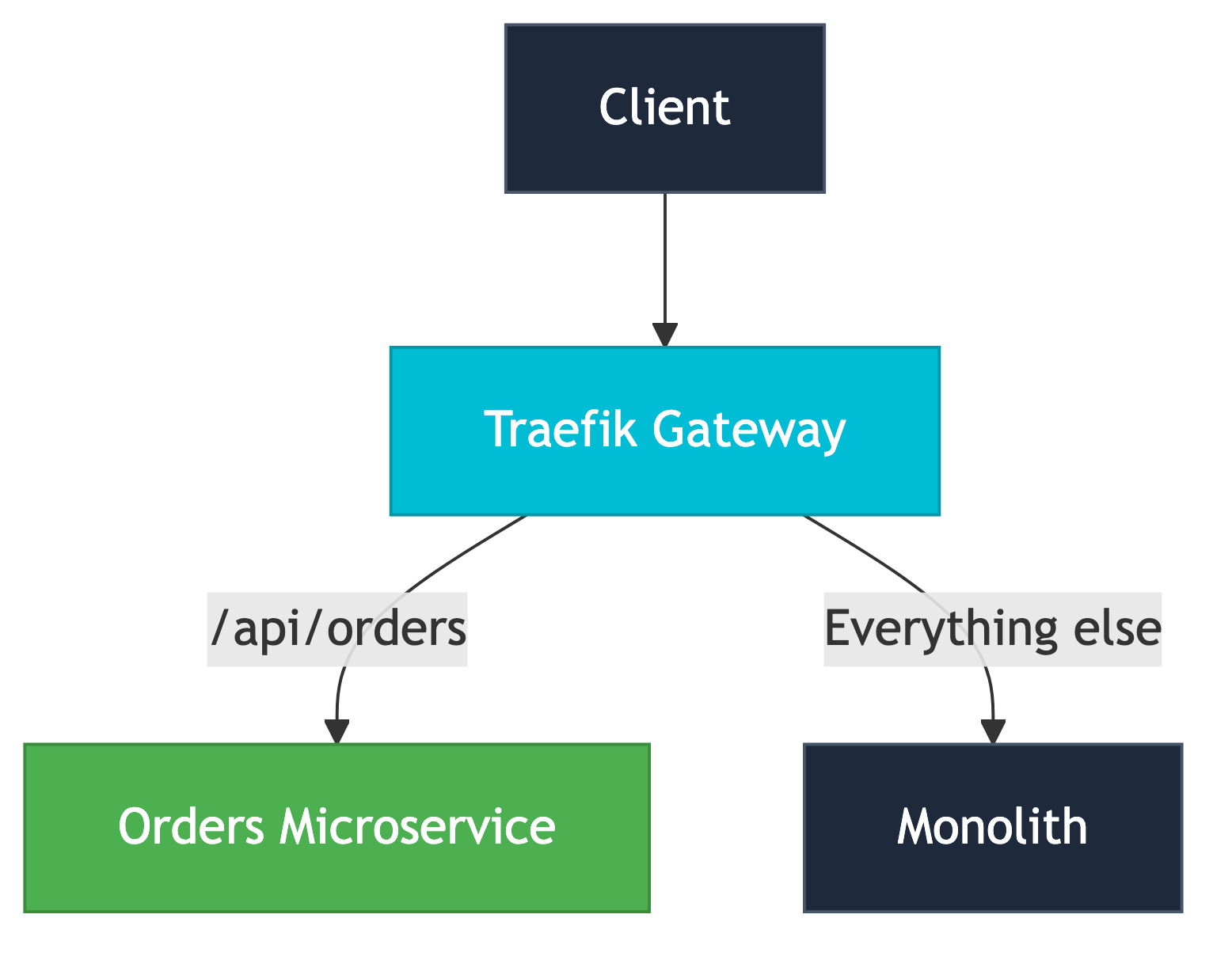

Now the actual decomposition begins. The pattern is called the strangler fig, after the tropical plant that grows around a tree and gradually replaces it. You don't rewrite the monolith; you intercept specific routes at the gateway and redirect them to new services, one endpoint at a time.

Say your monolith handles everything at example.com. You've extracted the orders domain into a new microservice. At the gateway, you add a route: requests to /api/orders go to the new service; all other requests go to the monolith. Clients don't know anything has changed.
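The routing decision in that example can be modeled as a small prefix table with a catch-all. The sketch below is a conceptual model in Python, not gateway configuration: the `ROUTES` table, the backend names, and the longest-prefix rule are assumptions made for illustration.

```python
# Hypothetical route table: (path prefix, backend name).
ROUTES = [
    ("/api/orders", "orders-service"),  # extracted domain
    ("/", "monolith"),                  # catch-all for everything else
]


def pick_backend(path, routes=ROUTES):
    """Return the backend whose prefix is the longest match for the path."""
    matches = []
    for prefix, backend in routes:
        # Match whole path segments, so "/api/ordersx" does not hit "/api/orders".
        if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
            matches.append((len(prefix), backend))
    if not matches:
        raise LookupError(f"no route for {path!r}")
    return max(matches)[1]
```

Reverting is a data change: remove the `/api/orders` entry and the same requests fall through to the catch-all again, with no change visible to clients.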

The beauty of this approach is that it's incremental and reversible. If the new orders service has a problem, you revert to the monolith. No redeployment, no rollback, just a routing change. Over time, you extract more domains: payments, notifications, and user profiles. The monolith shrinks. The fig grows.

But there's a question lurking here: how do you know the new service actually works correctly under real traffic? You don't want to route 100% of order requests to an untested service and hope for the best. That's where traffic splitting comes in.

Testing in Production Safely

The strangler fig tells you where to route traffic. Proving that the new service works before you commit is a separate problem. You don't flip a switch; you build confidence in stages: first you observe, then you compare, then you roll out. Traefik gives you a different mechanism for each stage.

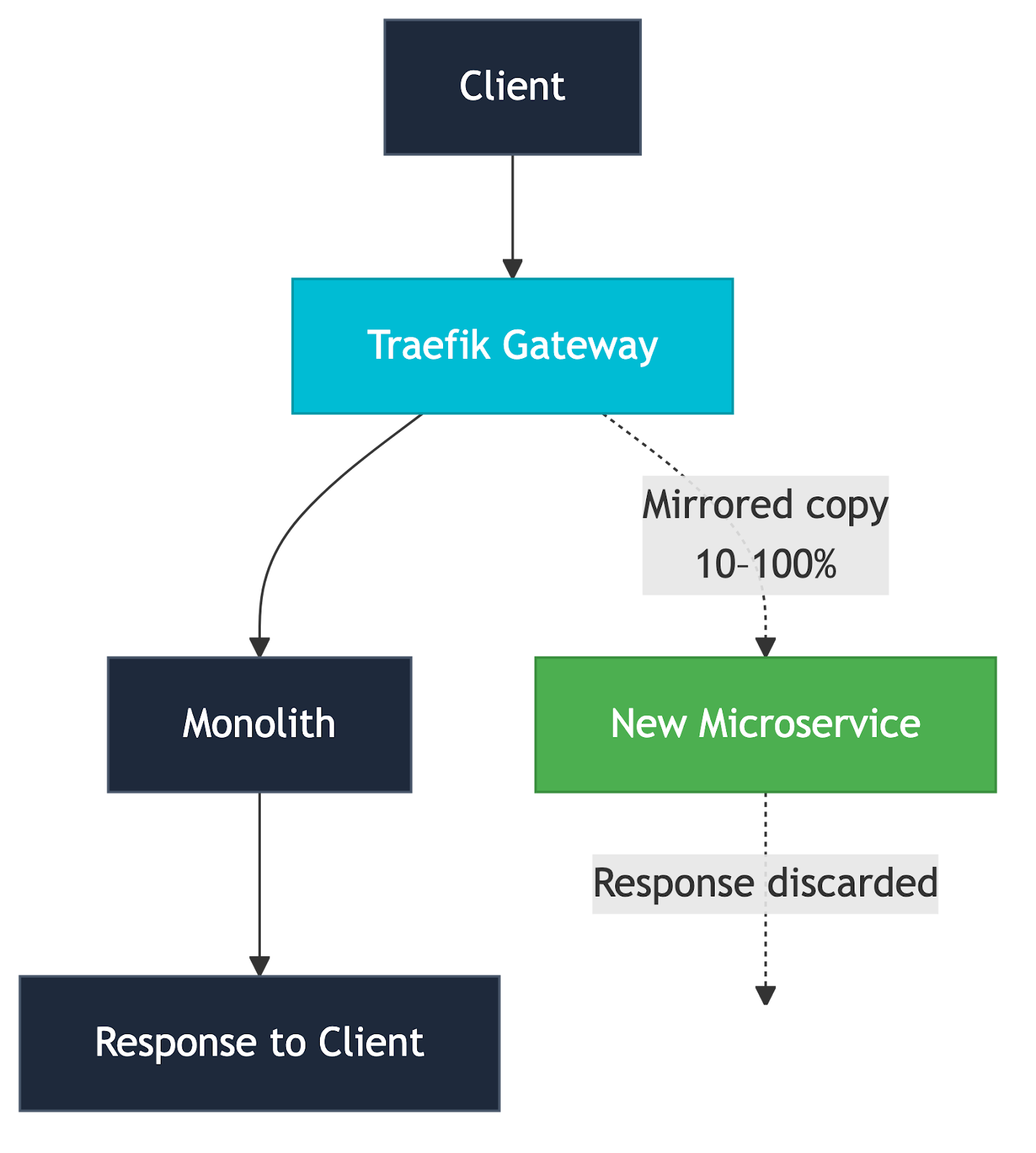

Mirroring: Zero-Risk Shadow Testing

The safest way to test a new service is to send it real traffic without affecting users. That's mirroring. Traefik copies a percentage of production requests to the new service but discards its responses; users only ever see the monolith's response. The new service processes real requests, real payloads, real edge cases, and you watch its logs and metrics for anything unexpected.

Since responses from the mirror are discarded, you won't see failures in your client-facing metrics. You need to watch the microservice directly, its logs, its traces, its error rates, and its latency. This is where the observability foundation you set up earlier pays off: the gateway's OpenTelemetry data gives you the monolith's baseline, and the microservice's instrumentation shows how it handles the same traffic. Compare the two. If the microservice is throwing errors on 2% of mirrored requests, you know exactly what to fix before any real user sees that service.

This is also where you catch the problems you'd never find in a staging environment: the malformed request from that one legacy client, the query parameter nobody documented, the header your integration partner sends at 3 a.m. Mirroring lets you discover all of them without risking users. Start at 10% and ramp to 100% as the new service stabilizes.
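To make the mechanics concrete, here is a minimal sketch of mirroring. It is a simplified model, not Traefik's implementation: the `handle` function and its parameters are hypothetical, and the mirror call is synchronous here for readability, whereas a real gateway would not make the user wait on the mirror.

```python
import random


def handle(request, primary, mirror, mirror_percent, mirror_errors, rng=random.random):
    """Serve the request from primary; copy a share of traffic to mirror.

    The mirror's response is always discarded. Its failures are only
    recorded in mirror_errors so they can be inspected separately.
    """
    if rng() * 100 < mirror_percent:
        try:
            mirror(request)  # response intentionally ignored
        except Exception as exc:
            mirror_errors.append((request, repr(exc)))
    # The user only ever sees the primary's response.
    return primary(request)
```

Because nothing from the mirror reaches the client, `mirror_errors` (or, in a real deployment, the new service's own logs and traces) is the only place its failures show up.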

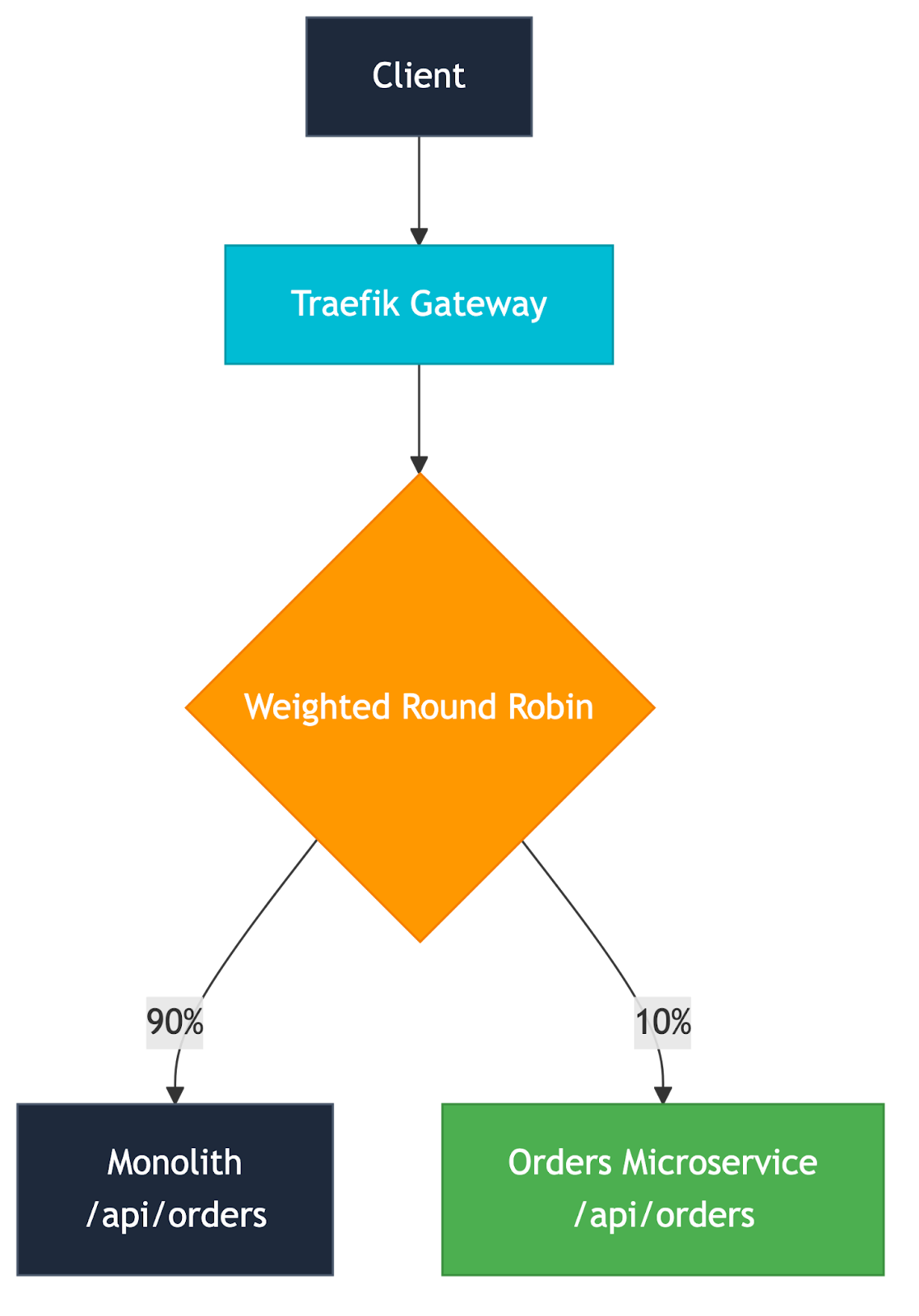

A/B Testing: Comparing Old and New

Once the new service handles mirrored traffic cleanly, you're ready to send real user traffic to it. A/B testing splits live traffic between the monolith and the microservice using weighted round robin (WRR), so you can compare their behavior side by side.
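For intuition, the sketch below implements one common variant, smooth weighted round robin, which spreads the lighter backend's turns evenly through the cycle. It illustrates the idea only and is not necessarily the algorithm Traefik uses internally; the class and backend names are hypothetical.

```python
class WeightedRoundRobin:
    """Smooth weighted round robin over named backends."""

    def __init__(self, weights):
        self.weights = dict(weights)
        self.total = sum(self.weights.values())
        if self.total <= 0:
            raise ValueError("at least one backend needs a positive weight")
        self.current = {name: 0 for name in self.weights}

    def next(self):
        # Every backend earns credit equal to its weight on each pick...
        for name, weight in self.weights.items():
            self.current[name] += weight
        # ...and the backend with the most credit serves the request,
        # then pays back the total weight.
        chosen = max(self.current, key=self.current.get)
        self.current[chosen] -= self.total
        return chosen
```

With weights `{"monolith": 9, "orders-service": 1}`, every run of ten picks sends nine requests to the monolith and one to the new service. Shifting the split is a matter of changing the two numbers.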

Sticky sessions matter here. If a user's first request goes to the new service, their subsequent requests should too; otherwise, they'll get inconsistent behavior mid-session.

- Traefik supports cookie-based stickiness at the service level, so each user gets a consistent experience even during the split.

- For situations where cookies aren't an option (API clients, mobile apps, or sessionless connections), Traefik also supports Highest Random Weight (HRW) hashing, which deterministically routes requests to the same backend based on attributes like the client's IP address. Same consistency guarantee, no cookies required.
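HRW hashing (also known as rendezvous hashing) is simple enough to sketch in a few lines. The version below is unweighted and uses SHA-256 purely for illustration; the function name and the choice of client IP as the key are assumptions, and Traefik's own implementation may differ in hash function and weighting.

```python
import hashlib


def hrw_pick(key, backends):
    """Pick the backend with the highest hash score for this key.

    The same key always maps to the same backend while the backend set
    is unchanged, so no cookie or shared session state is needed.
    """
    def score(backend):
        digest = hashlib.sha256(f"{backend}|{key}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(backends, key=score)
```

A useful property of this scheme is that adding a backend only moves the keys that now score highest on the new backend; every other client stays where it was.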

The intent of A/B testing is comparison: which implementation is better? You're gathering data to make a decision.

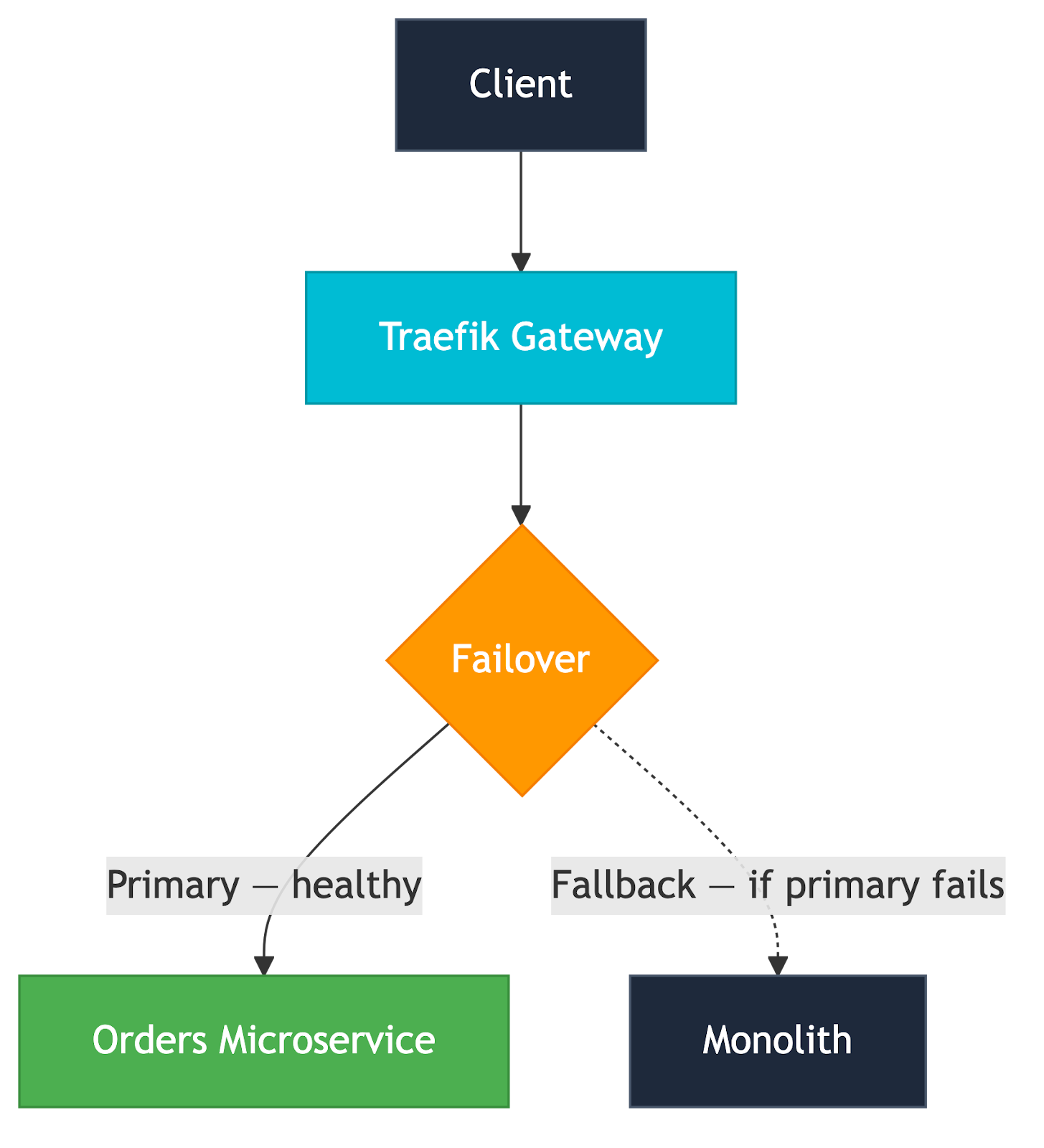

Failover: The Monolith as Your Safety Net

All the patterns above assume you're actively watching and making decisions. But what about 3 a.m. on a Saturday? Failover gives you an automatic safety net: set the new microservice as the primary and the monolith as the fallback. If the microservice goes down or fails health checks, Traefik automatically routes all traffic back to the monolith. No human intervention required.

This is different from weighted round robin. WRR splits traffic intentionally; you're choosing to send some percentage to each service. Failover is binary: use the new thing, but if it breaks, the old thing is still there. During a migration, this means you can go to 100% on the microservice while keeping the monolith warm as a fallback. It's the confidence boost that lets you make the final cutover without holding your breath.
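The contrast with WRR is easy to see in code. In this minimal sketch, with hypothetical names throughout, the routing decision depends only on the primary's health and never on a percentage:

```python
def failover_target(primary, fallback, is_healthy):
    """Return the primary while it passes health checks, else the fallback."""
    return primary if is_healthy(primary) else fallback


# Example: health is looked up from the latest (hypothetical) probe results.
probe_results = {"orders-service": True, "monolith": True}
target = failover_target("orders-service", "monolith", probe_results.get)
```

As soon as a probe marks `orders-service` unhealthy, the same call returns `monolith`, and it switches back once the probe recovers.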

Circuit Breaker: Automatic Damage Control

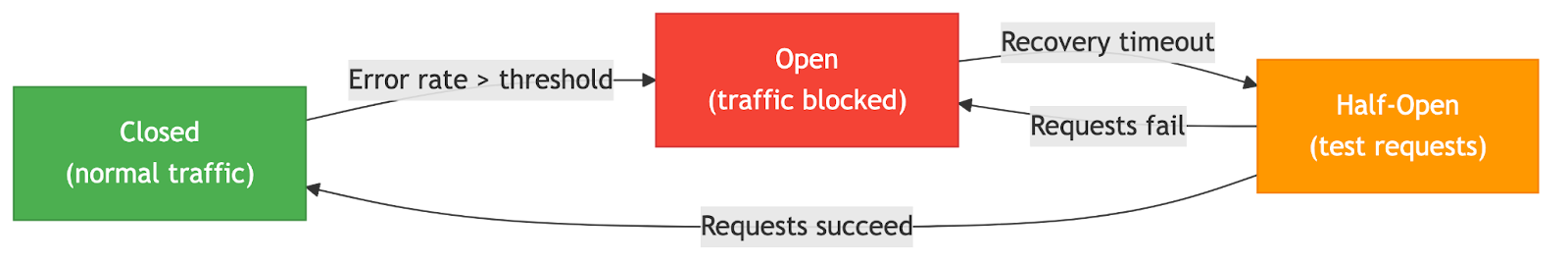

Failover handles the case where the microservice is completely down. But what about degraded performance, where the service is technically responding but 40% of requests are returning 500s? That's where the circuit breaker comes in.

Traefik's circuit breaker middleware monitors the service's error rate. When errors exceed a threshold you define (say, 30% of requests over a rolling window), the circuit "trips" and stops sending traffic to the failing service entirely. It periodically lets a few requests through to check if the service has recovered, and automatically closes the circuit once it has.
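The state machine behind that behavior can be sketched as follows. This is a simplified model, not Traefik's implementation: the class, its parameters, and the single-probe recovery rule are assumptions chosen to keep the example short.

```python
import time
from collections import deque


class CircuitBreaker:
    """Trip when the error rate over a rolling window exceeds a threshold."""

    def __init__(self, threshold, window=20, min_samples=10, cooldown_s=10.0,
                 clock=time.monotonic):
        self.threshold = threshold           # e.g. 0.3 for 30% errors
        self.results = deque(maxlen=window)  # True means the request failed
        self.min_samples = min_samples       # don't trip on too little data
        self.cooldown_s = cooldown_s         # wait before probing again
        self.clock = clock
        self.opened_at = None                # None means the circuit is closed

    def allow(self):
        """Return True if a request may be sent to the service."""
        if self.opened_at is None:
            return True
        # Open: block until the cooldown has passed, then let probes through.
        return self.clock() - self.opened_at >= self.cooldown_s

    def record(self, error):
        """Record the outcome of a request that was allowed through."""
        if self.opened_at is not None:
            if error:
                self.opened_at = self.clock()  # probe failed: restart cooldown
            else:
                self.opened_at = None          # probe succeeded: close circuit
                self.results.clear()
            return
        self.results.append(error)
        if len(self.results) >= self.min_samples:
            rate = sum(self.results) / len(self.results)
            if rate > self.threshold:
                self.opened_at = self.clock()  # trip the circuit
```

The caller checks `allow()` before each request, sends the request elsewhere when it returns `False`, and reports every outcome through `record()`.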

Pair this with failover, and you have a complete safety story: the circuit breaker detects degradation before it becomes an outage, and failover ensures traffic lands on a healthy node. During the migration, this combination means you can be aggressive about shifting traffic to new services, knowing that the system will self-heal if something goes wrong.

Canary Deployments: Shipping New Features Safely

It's worth noting that canary deployments aren't really a migration pattern; they're what you adopt after the migration. Once the orders microservice owns 100% of its traffic, every future release of that service goes out as a canary: roll out v1.1 to 5% of users, watch the metrics, increase to 50%, then cut over. The same WRR mechanism that helped you compare monolith vs. microservice now helps you ship new features safely.

Health checks on the canary version gate each step automatically: if the canary fails its health check, Traefik stops sending traffic to it. No rollback deployment, just a weight shift back to 100% stable.
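The stepwise rollout reduces to a small decision function. The sketch below is illustrative only: the function name and the step list are hypothetical, and in practice the "healthy" signal would come from the gateway's health checks plus whatever metrics you choose to gate on.

```python
def next_canary_weight(current, steps, healthy):
    """Return the canary's next traffic share, in percent.

    An unhealthy canary drops straight to 0, which sends all traffic
    back to the stable version without any redeployment.
    """
    if not healthy:
        return 0
    for step in steps:
        if step > current:
            return step
    return current  # already at the final step


# Example rollout plan from the text: 5%, then 50%, then full cutover.
ROLLOUT_STEPS = [5, 50, 100]
```

Calling it repeatedly with a healthy canary walks 0 to 5 to 50 to 100; a single failed check at any point returns 0.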

This is one of the compounding benefits of the gateway-first migration approach. The weighted routing infrastructure you built for the migration doesn't go away; it becomes your standard deployment strategy for every service you extract.

The full toolkit looks like this: a mirror to validate with zero risk, an A/B test to compare monolith vs. microservice and decide which wins, and failover plus a circuit breaker to catch problems automatically. Once the microservice owns its traffic, the same weighted routing mechanism becomes your canary strategy. Same percentage splits, different purpose: now you're shipping new features safely, not choosing between old and new. Each pattern builds on the same gateway foundation, and every step is reversible.

Additional References:

Traffic Mirroring | A/B Testing | Canary Deployments (configuration guides).

Identity-Aware Routing: Migrating by Who, Not Just What

Weighted round robin splits traffic by proportion, with no regard for who is making the request. But sometimes you don't want a blind split; you want a surgical one. Instead of sending 10% of all users to the new service, you want to send specific users: your internal team, your beta testers, your most adventurous enterprise customers.

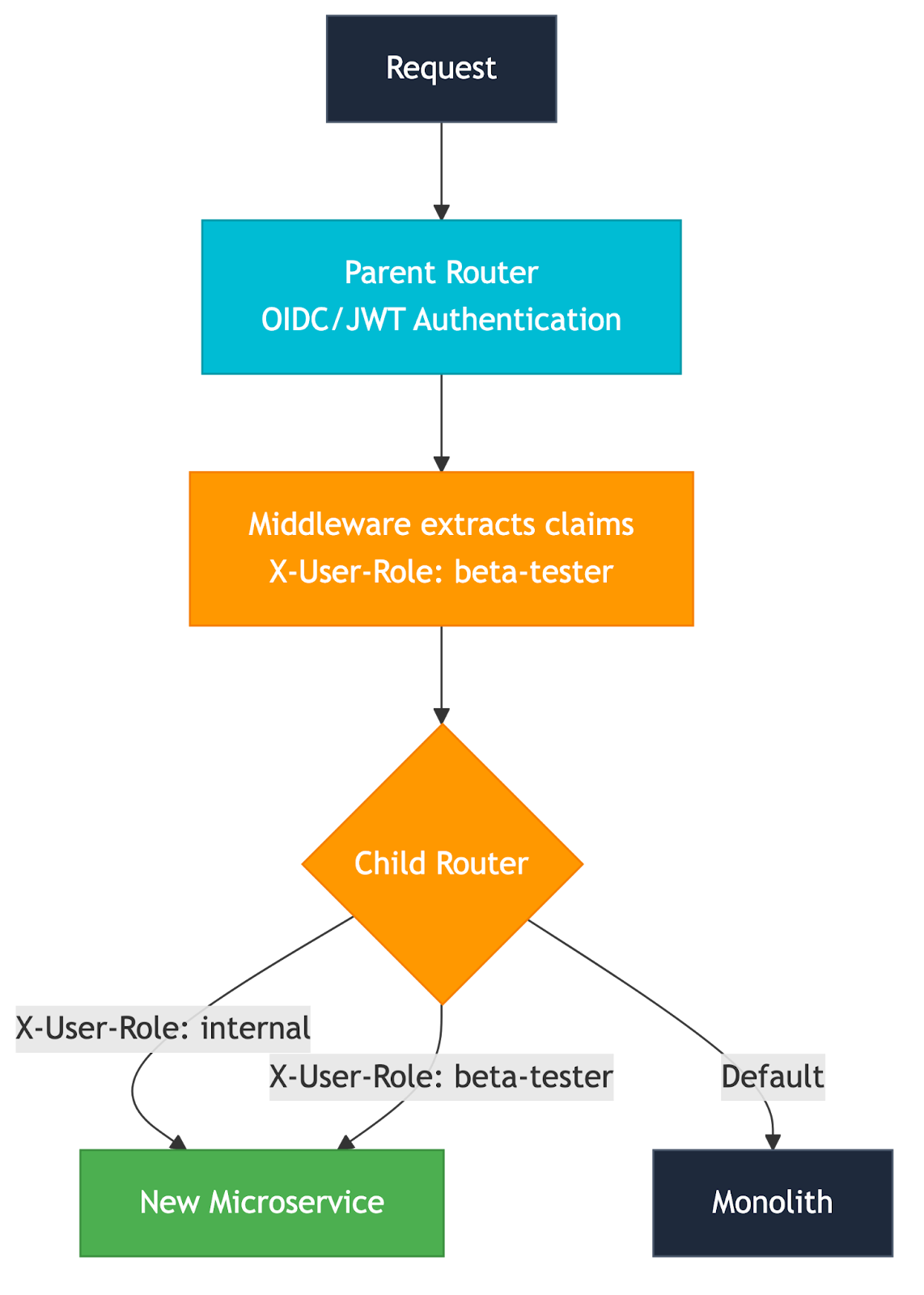

This is where Traefik Hub's multi-layer routing and identity integration come together. The idea is straightforward: the gateway already authenticates requests (remember the OIDC setup from earlier). The JWT claims contain information about who the user is, their role, their organization, and their tier. You use those claims to make routing decisions.

The mechanism is multi-layer routing. A parent router authenticates the request and extracts claims into headers. A child router matches on those headers and routes accordingly.
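The two layers can be modeled as two small functions. This is a conceptual sketch rather than Traefik Hub configuration: the `role` claim, the `X-User-Role` header, the rule table, and the backend names are all hypothetical, and the parent is assumed to receive claims that have already been verified.

```python
def parent_router(headers, verified_claims):
    """Layer 1: copy selected claims from the verified token into headers."""
    # Drop any client-supplied claim headers before setting trusted ones.
    out = {k: v for k, v in headers.items() if not k.lower().startswith("x-user-")}
    out["X-User-Role"] = str(verified_claims.get("role", ""))
    return out


# Hypothetical child rules: (required headers, backend).
CHILD_RULES = [
    ({"X-User-Role": "internal"}, "orders-service"),
    ({"X-User-Role": "beta"}, "orders-service"),
]


def child_router(headers, rules=CHILD_RULES, default="monolith"):
    """Layer 2: route on the headers set by the parent; first match wins."""
    for required, backend in rules:
        if all(headers.get(name) == value for name, value in required.items()):
            return backend
    return default
```

Widening the rollout means adding a rule to `CHILD_RULES`; pulling a segment back means removing one. Neither touches the services themselves.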

This gives you a migration strategy based on user segments. Start with your internal team: they're forgiving, and they file better bug reports. Then beta users who opted in. Then a broader cohort. Enterprise customers stay on the monolith until you're absolutely confident. You control exactly who sees the new code, and you can change the targeting at any time without a deployment.

You can even combine this with weighted round robin: route 50% of beta users to the new service and 50% to the monolith. Identity routing for the who, weighted splitting for the how much.

Additional References:

Multi-Layer Routing | Identity-Based Routing (identity-aware routing configuration and patterns).

The Triple Gate: API, AI, and MCP

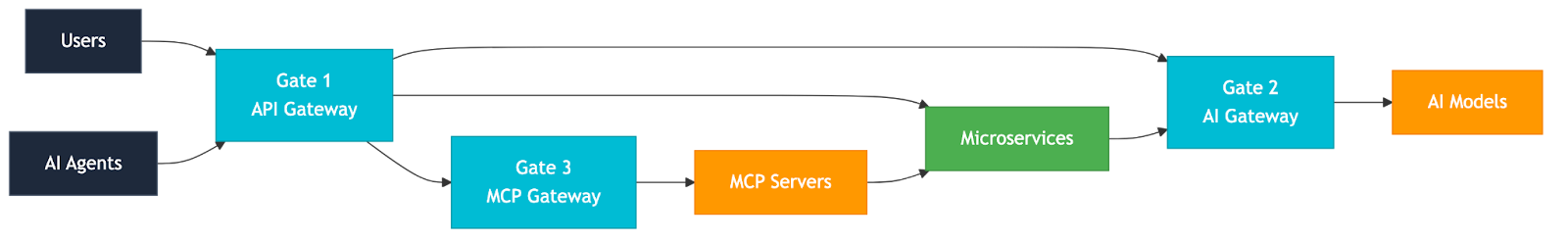

The migration from monolith to microservices isn't the end of the journey; it's the first gate. The gateway you built to control your migration is the same gateway that controls what comes next, and what comes next is a three-gate architecture where a single platform governs all your traffic: services, AI models, and AI agents.

Gate 1: API Gateway. This is where you are now. This layer handles everything we've discussed throughout this post: routing, authentication, observability, traffic splitting, and identity-aware decisions. Traefik Hub also supports the Kubernetes Gateway API standard, so as the ecosystem moves toward it, you have a native path to adopt it without re-architecting. This gate handles every request from users to services.

Gate 2: AI Gateway. Your newly extracted microservices will start calling LLMs. When they do, the gateway becomes the control plane for AI traffic too. Traefik Hub's AI Gateway routes requests to different model providers, enforces token-based rate limiting, applies content guardrails, and caches responses, all at the gateway layer. The same infrastructure that canary-tested your orders microservice now canary-tests your switch from GPT-4 to Claude. This gate handles every request from services to models.

Gate 3: MCP Gateway. The Model Context Protocol (MCP) is the emerging standard for connecting AI agents to tools and data sources. As your microservices become the tools that agents call, the gateway needs to understand MCP's stateful, session-based communication patterns. Traefik Hub's MCP Gateway routes agent-to-tool traffic, enforces tool-based access control, and maintains session affinity across requests. The services you extracted from the monolith become first-class tools in the agent ecosystem, and the gateway governs access to all of them. This gate handles every request from agents to tools.

The thread connecting all three gates is the one we started with: traffic control is migration control, and it doesn't stop at microservices. The same gateway that manages your monolith decomposition manages your AI model routing and your agent tool access. One platform, three gates, every request.

The Migration Is a Journey

The monolith doesn't die overnight, and it doesn't have to. The worst thing you can do is treat the migration as a binary event: old system off, new system on. The best thing you can do is build the infrastructure that lets you migrate incrementally, test continuously, and roll back instantly.

The API gateway is that infrastructure. It's the control plane for your migration, and for what comes after. Start with a simple pass-through. Set up observability to understand what you have. Centralize auth. Bridge your VMs and Kubernetes so the infrastructure is ready. Extract your first service behind a strangler fig route. Split traffic to test it. Use identity-aware routing to target specific user segments. And when you're ready, extend that same gateway through the triple gate (API, AI, MCP) into whatever your architecture becomes next. At every step, you're in control, and at every step, you can pause and pick the migration back up later.

The monolith took years to build. Give yourself permission to take time to break it apart safely.

Every pattern in this guide (weighted routing, mirroring, canary releases, and load-balancing strategies) is available in Traefik Hub today. To try them on your own cluster, get started with Traefik Hub.