The demands of modern businesses, especially around scalability, velocity, and agility, explain why microservice architectures are replacing monolithic applications.

The accelerated rate of microservices adoption, coupled with the continuing increase in commercial API integrations, created a new set of needs related to an organization's API architecture. The API gateway emerged to address these needs.

Let's take a closer look at what an API gateway is, what purpose it serves in a microservices architecture, and some tips and tricks to keep in mind when implementing one.

What is an API gateway and how is it different from a reverse proxy?

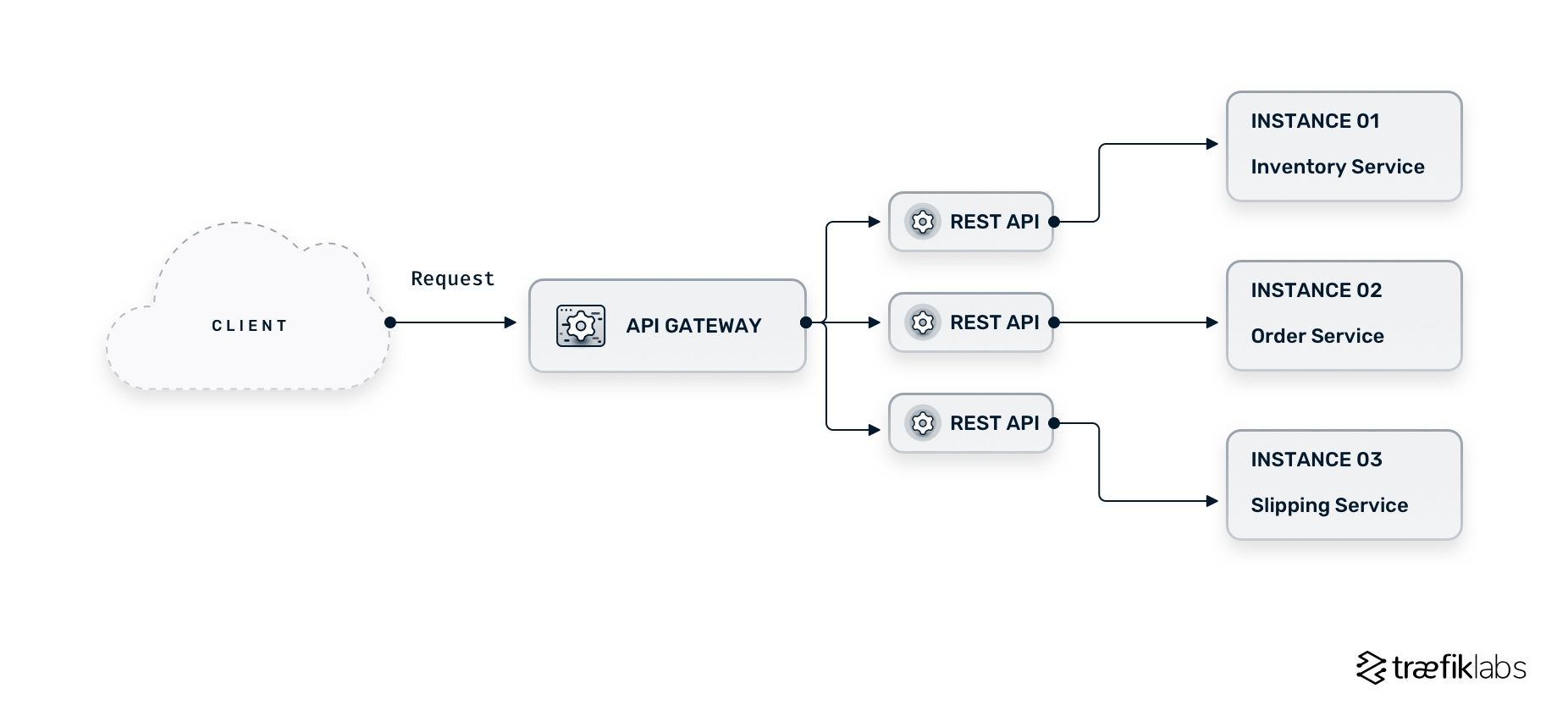

An API gateway is deployed at the edge of your infrastructure and acts as a single entry point that routes client API requests to your backend microservices. At its core, an API gateway is a reverse proxy that receives incoming client requests and directs them to the appropriate service. The diagram above illustrates where the API gateway lives in a microservices architecture.

Although an API gateway includes many of the functions found in a typical reverse proxy (e.g., request routing, load balancing, and caching), it also offers a unique set of additional features and functions. The API gateway encapsulates the internal architecture and exposes custom APIs tailored to each client.
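To make the reverse-proxy core more concrete, here is a minimal Python sketch of only the routing and load-balancing step: it maps a request path to one backend instance using longest-prefix matching and round-robin selection. The route table, service names, and addresses are hypothetical, and a real gateway would also forward the request itself, handle headers and timeouts, and check backend health.

```python
from itertools import cycle
from typing import Dict, List

# Hypothetical routing table: path prefix -> backend instances.
ROUTES: Dict[str, List[str]] = {
    "/orders": ["http://orders-1:8080", "http://orders-2:8080"],
    "/users": ["http://users-1:8080"],
}


class Gateway:
    """Toy reverse-proxy core: choose a backend URL for each request path."""

    def __init__(self, routes: Dict[str, List[str]]):
        # Check longer prefixes first so the most specific route wins.
        self._prefixes = sorted(routes, key=len, reverse=True)
        # One round-robin iterator per route gives simple load balancing.
        self._pools = {prefix: cycle(backends) for prefix, backends in routes.items()}

    def route(self, path: str) -> str:
        for prefix in self._prefixes:
            if path == prefix or path.startswith(prefix + "/"):
                return next(self._pools[prefix]) + path
        raise LookupError(f"no route for {path}")


gw = Gateway(ROUTES)
print(gw.route("/orders/42"))  # http://orders-1:8080/orders/42
print(gw.route("/orders/43"))  # http://orders-2:8080/orders/43
print(gw.route("/users/7"))    # http://users-1:8080/users/7
```

Round-robin is the simplest balancing policy; weighted selection would be the natural next step for the blue/green and canary deployments listed below.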

The primary difference between an API gateway and a reverse proxy is the ability to address cross-cutting, or system-wide, concerns. In software design, a concern is a distinct area of functionality that a system must handle, separated out from the rest of the architecture. A cross-cutting concern is one that is shared by many different system components or APIs. Here are a few examples of cross-cutting concerns:

- Configuration management

- Security

- Auditing

- Exception management

- Logging

API gateways come with a set of features that are specifically designed to address these cross-cutting concerns.
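Cross-cutting concerns are typically handled by wrapping every route in the same chain of middleware, so the logic is written once at the gateway instead of in each service. The sketch below assumes a toy handler model (a request as a dict, a response as a `(status, body)` tuple); the services, the hard-coded token, and all function names are hypothetical, and the token check is only a placeholder for real JWT, OAuth 2.0, or OIDC validation.

```python
import logging
from typing import Callable, Dict, Tuple

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("gateway")

Request = Dict[str, str]
Response = Tuple[int, str]  # (HTTP status, body)
Handler = Callable[[Request], Response]


def with_logging(next_handler: Handler) -> Handler:
    """Log every request and its resulting status in one place."""
    def handler(req: Request) -> Response:
        status, body = next_handler(req)
        log.info("%s %s -> %d", req["method"], req["path"], status)
        return status, body
    return handler


def with_auth(next_handler: Handler) -> Handler:
    """Reject unauthenticated requests before they reach any service."""
    def handler(req: Request) -> Response:
        # Placeholder check with a hypothetical token; a real gateway would
        # validate a JWT, an OAuth 2.0 access token, an API key, etc.
        if req.get("authorization") != "Bearer valid-token":
            return 401, "unauthorized"
        return next_handler(req)
    return handler


# Hypothetical backend services.
def orders_service(req: Request) -> Response:
    return 200, "order list"


def users_service(req: Request) -> Response:
    return 200, "user list"


def protect(service: Handler) -> Handler:
    # Same chain for every route; logging wraps auth, so rejections are logged too.
    return with_logging(with_auth(service))


routes = {"/orders": protect(orders_service), "/users": protect(users_service)}

print(routes["/orders"]({"method": "GET", "path": "/orders",
                         "authorization": "Bearer valid-token"}))  # (200, 'order list')
print(routes["/users"]({"method": "GET", "path": "/users"}))       # (401, 'unauthorized')
```

Adding another concern, such as auditing or exception handling, means writing one more wrapper and adding it to `protect`, without touching the services.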

What are the key features of an API gateway?

There is a core feature set developers expect from an API gateway, organized around four main pillars:

- Security

- Authentication and authorization

- Traffic management

- Traffic observability

Some solutions also offer feature sets designed for API acceleration and team collaboration. The list below summarizes the features you most commonly find in API gateway solutions.

Security

- Support for HTTP, HTTP/2, TCP, UDP, WebSockets, and gRPC

- Protocol translation and management

Authentication & Authorization

- LDAP authentication

- JWT authentication

- OAuth 2.0 authentication

- OpenID Connect (OIDC)

- HMAC authentication

Traffic Management

- Traffic mirroring

- Blue / Green & Canary deployments

- Stickiness

- Active health checks

- Middleware (circuit breakers, retries, buffering, etc.)

- Distributed in-flight request limiting

- Distributed rate limiting

API Traffic Observability

- Cluster-wide dashboard

- Distributed tracing (Jaeger, OpenTracing, Zipkin)

- Support for third-party monitoring platforms (Datadog, Grafana, InfluxDB, Prometheus, etc.)

Acceleration

- Caching

- GitOps workflows integration

Collaboration

- Developer portal with OpenAPI support

API caching

One of the key API gateway features worth exploring further is API caching. API caching, or server caching, refers to storing the results of requests on the server so that repeated requests can be answered without recomputing them. API caching can ease the burden on your infrastructure during traffic spikes, reducing latency and helping service-oriented applications scale.

The use of API caching highly depends on the needs of the application. Typically, small applications that don’t deal with many concurrent requests have little need for API caching. However, even in cases with no concurrent requests, caching can be helpful.

Consider the following scenario. You have a quoting system that includes a certain number of static parameters (for example, 5 parameters in total), and your users frequently request parameters 4 and 5. By implementing caching, your application stores the results of these common requests and doesn't have to calculate them every time. A blog is a good example of this scenario: its posts change rarely but are read often.

On the other hand, if your quotation system's results change constantly, a cached result quickly becomes outdated even if you implement caching, so the application cannot rely on the cache and has to calculate every new request.

As an application scales and handles an increasing number of concurrent requests, however, API caching can solve several emerging issues by storing frequently accessed information:

- Database response time

- CPU limitations

- Inconsistent response times

- Increased API bills
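A common way to implement API caching is a time-to-live (TTL) cache keyed by the request. The minimal sketch below assumes a single in-memory cache and a hypothetical `compute_quote` backend call. The TTL is where the trade-off from the quoting examples shows up: a long TTL suits rarely changing results, while constantly changing quotes need a very short TTL or no caching at all.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class TTLCache:
    """In-memory response cache whose entries expire `ttl` seconds after being stored."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[Hashable, Tuple[float, object]] = {}
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, key: Hashable, compute: Callable[[], object]) -> object:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]  # fresh entry: skip the backend entirely
        # Miss or expired entry: call the backend and store a fresh copy.
        self.misses += 1
        value = compute()
        self._store[key] = (now + self.ttl, value)
        return value


# Hypothetical expensive backend call for one of the static quote parameters.
def compute_quote(param: int) -> int:
    return param * 10


cache = TTLCache(ttl=60.0)
for _ in range(3):
    cache.get_or_compute(("GET", "/quote/4"), lambda: compute_quote(4))
print(cache.hits, cache.misses)  # 2 1
```

A production gateway would also bound the cache size, respect HTTP caching headers, and usually share the cache across gateway instances.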

API gateway rate limiting vs. API gateway throttling

Although the terms are often used interchangeably, API gateway rate limiting and API gateway throttling are two distinct techniques for protecting an API from overload. API throttling slows down the usage of an API to prevent overload, while API rate limiting sets a limit on the number of requests and denies any request that goes beyond that limit. More specifically:

- API throttling ensures the stability and availability of an API by temporarily limiting the number of requests it can receive within a specific time frame. If incoming requests exceed the predefined limit, the API starts returning errors for a certain period of time, rather than rejecting only the excess requests.

- API rate limiting allows an API to continue functioning normally while limiting the number of requests it can receive within a specific time frame. If incoming requests exceed the predefined limit, the API keeps serving requests within the limit and rejects only the requests that exceed it.
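The two behaviors described above can be sketched with a fixed-window counter, one of several possible algorithms (token bucket and sliding window are common alternatives). In this illustration, the rate limiter rejects only the requests above the limit in each window, while the throttle blocks every request for a cooldown period once the limit is exceeded. The class names and parameters are hypothetical, and the state lives in memory on a single instance; distributed rate limiting would need state shared across gateway instances.

```python
import time
from typing import Callable


class RateLimiter:
    """Fixed-window rate limiting: reject only the requests above `limit` per window."""

    def __init__(self, limit: int, window: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit, self.window, self.clock = limit, window, clock
        self.window_start = clock()
        self.count = 0

    def allow(self) -> bool:
        now = self.clock()
        if now - self.window_start >= self.window:
            # A new window has started: reset the counter.
            self.window_start, self.count = now, 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False  # only this excess request is rejected


class Throttle:
    """Throttling: exceeding the limit blocks all requests for `cooldown` seconds."""

    def __init__(self, limit: int, window: float, cooldown: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limiter = RateLimiter(limit, window, clock)
        self.cooldown, self.clock = cooldown, clock
        self.blocked_until = float("-inf")

    def allow(self) -> bool:
        now = self.clock()
        if now < self.blocked_until:
            return False  # still cooling down, even if the window has reset
        if self.limiter.allow():
            return True
        self.blocked_until = now + self.cooldown
        return False
```

The clock is injectable so both behaviors can be compared deterministically: after the limit is hit, the rate limiter accepts requests again as soon as the next window starts, while the throttle keeps refusing them until the cooldown ends.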

API gateway vs. API management

While the API gateway is a piece of software deployed at the edge of your infrastructure, acting as the middleman between client applications and a set of microservices, API management is a much broader concept that encompasses the entire API lifecycle, including creating, publishing, documenting, monitoring, and securing APIs.

Platforms that provide API management often come with built-in API gateways, alongside other features and functionalities, including:

- API design, development, and testing

- API publishing and versioning to control the release cycle of API versions

- API analytics that track API usage, such as the number of requests, errors encountered, etc.

- API monetization that allows organizations to expose APIs to external parties and generate revenue

- API documentation and developer portal

- API security that provides access control features for APIs (e.g., authentication and authorization, security policies, encryption)

The benefits of an API gateway and why your microservices need one

The feature sets described earlier address cross-cutting concerns that a simple reverse proxy wouldn't normally be able to handle. By centralizing processes and configuration, API gateways address the most common microservices issues: security and availability. Features like TLS encryption, authentication, and authorization address security issues in microservices architectures, while features like rate limiting, retries, and circuit breakers help ensure availability for applications and services.
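A circuit breaker stops the gateway from repeatedly calling a backend that is already failing, which protects availability for the rest of the system. The minimal, single-threaded sketch below assumes a consecutive-failure threshold and a fixed reset timeout; after the timeout, one trial call (the "half-open" state) decides whether the circuit closes again. The class and parameter names are hypothetical, and some gateways instead trip on conditions such as an error ratio or a latency threshold.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the circuit is open and calls fail fast."""


class CircuitBreaker:
    """Closed -> open after `max_failures` consecutive failures;
    open -> half-open after `reset_timeout` seconds; one trial call decides."""

    def __init__(self, max_failures: int, reset_timeout: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures, self.reset_timeout, self.clock = max_failures, reset_timeout, clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("backend marked unavailable; failing fast")
            # Half-open: let this call through as a probe.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Failing fast while the circuit is open gives the backend time to recover; retries would sit inside `fn` or around `call`, and should stop as soon as `CircuitOpenError` is raised.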

Implementing an API gateway for your microservices comes with a number of benefits:

- Expose and connect your services from the moment they're deployed, using any supported protocol, and automatically encrypt communications with TLS.

- Instantly and consistently secure APIs by offloading the complexity of authentication and authorization to the API gateway.

- Support enterprise application deployment at scale using advanced traffic management.

- Troubleshoot faster and gain better insight into the health of your microservices using real-time metrics and analytics.

- Use embedded caching to improve API response time.

- Use embedded developer portals to document your APIs.

- Monetize APIs with the help of an API gateway.

Things to consider when implementing an API gateway

API gateways benefit most microservices-based applications, but implementing one comes with a few notes of caution.

Don’t let an API gateway solution drive your architecture

With the rich set of capabilities offered in API gateways, it’s tempting to deploy features and configurations before they’re actually needed. An API gateway can serve an environment with 10, 100, or 1,000 microservices; however, depending on the size of the application, you don't always need to use the entire feature set. API gateway solutions should be used to help and improve your microservices architecture, not drive it.

Before you start adding authentication rules to every route or creating credentials and adding traceability for everything, take a step back and assess whether your application, in its current state, really needs these functions. Premature steps and configurations flood the system with information of little value.

Just because the product can do something doesn't mean you need to use the feature. Most API gateways offer a common set of enabling features and a smaller set of niche features. Building your app around the niche features unnecessarily complicates the application.

Diligent logs and metrics can save you a lot of trouble

Performance impact is a major concern when introducing an API gateway into the application architecture or to your team. One recurring problem is the tendency to blame the tool for any issue, especially slow response times or request errors. This is why keeping metrics and logs is essential. If performance issues arise after implementing an API gateway, make it your first priority to analyze the logs and metrics for the APIs themselves before attributing the problem to the gateway.
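One way to check whether slowness comes from the gateway or from the APIs behind it is to split each request's latency into the time spent waiting on the upstream service and the remaining gateway overhead. The sketch below assumes hypothetical access-log records with `total_ms` and `upstream_ms` fields and made-up sample values; real gateways expose similar timings under their own field names, so check your gateway's access-log documentation.

```python
from statistics import median

# Hypothetical access-log records (made-up sample values, in milliseconds):
# total time seen by the gateway, and time spent waiting on the upstream service.
records = [
    {"route": "/orders", "total_ms": 212.0, "upstream_ms": 205.0},
    {"route": "/orders", "total_ms": 198.0, "upstream_ms": 190.0},
    {"route": "/users", "total_ms": 35.0, "upstream_ms": 31.0},
]


def latency_breakdown(records):
    """Split median latency per route into upstream time and gateway overhead."""
    by_route = {}
    for r in records:
        by_route.setdefault(r["route"], []).append(r)
    report = {}
    for route, rs in by_route.items():
        upstream = median(r["upstream_ms"] for r in rs)
        overhead = median(r["total_ms"] - r["upstream_ms"] for r in rs)
        report[route] = {"upstream_ms": upstream, "overhead_ms": overhead}
    return report


for route, stats in latency_breakdown(records).items():
    print(route, stats)
```

When upstream time dominates and overhead stays small, as in these sample records, the service behind the gateway is the first place to look. In practice, higher percentiles such as p95 or p99 are often more revealing than the median.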

Don’t overlook API governance

Technical tools often provide everything you need to manage users and their rights over APIs, policies, etc., but internal team and company organization remain crucial, and API governance is an integral part of that organization. Lifecycles, intra- and inter-organizational integrations, visibility, and security are only a few of the responsibilities under API governance.

Internally promote the adoption of an API gateway

Teams do not always find powerful tools like API gateways easy to handle. API gateways often add complexity to architectures with new behaviors, new processes, new questions, etc., which makes troubleshooting a bit more complicated.

Simply adding an API gateway to the current architecture is not enough; it's necessary to onboard teams and raise their awareness of new concepts. Providing training and helping manage the impact of change are key to a successful adoption process.

Summary

As microservices replace monolithic applications, the need for API gateway solutions will only increase. Investing in the right solution is one of the most important factors for a successful microservices adoption process.

API gateway features successfully address the most common issues of microservices architectures but come with the risk of creating bottlenecks. Properly identifying the problems you want to solve, as well as the appropriate features that will help you achieve that, is important for a smooth implementation and use of an API gateway.
