The Five Levels of AI Sovereignty: A Maturity Model for Infrastructure Control

Not all sovereignty is created equal. Here's how to figure out where your organization actually stands, and where it needs to be.

We regularly talk to enterprises that tell us they have "sovereign AI infrastructure." Then we ask a few questions:

- What happens if your internet connection goes down?

- Where does your AI safety filtering actually run?

- If you wanted to move to a different cloud provider next quarter, how much would you have to rewrite?

The answers are usually uncomfortable. The "sovereign" infrastructure turns out to depend on a SaaS control plane in Virginia. The AI guardrails make an API call to a model hosted by a hyperscaler. The governance policies are configured in a vendor console that can't be exported.

This isn't sovereignty. It's sovereignty theater.

The word has become so overloaded that it's nearly meaningless. Data residency gets called sovereignty. Hybrid clouds get called sovereignty. "We'll run the data plane in your VPC" gets called sovereignty. None of these are wrong, exactly. But they describe very different levels of control, and conflating them is creating real problems for organizations trying to make architecture decisions.

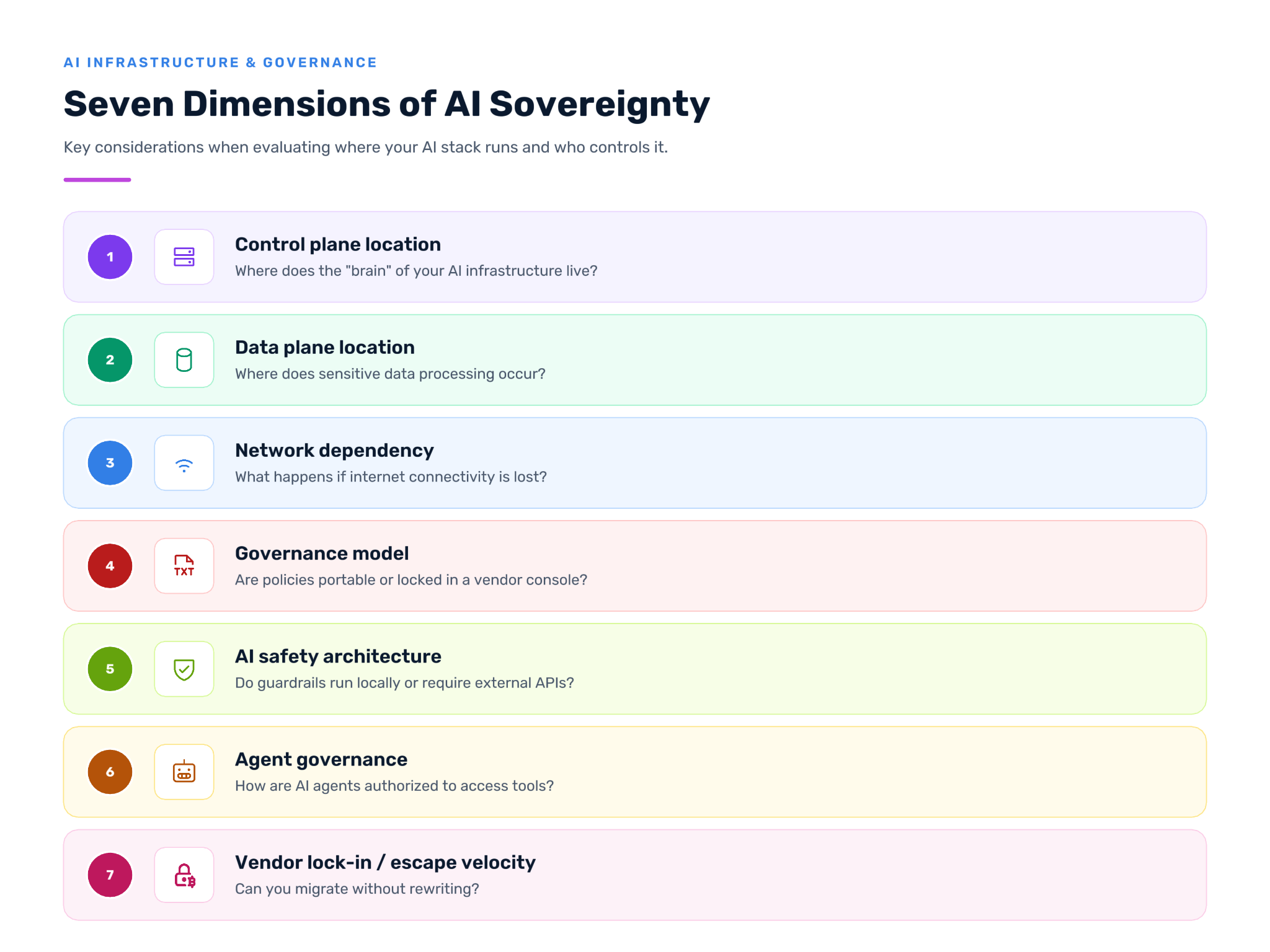

So we built a framework to make the distinctions explicit. We're calling it the AI Sovereignty Maturity Model. It has two moving parts: five levels that describe what sovereignty looks like at each stage, and seven dimensions that show where to look when you're assessing your own architecture. It's not a sales tool; it's a diagnostic tool. We use it internally to understand where prospects actually are, and we're publishing it because the industry needs a common language for these conversations.

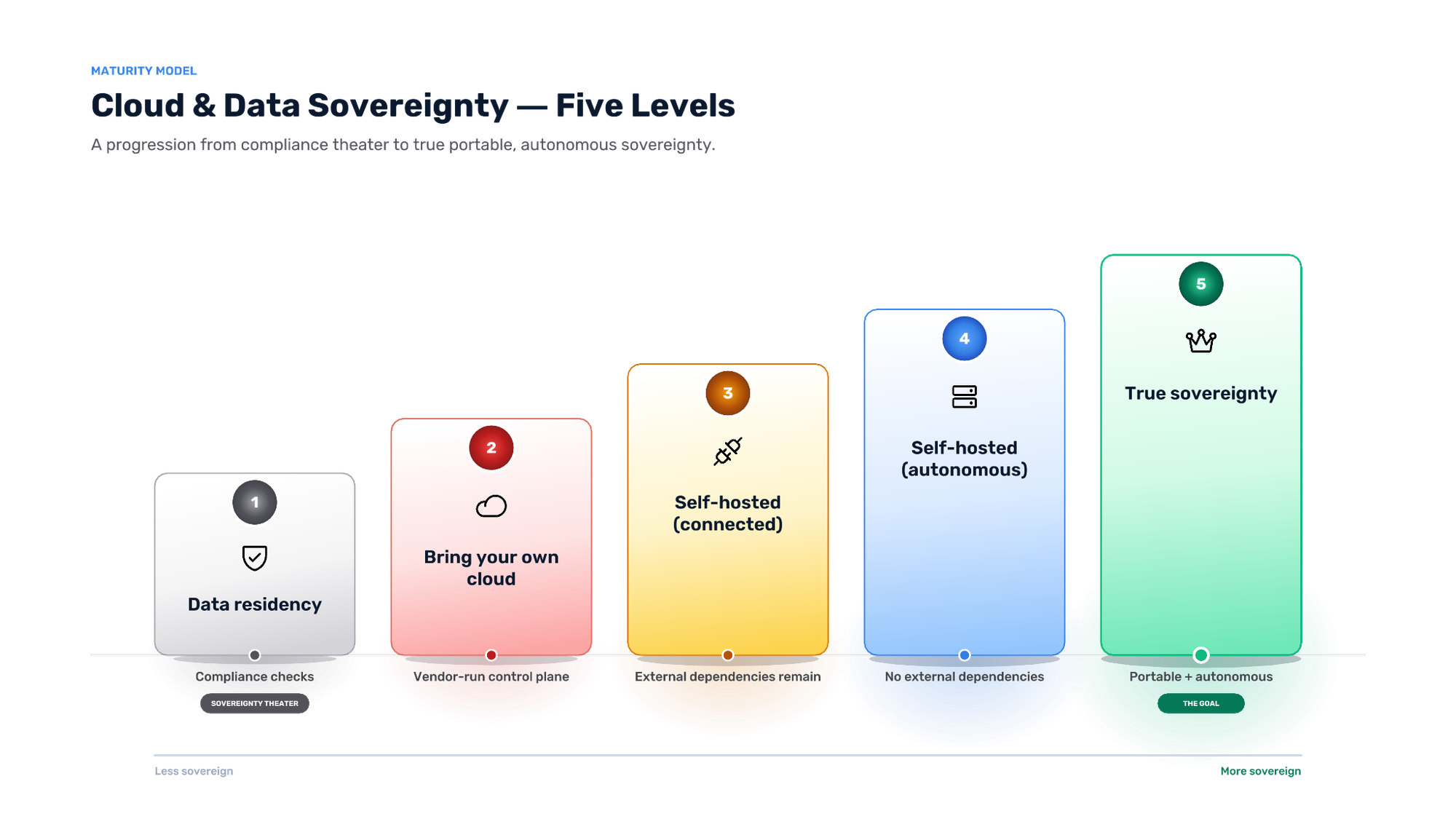

From Compliance Theater to True Control

The model defines five levels. Each one represents a meaningfully different relationship between an organization and its AI infrastructure.

Level 1: Data Residency

Data is stored in a specific geography. A Frankfurt data center. A US-East region. A specific availability zone that satisfies compliance requirements.

But the control plane managing that data lives somewhere else. The AI models processing queries make calls to external APIs. The policies governing access are configured in a vendor's cloud console.

Level 1 satisfies a checkbox, but it does not provide control. If the vendor has an outage, operations stop. If a geopolitical event disrupts connectivity, operations stop. If the vendor changes their terms or pricing, there's no recourse.

Most organizations that claim sovereignty are actually here.

Level 2: Bring Your Own Cloud

The data plane runs in the customer's environment. Usually their own AWS, Azure, or GCP account. This is a real improvement over Level 1. Cost economics are better because you're using your own committed spend and enterprise discounts. Latency is better because the data doesn't leave your environment for processing. Compliance posture is cleaner because your security team can apply their own controls to the data plane.

But the control plane is still external. The vendor operates the "brain" as a managed service. Configuration, orchestration, policy management, and often authentication all depend on that external connection.

This is where most "hybrid" and "BYOC" architectures land. It's a legitimate improvement over fully hosted SaaS. But it's not sovereignty. If the vendor's control plane goes down, your data plane keeps running on its last known configuration, but you can't update policies, push new routes, or respond to changing conditions. If the vendor decides to change the API, deprecate a feature, or raise prices, you're along for the ride.

Level 3: Self-Hosted (Connected)

Both the control plane and the data plane run on the customer's infrastructure. This could be on-premises, in a private cloud, or in a customer-managed public cloud account. The customer operates the full stack.

But external dependencies remain. Licensing validation that phones home. Telemetry that gets sent to the vendor. AI safety checks that call cloud-hosted models. Software updates that require connectivity to pull.

Level 3 organizations have meaningful control over their infrastructure on a day-to-day basis. But they're not independent. An internet outage degrades operations. An air-gapped deployment isn't possible without punching holes in the egress firewall. The architecture has hidden connective tissue to the outside world.

We see many financial services organizations here. They want to operate on-premises for compliance, but their architecture still has dependencies that would fail an air-gap test.

Level 4: Self-Hosted (Autonomous)

The full stack runs on customer infrastructure with no external connections required. None.

Internet connectivity is optional. You can plug in for updates when you want them, but operations continue indefinitely without any vendor communication. Licensing works offline. Governance is policy-as-code that lives in your repo. AI safety systems (guardrails, content filters, jailbreak detection) run on local models rather than using cloud APIs.

If you pull the network cable, everything keeps working.

This is what defense and intelligence organizations require for classified workloads. It's increasingly what healthcare organizations need for sensitive AI processing. It's what any organization should want if they're serious about operational resilience.

Level 5: True Sovereignty

Level 4 capabilities, plus full portability.

This is the distinction that matters most and gets talked about least. An organization can be fully self-hosted and autonomous (Level 4) but still locked into proprietary formats, vendor-specific APIs, and architectures that can't be migrated without a rewrite.

Level 5 means escape velocity. This is what cloud native architecture was always supposed to deliver: the same configuration that runs in AWS runs on-premises. The same policies that work in your US deployment work in your EU deployment. If you decide to change vendors, or change clouds, or bring everything in-house, you can migrate your entire stack without rearchitecting.

You own the architecture, not just the deployment.

This is rare. Most vendors don't want you to have it because it eliminates lock-in. But it's the only level that provides true strategic optionality.
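One way to read the five levels is as a ladder of yes/no questions, where each level assumes the ones below it. The sketch below encodes that reading in Python. The class, the field names, and the "level 0" return value are all hypothetical labels introduced for illustration; the model itself only defines Levels 1 through 5.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Architecture:
    # Hypothetical field names: one yes/no question per level.
    data_in_required_geography: bool
    data_plane_in_customer_env: bool
    control_plane_in_customer_env: bool
    runs_with_network_unplugged: bool
    migrates_without_rewrite: bool


def sovereignty_level(arch: Architecture) -> int:
    """Return the highest level whose question, and every one below it, is a yes.

    Returns 0 (not a level in the model) if even data residency is not met.
    """
    checks = [
        arch.data_in_required_geography,     # Level 1: Data Residency
        arch.data_plane_in_customer_env,     # Level 2: Bring Your Own Cloud
        arch.control_plane_in_customer_env,  # Level 3: Self-Hosted (Connected)
        arch.runs_with_network_unplugged,    # Level 4: Self-Hosted (Autonomous)
        arch.migrates_without_rewrite,       # Level 5: True Sovereignty
    ]
    level = 0
    for passed in checks:
        if not passed:
            break  # a "no" caps the level, whatever comes after it
        level += 1
    return level


byoc = Architecture(True, True, False, False, False)
on_prem_phoning_home = Architecture(True, True, True, False, False)

print(sovereignty_level(byoc))                  # 2
print(sovereignty_level(on_prem_phoning_home))  # 3
```

The early exit in the loop is the point of the sketch: a portable configuration format does not lift an architecture above Level 2 if the control plane is still external.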

Knowing the levels exist is one thing. Knowing where your own architecture actually sits on them is another. That's the job of the seven dimensions. Each one is a place where sovereignty either holds or quietly fails, and assessing them is how you figure out your real level. There's also a rule about how they combine that catches most organizations off guard, but that comes later. First, the dimensions themselves.

Where Dependencies Hide

Each dimension matters on its own. The tables below show what different situations look like at different levels. The five-level definitions above remain the source of truth; these tables are shortcuts to help you locate yourself quickly. Find the row that describes your situation, and the right column tells you what level that puts you at.

1. Control Plane Location

Where does the "brain" of your AI infrastructure live?

This is usually the dimension where organizations overestimate their level. "We run on-premises" doesn't mean Level 4 if every authentication request routes through an external identity provider.

2. Data Plane Location

Where does the actual processing of sensitive data occur?

This is the dimension most vendors emphasize because it's the easiest to solve. BYOC architectures get you to Level 2 on this dimension. But it's only one of seven.

3. Network Dependency

What happens if your internet connection goes down?

This is the test we use most often. If the answer is "we'd have problems," that tells you where the hidden dependencies are.
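Before pulling the cable for real, the same test can be run on paper as a dependency inventory. The following is a minimal sketch, assuming you can list each dependency and answer two questions about it; the `Dependency` type, its field names, and the example entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str
    needs_internet: bool   # does the call leave your network?
    on_request_path: bool  # is it needed to serve or govern live traffic?


def fails_when_offline(deps):
    """Names of dependencies that would break operations with the network down."""
    return [d.name for d in deps if d.needs_internet and d.on_request_path]


# Hypothetical inventory for a self-hosted deployment.
inventory = [
    Dependency("license validation", needs_internet=True, on_request_path=True),
    Dependency("guardrail model", needs_internet=True, on_request_path=True),
    Dependency("policy store", needs_internet=False, on_request_path=True),
    Dependency("software updates", needs_internet=True, on_request_path=False),
]

print(fails_when_offline(inventory))
# ['license validation', 'guardrail model']
```

In this made-up inventory, software updates need connectivity but are not on the request path, so they do not count against offline operation. License validation and the guardrail model do.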

4. Governance Model

How are policies defined, enforced, and audited?

This matters more than most organizations realize. If your governance can't move with you, you don't own it.
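To make "governance that moves with you" concrete, here is a minimal sketch of a policy kept as plain data that can be exported, committed to a repository, and reloaded in another environment with identical results. The policy schema, role names, and model name are hypothetical, and a real policy engine would be richer than this.

```python
import hashlib
import json

# Hypothetical policy: plain data, no vendor console required.
policy = {
    "version": 1,
    "rules": [
        {"role": "analyst", "model": "internal-llm", "allow": True},
        {"role": "contractor", "model": "internal-llm", "allow": False},
    ],
}


def export_policy(p: dict) -> str:
    """Serialize deterministically so the file diffs cleanly in version control."""
    return json.dumps(p, sort_keys=True, indent=2)


def fingerprint(text: str) -> str:
    """Content hash an auditor can compare across environments."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def is_allowed(p: dict, role: str, model: str) -> bool:
    """Deny unless a rule explicitly allows this role/model pair."""
    return any(
        r["allow"] and r["role"] == role and r["model"] == model
        for r in p["rules"]
    )


exported = export_policy(policy)  # what gets committed to the repo
restored = json.loads(exported)   # what another environment loads
```

If the fingerprint of the restored policy matches the original and `is_allowed` gives the same answers in both places, the policy has moved without being rewritten.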

5. AI Safety Architecture

Where do guardrails and safety checks execute?

This is becoming critical. Organizations are deploying guardrails to satisfy responsible AI requirements, but those guardrails often introduce the very dependencies sovereignty is supposed to eliminate. If your jailbreak detection calls a cloud API, you've added latency to every request, expanded your attack surface, and potentially violated data sovereignty requirements.

We see organizations that are Level 4 on everything else fall to Level 3 here because their safety stack wasn't designed for offline operation.

6. Agent Governance

How are AI agents authorized to access tools and take actions?

This dimension didn't exist two years ago. Now it's arguably the most important.

The rise of agentic AI (systems that don't just generate text but take actions via protocols like MCP) breaks traditional security models. An agent might be authenticated, but that doesn't mean every action it takes should be authorized. Agents can hallucinate. Agents can be manipulated by adversarial prompts. Agents can explore solution paths you didn't anticipate.

The security model needs to shift from "authenticate once and trust" to "authorize continuously at the gateway." And that gateway needs to run on your infrastructure. If you're calling an external service to decide whether an agent can read a database, you've introduced latency, dependency, and metadata leakage into every tool call.
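The shift from "authenticate once" to "authorize continuously" can be sketched as a gateway function that checks every tool call against a policy held locally. This is an illustration under stated assumptions, not a reference design: the `ToolCall` type, the allow-list, and the agent and tool names are all hypothetical, and nothing here is specific to MCP.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    agent: str
    tool: str
    action: str


# Hypothetical allow-list held in local memory, so the authorization
# decision never leaves the customer's infrastructure.
ALLOWED = {
    ("billing-agent", "invoices_db", "read"),
    ("billing-agent", "email", "send"),
}


def authorize(call: ToolCall) -> bool:
    """Check one tool call against the local policy; deny by default."""
    return (call.agent, call.tool, call.action) in ALLOWED


def gateway(call: ToolCall, execute):
    """Authorize every call, not just the session, before running the tool."""
    if not authorize(call):
        raise PermissionError(f"{call.agent} may not {call.action} {call.tool}")
    return execute(call)
```

An authenticated agent that asks to read `invoices_db` gets through; the same agent asking to delete from it raises `PermissionError`, because authentication alone grants nothing. The lookup is local, so it adds no external round trip to the tool call.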

Most organizations haven't built this yet. The ones who have are differentiated.

7. Vendor Lock-in / Escape Velocity

Can you migrate your entire stack without rewriting?

This is escape velocity. It's what turns sovereignty from a compliance checkbox into strategic leverage.

When you have Level 5 portability, every vendor conversation changes. You're not negotiating from captivity. You're negotiating as a partner with options. That optionality has real monetary value in every contract renewal.

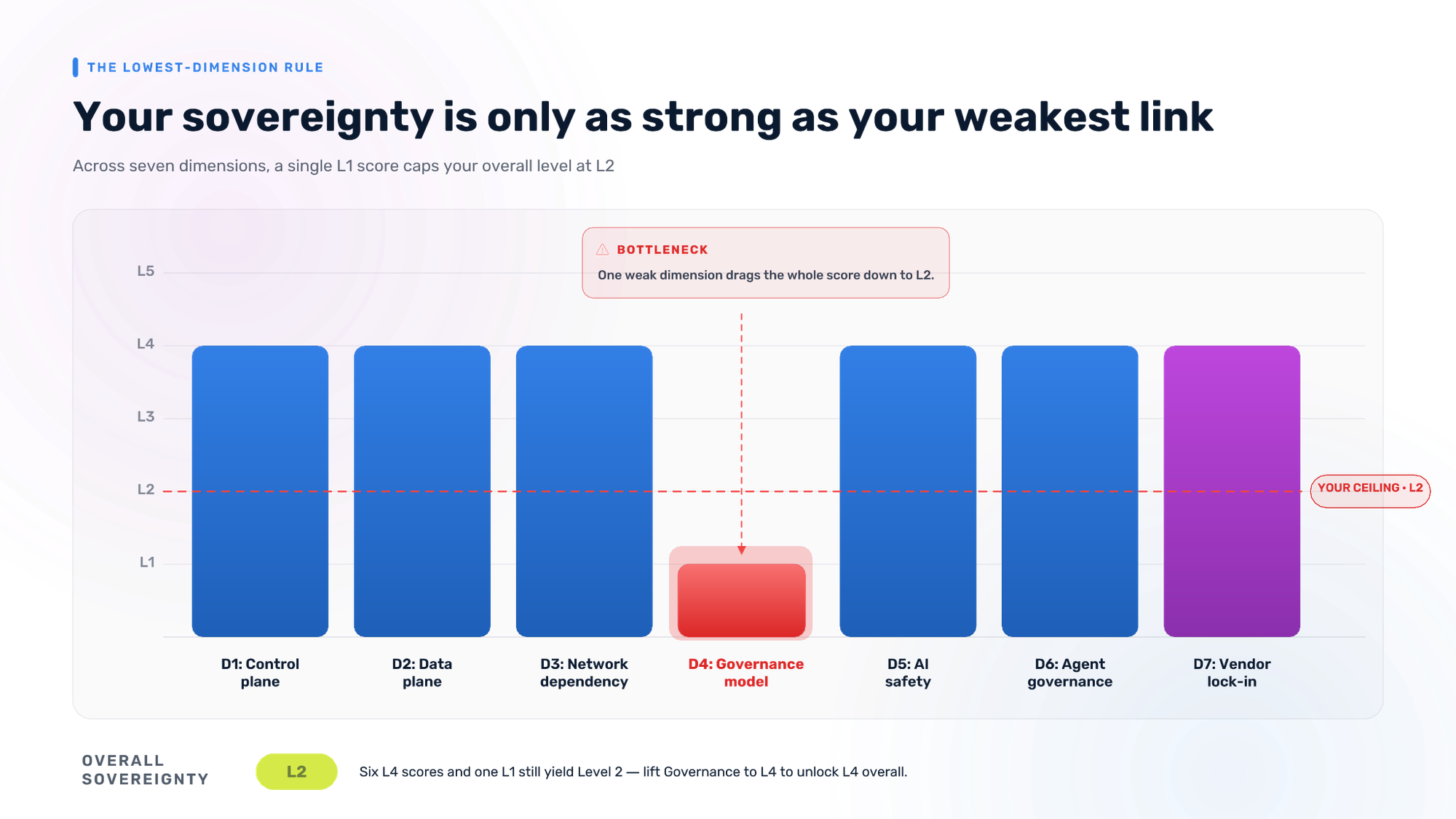

The Rule That Changes Everything

Now for the rule we promised earlier: your overall sovereignty level is determined by your lowest-scoring dimension.

An organization can be Level 4 on control plane location, Level 4 on data plane location, and Level 4 on network dependency. But if its governance model requires policies to be written in the vendor's console, where they can't be version controlled, it is Level 2 overall. That single dependency defines its ceiling.

This is why "we run the data plane in your VPC" isn't enough. If the control plane is external, you're Level 2. If the safety checks are external, you're Level 3. If you can't migrate without rewriting, you're Level 4.

The model forces you to look at every dependency, not just the ones vendors want to talk about.
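In code, the rule is a minimum rather than an average. The sketch below assumes each of the seven dimensions has been scored from 1 to 5; the dimension keys and the function name are hypothetical labels chosen for illustration.

```python
# Hypothetical keys for the seven dimensions described above.
DIMENSIONS = (
    "control_plane_location",
    "data_plane_location",
    "network_dependency",
    "governance_model",
    "ai_safety_architecture",
    "agent_governance",
    "vendor_lock_in",
)


def overall_level(scores: dict) -> tuple:
    """Return (overall level, list of dimensions that set the ceiling)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    for d in DIMENSIONS:
        if not 1 <= scores[d] <= 5:
            raise ValueError(f"{d} must be between 1 and 5, got {scores[d]}")
    level = min(scores[d] for d in DIMENSIONS)  # lowest score wins; no averaging
    limiting = [d for d in DIMENSIONS if scores[d] == level]
    return level, limiting


scores = {d: 4 for d in DIMENSIONS}
scores["governance_model"] = 2
print(overall_level(scores))  # (2, ['governance_model'])
```

Returning the limiting dimensions alongside the level is a design choice for the sketch: the number tells you where you stand, and the list tells you what to fix first.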

Assessing Your Position

Here's a simple worksheet. Rate your organization on each dimension. Be honest. The goal isn't to get a high score. The goal is to understand where you are and where the gaps are.

Note: Your overall level is your lowest dimension. If you're Level 4 on six dimensions and Level 2 on one, you're Level 2. There is no averaging. There is no partial credit.

What "Good" Looks Like by Industry

Different industries have different requirements. Here's what we see in practice:

Defense and Intelligence: Level 4-5 required for classified workloads. Air-gapped operation is non-negotiable. Most DoD contracts now explicitly require AI infrastructure capable of operating offline. ITAR and FedRAMP requirements are pushing even unclassified workloads toward Level 4.

Healthcare: Moving toward Level 3-4 for AI processing of PHI. HIPAA doesn't explicitly require it yet, but the Office for Civil Rights (OCR) guidance is trending toward "know where your AI runs." Organizations using AI for clinical decision support are increasingly uncomfortable with cloud-dependent architectures.

Financial Services: Level 3-4 is typical for production AI. The pattern we see most often: develop in the cloud (for speed), deploy on-premises (for compliance). This requires Level 4+ governance portability to avoid maintaining two separate configurations. Level 5 is becoming a competitive differentiator for firms that want to move fast without accumulating technical debt.

Public Sector: Varies widely by jurisdiction. EU sovereign cloud mandates are pushing toward Level 4. APAC data localization requirements are creating demand for Level 3+. The US federal government is increasingly Level 4 for AI workloads, especially post-executive order.

Enterprise (General): Most are Level 2, think they're Level 3, and should be planning for Level 4. The organizations moving fastest on AI are also accumulating the most sovereignty debt. Shadow agents, scattered API keys, ungoverned model access. At some point, the bill comes due.

Why This Matters Now

The AI governance conversation has shifted faster than most organizations realize.

A few months ago, boards were asking, "How do we adopt AI?" Now they're asking, "How do we control AI?" That's a different question, and it requires different infrastructure.

We're seeing it in every enterprise conversation. The initial inquiry is about capability: can you do AI gateway, can you do MCP governance, can you integrate with our LLM providers? But within a few minutes, the conversation shifts to control. Where does this run? What happens if the connection goes down? Can we operate this in our air-gapped environment? How do we avoid getting locked in?

The organizations that build for sovereignty now will have options. The organizations that optimize for convenience will spend the next three years explaining to auditors and boards why they can't answer basic questions about where their AI runs and who controls it.

The maturity model doesn't make the decision for you. But it makes the tradeoffs explicit. And in our experience, once executives see the tradeoffs clearly, they rarely choose Level 2 when they could have Level 4 or Level 5.

For the strategic argument behind why sovereignty is operating leverage (not just compliance), read AI Sovereignty: Why Control Is Your Ultimate Operating Leverage.