The CISO's Seven-Layer Cake: A Modern Defense Blueprint for the Frontier AI Era

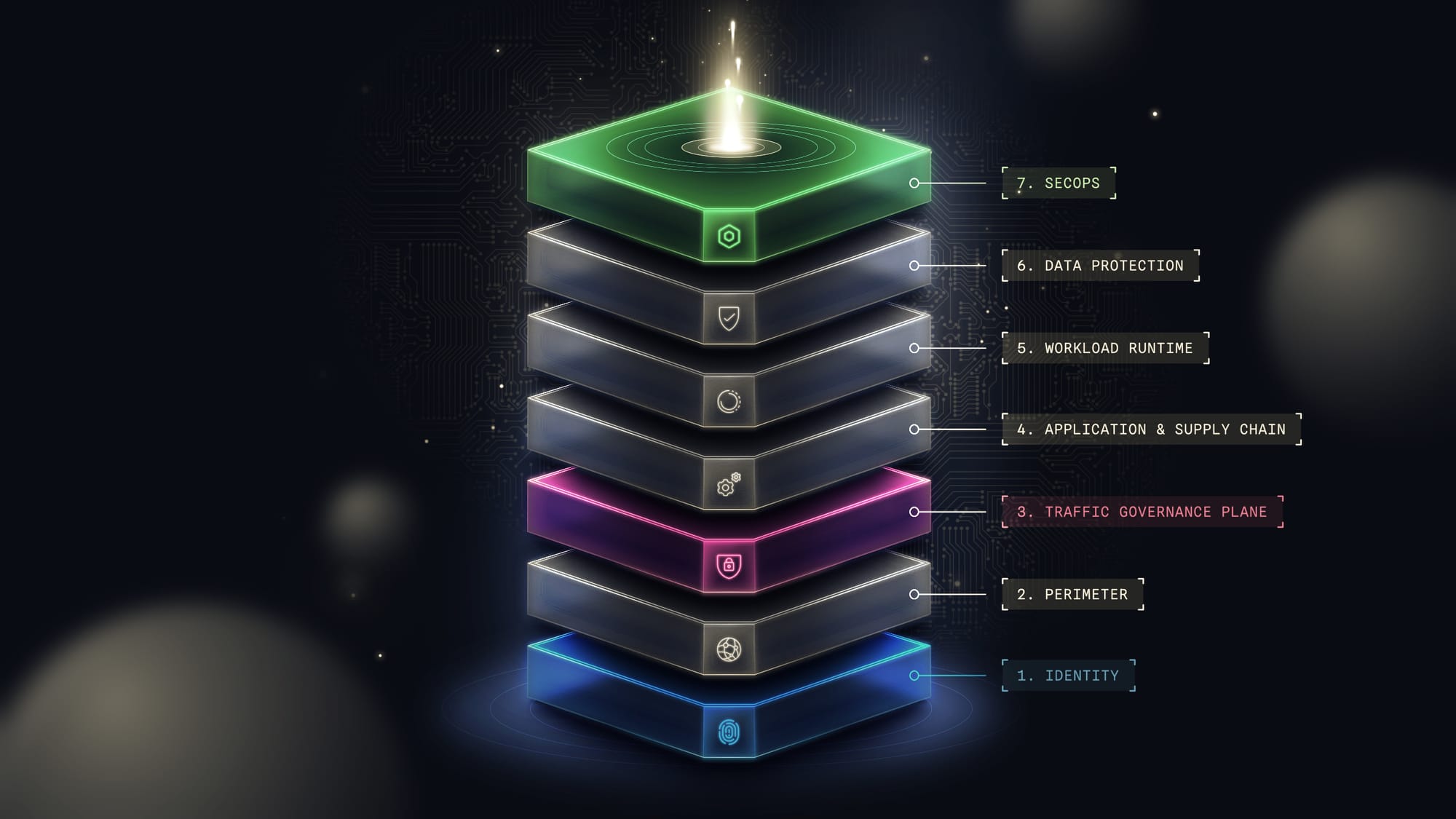

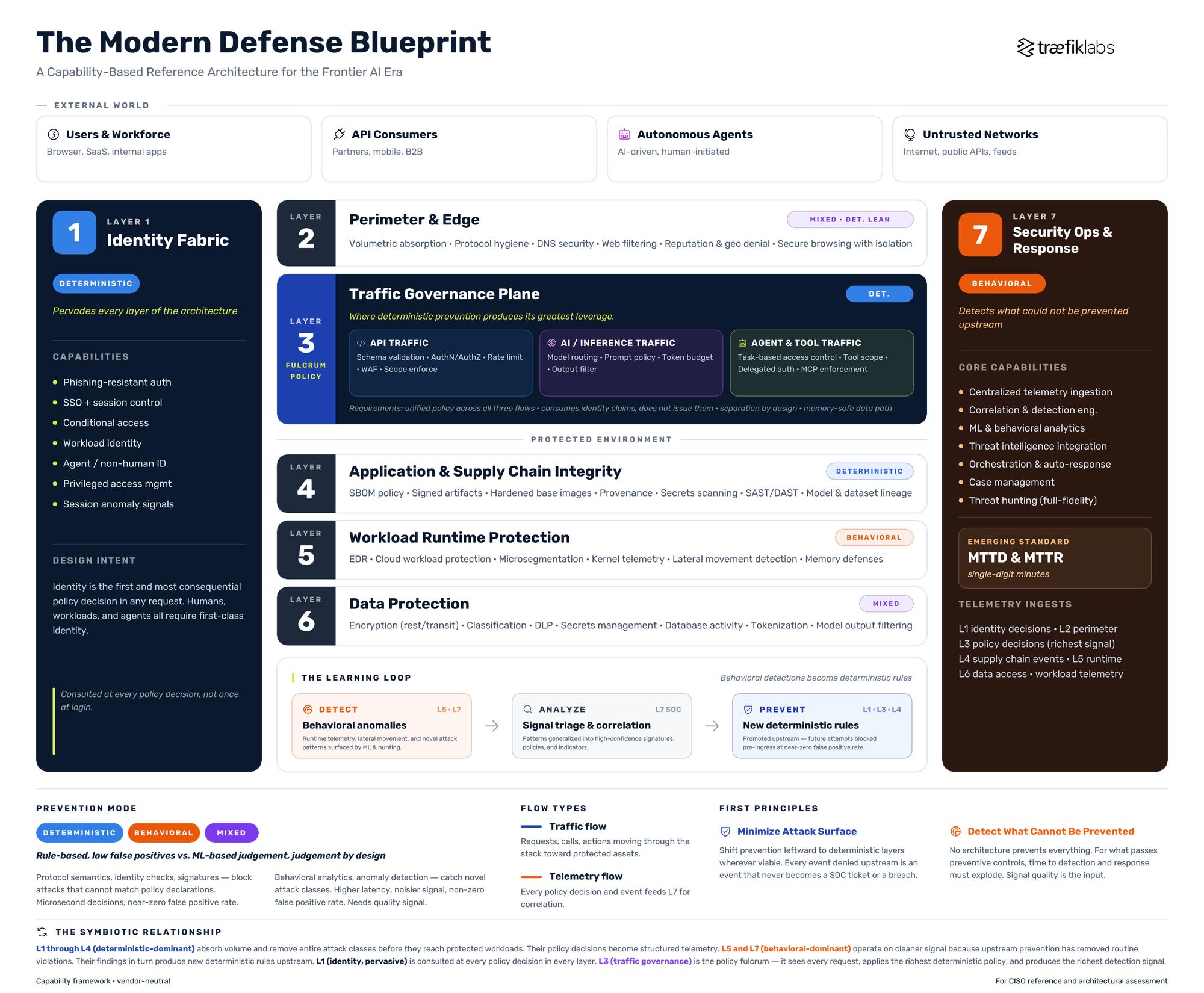

Modern enterprise defense is built in functional layers. Each layer has its own capabilities and its own prevention mode. Identity is the foundation on which every other layer depends. Traffic governance is the tier where policy does its heaviest work. Security operations sit at the top, correlating signals from everything beneath.

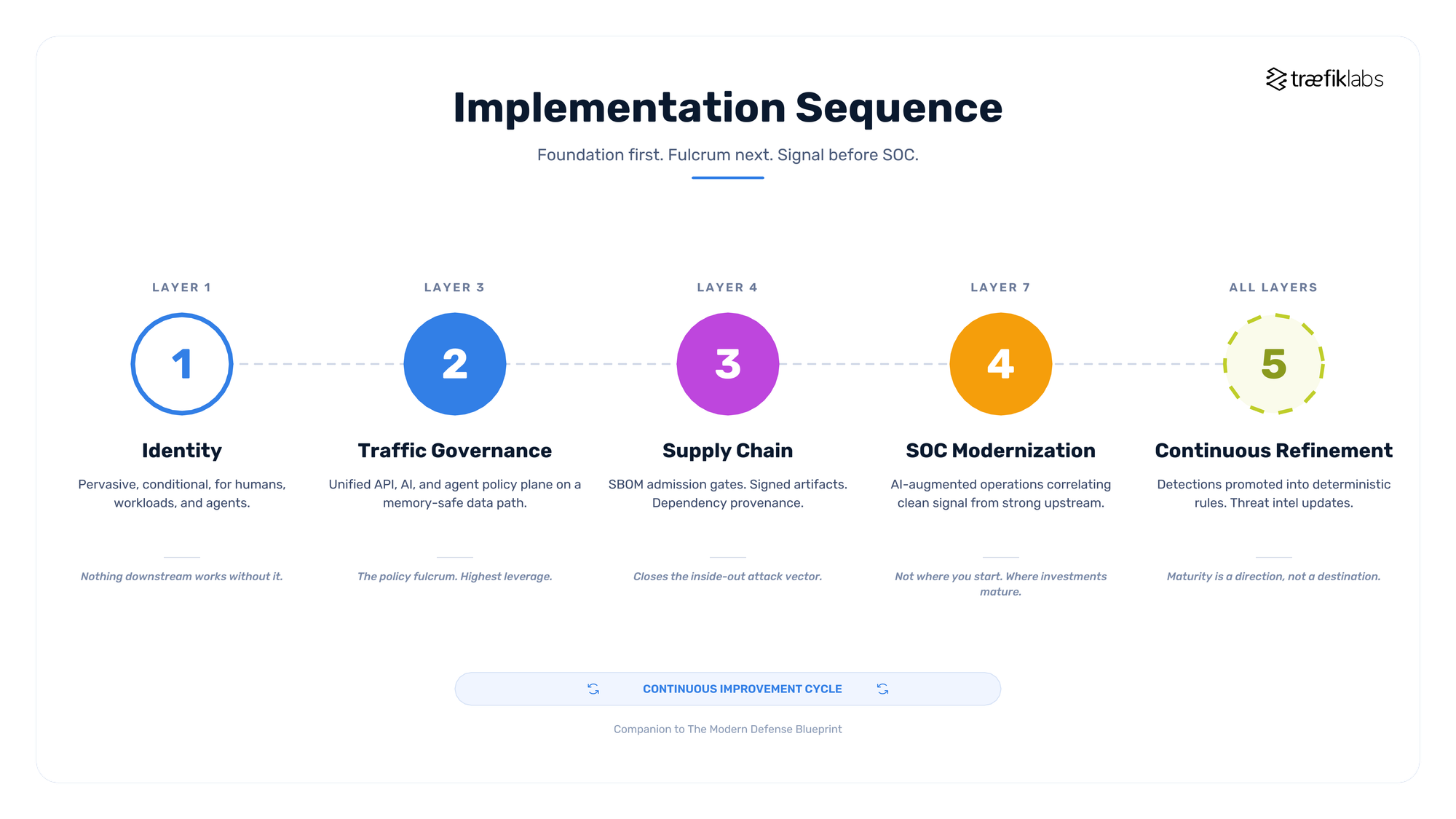

The illustration above shows this layered defense.

The reference architecture that follows is the field guide for how these layers are actually deployed. No vendors are named. This is deliberate. The goal is a capability framework that 1) survives vendor churn and acquisition cycles and 2) gives CISOs a consistent standard against which to assess any product.

Why This Blueprint Exists

The economics of enterprise defense have shifted. Frontier AI models have collapsed the cost and time required to discover vulnerabilities, chain them into exploit paths, and execute autonomous attack sequences. What used to take a skilled team weeks now runs in minutes. Defender playbooks built around human-speed response assume a luxury the attacker no longer grants.

The Capability Asymmetry is Now Live

What was recently a forward-looking argument is now a live deployment. Multiple frontier AI labs have released cyber-capable models under divergent access philosophies. One approach concentrates the most offensive-capable model in the hands of a small coalition of roughly a dozen partners, on the explicit position that broader distribution is too dangerous at present. Another approach scales defender-focused cyber models to thousands of enterprises, on the premise that defenders fall behind if strong tooling is withheld. Both positions can be correct simultaneously. Both produce the same structural consequence: offensive capability has already outpaced the rate at which defender architectures can absorb it.

The proliferation window is short. Industry estimates put the time for offensive frontier-class capabilities to diffuse from the initial holders to the broader threat landscape at six to eighteen months. Enterprises outside the initial coalitions have that long to mature their deterministic layers before the asymmetry reaches their threat model. This blueprint treats that window as the clock against which architectural decisions should be measured.

On the defender side of the same window, the largest identity and security platforms are converging on a single architectural pattern: bundling credential issuance with traffic and tool-invocation enforcement under one control plane. The convenience is real. The architectural cost is the subject of this blueprint.

On the offensive side, three structural changes follow from this shift.

- The volume of attempted exploitation will rise by orders of magnitude, not percentages.

- The window between vulnerability disclosure and active weaponization will compress toward zero.

- Autonomous attack agents will operate at machine speed across the full kill chain, which means defender architectures that rely on human review at any critical point will be outpaced.

This blueprint answers that shift. It is organized around a small set of design principles, names the capabilities required at each layer of the defense stack, and describes how the layers work symbiotically to produce an architecture that is both economically rational and operationally defensible.

Design Principles

Principle One: Minimize the Attack Surface

Every component in the stack either reduces the attack surface or does not. Components that reduce surface earn their cost. Components that merely observe the surface are valuable but secondary. The primary architectural question at every layer is: what surface does this component remove, and at what cost per event?

Surface reduction happens in three ways:

- Policy-level reduction removes traffic, calls, or actions that violate declared rules before they reach anything of value.

- Structural reduction removes entire classes of vulnerability through design choices (memory safety in the data path, least-privilege defaults, network segmentation).

- Supply chain reduction removes the untrusted code, dependencies, and artifacts that expand the blast radius of any single compromise.

A surface that does not exist cannot be attacked. This is not a slogan. It is the only defense that scales against adversaries operating at machine speed.

Principle Two: Prefer Deterministic Prevention Over Behavioral Judgment

Where an attack class can be expressed as a structured policy decision, evaluated with certainty against structured inputs, it should be prevented deterministically. Where it cannot, behavioral approaches are necessary. Conflating these two modes is the single most common architectural error. Pushing as much prevention as possible into the deterministic mode is the single most consequential design choice in a modern defense architecture.

Deterministic prevention denies or allows based on rules that can be evaluated with certainty. The inputs are structured: protocol fields, identity claims, policy declarations, cryptographic signatures, declared schemas. The decision is a function of those inputs, not a probability. A request either carries a valid token with the required scope, or it does not. A container image either matches a signed, approved hash, or it does not. An agent either has the task-level authorization to call a given tool, or it does not.
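A minimal sketch of what "a function of those inputs, not a probability" can look like for the token-and-scope case. The `Request` type and its field names are hypothetical, and token verification itself (signature, expiry) is assumed to have happened upstream and is represented here as a boolean.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Request:
    """Structured inputs to a policy decision (all field names are hypothetical)."""
    token_valid: bool             # outcome of an upstream signature and expiry check
    token_scopes: FrozenSet[str]  # scopes carried by the token
    required_scope: str           # scope the target operation demands


def decide(request: Request) -> bool:
    """Allow only if the token is valid and carries the required scope.

    The result is a pure function of the inputs: no score, no threshold.
    """
    return request.token_valid and request.required_scope in request.token_scopes


allowed = decide(Request(True, frozenset({"orders:read"}), "orders:read"))
denied = decide(Request(True, frozenset({"orders:read"}), "orders:write"))
```

The same inputs always produce the same decision, which is what keeps the false-positive rate near zero and removes any need for review.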

Deterministic prevention has three properties that make it economically dominant where it applies. The false positive rate is near zero because the decision is not a judgment. The latency is microseconds because the evaluation is trivial. It requires no human in the loop because there is nothing to review. When an attack class can be reduced to a deterministic rule, preventing it at the wire costs effectively nothing per event.

The limitation is equally clear. Deterministic prevention cannot catch what its rules do not describe. Novel attacks, behavioral anomalies, and anything requiring contextual judgment fall outside its reach.

Behavioral approaches operate on patterns, statistical models, and machine learning classifiers trained on past attacker and defender behavior. They are necessary for the attack classes that cannot be reduced to rules: lateral movement, insider abuse, novel malware behavior, anomalous data access, and sophisticated social engineering. Their false-positive rates are non-zero by design, their latency is higher, and their efficacy depends on training data quality, model freshness, and telemetry coverage.

The two modes are complementary, not competing. Deterministic prevention handles the knowable and removes an enormous volume from the behavioral layer. Behavioral detection then operates on a smaller, cleaner signal where its higher cost per event is justified. An architecture that uses behavioral detection for problems that should have been prevented deterministically wastes analyst capacity on routine violations and starves the behavioral layer of the signal quality it needs to catch what actually matters.

Principle Three: Detect at Machine Speed What Could Not Be Prevented

No architecture prevents everything. Novel attacks, insider threats, supply chain compromises, and zero-day exploits will bypass preventive controls. For these, the measure of defense is time to detection and time to first response, both of which must operate at machine speed against machine-speed adversaries.

Detection is only as good as its input signal. An architecture that has minimized the attack surface through strong prevention, and that handles the knowable deterministically, produces detection telemetry with far higher signal-to-noise than one that relies solely on detection. Prevention makes detection work.

These three principles are interdependent. Minimization reduces the space the other two principles have to cover. Deterministic prevention removes whole attack classes from the behavioral layer and feeds a cleaner signal to what remains. Machine-speed detection closes the gap where prevention cannot reach.

The blueprint that follows is organized around making all three operate at their strongest.

The Seven Layers of Modern Defense

The blueprint below describes seven functional layers. Each layer is defined by its capabilities, not by the products that provide them. Some products span multiple layers. Some layers can be partially covered by other layers. The goal is coverage of every capability, not one product per layer.

In the blueprint above, identity pervades every layer. The traffic governance plane is the policy fulcrum, unifying API, AI inference, and agent tool traffic under a single deterministic policy model. The learning loop at the base shows behavioral detections at Layers 5 and 7 being promoted into deterministic rules at Layers 1, 3, and 4. This is how the cake is actually assembled.

Layer 1: Identity and Access Fabric

Purpose. Establish and enforce who (or what) is acting, what they are authorized to do, and under what conditions. This is the foundation on which every other layer stands.

Core Capabilities.

- Strong authentication with phishing-resistant factors

- Single sign-on with centralized session control

- Conditional access based on device posture, location, time, and behavioral signals

- Workload and machine identity (TLS, SPIFFE, service accounts)

- Non-human identity governance for agents and automated systems, including discovery, lifecycle, attribution, and revocation of agent identities at scale

- Privileged access management with just-in-time elevation

- Session anomaly detection

Prevention Mode. Primarily deterministic at the policy evaluation level. Behavioral for anomaly detection (impossible travel, session hijacking indicators).

Design Intent. Identity is the first and most consequential policy decision in any request, which is why it is Layer 1. Every downstream layer consumes identity as an input; none can operate coherently without it. An architecture that treats identity as a boolean (authenticated or not) rather than a rich policy input has ceded most of its authorization power before any other layer has a chance to act. Agents and workloads require first-class identity, not an afterthought.
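To illustrate the difference between identity as a boolean and identity as a rich policy input, the sketch below evaluates a small set of claims and returns one of three outcomes. The `IdentityContext` fields, the sensitivity labels, and the specific rules are assumptions chosen for illustration, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdentityContext:
    """Hypothetical identity claims consumed by a policy decision."""
    subject: str
    kind: str                        # "human", "workload", or "agent"
    authenticated: bool
    phishing_resistant_factor: bool  # meaningful for humans only
    device_compliant: bool           # meaningful for humans only
    owner: str = ""                  # accountable owner, used for agent attribution


def access_decision(ctx: IdentityContext, sensitivity: str) -> str:
    """Return "allow", "step_up", or "deny" from structured identity claims."""
    if not ctx.authenticated:
        return "deny"
    if ctx.kind == "agent" and not ctx.owner:
        return "deny"  # an unattributed agent identity is refused outright
    if ctx.kind == "human":
        if not ctx.device_compliant:
            return "deny"
        if sensitivity == "high" and not ctx.phishing_resistant_factor:
            return "step_up"  # require a stronger factor before proceeding
    return "allow"


analyst = IdentityContext("alice", "human", True, False, True)
orphan_agent = IdentityContext("agent-7", "agent", True, False, False)
```

A boolean model would treat `analyst` and `orphan_agent` identically once authenticated. The richer model asks `analyst` for a stronger factor on high-sensitivity resources and refuses the agent with no attributed owner.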

Layer 2: Perimeter and Edge

Purpose. Reduce the volume and class of traffic that reaches internal systems. Establish the first boundary of trust in network terms.

Core Capabilities.

- Volumetric attack absorption (DDoS mitigation, connection rate limits)

- Protocol hygiene (TLS termination, cipher policy, protocol version enforcement)

- DNS security (filtering, DNSSEC, tunneling detection)

- Web filtering and category-based access control

- Geographic and reputation-based denial

- Secure enterprise browsing with isolation capabilities for untrusted content

Prevention Mode. Predominantly deterministic at the network level. Behavioral for threat intelligence overlays and anomaly detection.
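Connection rate limiting is one of the simpler deterministic controls at this layer. The sketch below uses a token bucket, a common technique that the blueprint does not itself prescribe. The class name and parameters are hypothetical, and the clock is passed in explicitly so the behavior is reproducible.

```python
class TokenBucket:
    """Token-bucket rate limiter with an explicit clock (seconds as a float)."""

    def __init__(self, rate_per_sec: float, capacity: float, now: float = 0.0):
        self.rate = rate_per_sec   # tokens added per second
        self.capacity = capacity   # maximum burst size
        self.tokens = capacity     # start with a full bucket
        self.last = now            # time of the last refill

    def allow(self, now: float) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = max(self.last, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


bucket = TokenBucket(rate_per_sec=1.0, capacity=2.0)
burst = [bucket.allow(0.0), bucket.allow(0.0), bucket.allow(0.0)]
after_one_second = bucket.allow(1.0)
```

In this example the bucket admits a burst of two requests, denies the third, and admits one more after a second has elapsed.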

Design Intent. Every request that can be denied here is a request that does not consume downstream capacity. This layer earns its cost through volume reduction more than sophistication. Perimeter is positioned as Layer 2 rather than Layer 1 because a modern zero trust architecture starts with identity; perimeter is a reinforcing boundary, not the primary one.

Layer 3: Traffic Governance Plane

Purpose. Enforce fine-grained policy on every request, call, and action that traverses the environment. This is the layer at which deterministic prevention produces its greatest leverage.

Core Capabilities.

- API gateway functions: schema validation, authentication enforcement, scope-based authorization, rate limiting, quota enforcement, request/response transformation

- Web application firewall with both signature and positive-model rules

- AI and inference governance: model routing, prompt policy, token budgets, output filtering, inference-layer identity

- Agent and tool invocation governance: task-based access control, tool-level authorization, transaction scoping, delegated authority flows, and Model Context Protocol enforcement as the emerging standard for agent-to-tool traffic

- Observable policy decisions with structured telemetry for every evaluated request

Prevention Mode. Overwhelmingly deterministic. This is the layer at which the deterministic prevention thesis is strongest, because the inputs (protocol semantics, identity claims, declared policy) are all structured, and the decisions are binary.
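As one illustration of task-based access control for agent tool invocation, the sketch below maps each task to the tools it may call and denies everything else by default. The task names, tool names, and the `TASK_TOOL_GRANTS` table are hypothetical. A real deployment would load grants from declared policy rather than a literal.

```python
from typing import Dict, FrozenSet

# Hypothetical grants: each task may invoke only the tools listed for it.
TASK_TOOL_GRANTS: Dict[str, FrozenSet[str]] = {
    "summarize_ticket": frozenset({"ticket.read"}),
    "refund_order": frozenset({"order.read", "payment.refund"}),
}


def authorize_tool_call(task: str, tool: str) -> bool:
    """Default deny: an unknown task, or a tool outside the task's grant, is refused."""
    return tool in TASK_TOOL_GRANTS.get(task, frozenset())


summarizer_reads_ticket = authorize_tool_call("summarize_ticket", "ticket.read")
summarizer_issues_refund = authorize_tool_call("summarize_ticket", "payment.refund")
```

The decision depends only on the declared task and the requested tool, so an agent that has been manipulated into attempting a refund while summarizing a ticket is stopped regardless of how the request was phrased.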

Design Intent. Three properties make this layer structurally irreplaceable. It sits between every external caller and every protected workload, which makes it the enforcement point with the broadest reach. It operates on structured protocol data, enabling deterministic prevention at very low false-positive rates. It produces the richest per-request telemetry in the stack, which means its observability output is what enables effective downstream detection.

A critical architectural requirement at this layer is heterogeneity. Modern environments carry three distinct classes of traffic: application API calls, AI inference requests, and agent tool invocations. Each has its own policy model, identity semantics, and abuse patterns. Fragmenting these into three separate governance systems produces operational drift, policy inconsistency, and gaps at the boundaries. A unified policy plane across all three is a structural requirement, not a preference.

The same heterogeneity logic applies to identity. The traffic governance plane consumes identity claims from multiple providers across the modern enterprise: workforce identity systems, customer identity systems, machine and workload identity, and increasingly agentic identity issued through standards-based protocols. A traffic plane bound to a single identity provider is a fragmentation point, not a unification point. The enforcement layer must be neutral across identity sources by construction, because the issuance of identity and the enforcement of what that identity is permitted to do are different architectural responsibilities operating on different telemetry, at different cadences, and with different failure modes. Collapsing those responsibilities into a single control plane is consolidation, not coherence.

An additional non-negotiable is memory safety in the data path. Any component that sits in every request path and is written in a memory-unsafe language is a perpetual patch-cadence problem. The vulnerability deluge anticipated by frontier AI research has now been empirically demonstrated. Recent frontier model disclosures surfaced thousands of previously unknown zero-day vulnerabilities across foundational software, including flaws that had survived decades of human review. Memory-unsafe data path components are not a feature to be patched on cadence. They are a structural liability on a short clock.

Layer 4: Application and Supply Chain Integrity

Purpose. Ensure that the code, dependencies, and artifacts running in the environment are trusted by construction, not by hope.

Core Capabilities.

- Secure software development lifecycle with enforced gates

- Software bill of materials generation and policy-based admission control

- Signed artifact enforcement at deployment

- Hardened, minimal base images with continuous vulnerability remediation

- Dependency provenance tracking

- Secrets scanning in source, builds, and images

- Static and dynamic application security testing integrated into CI/CD

- Model and dataset provenance for AI workloads

Prevention Mode. Deterministic at the policy gate level (signed or not, on SBOM allowlist or not, scan-clean or not). Behavioral in the static analysis phase.

Design Intent. The inside-out attack pattern (a compromise propagated through the supply chain, ending inside the perimeter with full trust) is the most consequential shift in the threat model over the last two years. This layer exists to make that pattern structurally harder. Every artifact that enters the environment should carry cryptographic evidence of its provenance, and admission should be contingent on policy satisfaction, not reputation.

The compounding pressure on this layer comes from downstream flow. When frontier-scale discovery surfaces thousands of vulnerabilities in foundational operating systems, browsers, and widely used open-source libraries, those findings propagate into every enterprise software bill of materials as new critical advisories. A supply chain posture built on manual review and best-effort patching cannot process that volume. The admission gate must be deterministic, and the dependency set must be actively minimized.
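A deterministic admission gate of the kind described above can be sketched as a conjunction of three checks: approved digest and verified signature, dependencies on an allowlist, and a clean scan. The `Artifact` type and its fields are hypothetical, and signature verification is assumed to be performed by an upstream tool and passed in as a boolean.

```python
import hashlib
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class Artifact:
    """Hypothetical view of an artifact at the admission gate."""
    content: bytes
    signature_verified: bool      # result of an upstream signature check (assumed)
    dependencies: FrozenSet[str]  # names taken from the artifact's SBOM
    critical_findings: int        # count of critical scanner findings


def admit(artifact: Artifact,
          approved_digests: FrozenSet[str],
          allowed_dependencies: FrozenSet[str]) -> Tuple[bool, List[str]]:
    """Admit only if every check passes; return the reasons for any denial."""
    reasons: List[str] = []
    digest = hashlib.sha256(artifact.content).hexdigest()
    if digest not in approved_digests:
        reasons.append("digest not approved")
    if not artifact.signature_verified:
        reasons.append("signature not verified")
    unknown = artifact.dependencies - allowed_dependencies
    if unknown:
        reasons.append("dependencies not on allowlist: " + ", ".join(sorted(unknown)))
    if artifact.critical_findings > 0:
        reasons.append("scan not clean")
    return (not reasons, reasons)


approved = frozenset({hashlib.sha256(b"app-v1").hexdigest()})
good = Artifact(b"app-v1", True, frozenset({"libfoo"}), 0)
bad = Artifact(b"app-v1", True, frozenset({"libfoo", "libbar"}), 0)
good_result = admit(good, approved, frozenset({"libfoo"}))
bad_result = admit(bad, approved, frozenset({"libfoo"}))
```

Returning the denial reasons alongside the decision keeps the gate auditable and gives downstream telemetry a structured record of why an artifact was refused.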

Layer 5: Workload Runtime Protection

Purpose. Detect and contain attacks that have bypassed preventive controls and are operating within the environment.

Core Capabilities.

- Endpoint detection and response with behavioral analytics

- Cloud workload protection (container, VM, serverless)

- Runtime network segmentation and microsegmentation

- Kernel-level telemetry (eBPF or equivalent) for syscall and process behavior

- Lateral movement detection

- Memory-level attack detection (exploitation techniques, injection, escalation)

- Container and Kubernetes-specific runtime controls

Prevention Mode. Predominantly behavioral. Some deterministic capability through policy (Pod Security, network policies, seccomp, capability dropping).

Design Intent. This is where behavioral prevention and detection do their heaviest work. The inputs are unstructured (process trees, syscall patterns, memory access, network flows). Deterministic rules exist, but cover a smaller fraction of the attack space than at Layer 3. Accepting this means funding this layer for what it does well (behavioral judgment) and not asking it to do the work that should have happened deterministically upstream.

Layer 6: Data Protection

Purpose. Protect data at rest, in transit, and in use, and prevent its unauthorized egress.

Core Capabilities.

- Encryption at rest with managed key lifecycle

- Encryption in transit with strong protocol policy

- Data classification and labeling

- Data loss prevention with channel coverage (email, web, endpoint, API)

- Secrets management with rotation and ephemeral issuance

- Database activity monitoring

- Tokenization and format-preserving encryption for sensitive fields

- Model output filtering for sensitive data exposure in AI contexts

Prevention Mode. Deterministic for encryption enforcement, access policy, and classification-based blocking. Behavioral for anomalous access patterns and egress detection.
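Classification-based blocking can be expressed as a simple comparison between a data label and the highest label a channel is permitted to carry. The labels, channels, and ceilings below are hypothetical values chosen for illustration.

```python
from typing import Dict

# Hypothetical classification labels, ordered from least to most sensitive.
CLASSIFICATION_RANK: Dict[str, int] = {
    "public": 0, "internal": 1, "confidential": 2, "restricted": 3,
}

# Hypothetical ceiling: the most sensitive label each egress channel may carry.
CHANNEL_CEILING: Dict[str, str] = {
    "web": "public", "email": "internal", "api": "confidential",
}


def egress_allowed(label: str, channel: str) -> bool:
    """Default deny: unknown labels or channels are blocked."""
    ceiling = CHANNEL_CEILING.get(channel)
    if ceiling is None or label not in CLASSIFICATION_RANK:
        return False
    return CLASSIFICATION_RANK[label] <= CLASSIFICATION_RANK[ceiling]


internal_by_email = egress_allowed("internal", "email")
confidential_by_email = egress_allowed("confidential", "email")
```

This covers only the deterministic half of the layer. Whether an otherwise permitted transfer is anomalous in volume or timing remains a behavioral question.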

Design Intent. Data is the ultimate objective of most attacks. This layer makes data valuable only where it should be valuable and visible only to those who should see it. Irreversibility is the key concept: a leaked key, an exfiltrated dataset, and an exposed model weight cannot be recovered. Preventive controls at this layer are therefore disproportionately valuable.

Layer 7: Security Operations and Response

Purpose. Correlate signals across layers, detect what individual layers cannot see in isolation, and respond at machine speed.

Core Capabilities.

- Centralized telemetry ingestion from every layer

- Correlation and detection engineering

- Machine learning and behavioral analytics across combined signals

- Threat intelligence integration

- Orchestration and automated response (SOAR capabilities)

- Case management and investigation tooling

- Metrics instrumentation for time to detection and time to response

- Threat hunting capabilities with full-fidelity data access

Prevention Mode. Primarily detection. Behavioral by nature of the problem space. Deterministic correlation rules exist, but are the floor, not the ceiling.

Design Intent. This layer succeeds or fails on signal quality. An architecture that feeds it noise produces analyst burnout and false confidence. An architecture that feeds it structured, policy-contextual telemetry from strong preventive layers produces detection at the speed the threat requires. AI-augmented security operations are no longer an aspirational capability. Defender-focused cyber models and agent governance platforms are now actively deployed at enterprise scale across major vendors.

That shift raises the ceiling on what this layer can do, but does not raise the floor. A behavioral analytics engine, whether classical or AI-driven, delivers value proportional to the quality of the signal it consumes. Time to detection and time to first response in single-digit minutes are the emerging standard, and that standard is only achievable when the upstream layers have done their job.
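Instrumenting time to detection and time to first response requires little more than three timestamps per incident. The sketch below is one way to compute the two means in minutes. The `Incident` fields and the sample timestamps are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import List


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident record with the three timestamps needed."""
    started_at: datetime         # earliest known attacker activity
    detected_at: datetime        # first alert raised
    first_response_at: datetime  # first containment action taken


def mean_minutes_to_detect(incidents: List[Incident]) -> float:
    return mean((i.detected_at - i.started_at).total_seconds() / 60 for i in incidents)


def mean_minutes_to_respond(incidents: List[Incident]) -> float:
    return mean((i.first_response_at - i.detected_at).total_seconds() / 60 for i in incidents)


t0 = datetime(2024, 1, 1, 12, 0)  # illustrative timestamps, not real data
sample = [
    Incident(t0, t0 + timedelta(minutes=4), t0 + timedelta(minutes=6)),
    Incident(t0, t0 + timedelta(minutes=6), t0 + timedelta(minutes=10)),
]
mttd = mean_minutes_to_detect(sample)
mttr = mean_minutes_to_respond(sample)
```

For the two sample incidents, mean time to detection is 5 minutes and mean time to first response is 3 minutes, both inside the single-digit standard described above.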

How the Layers Work Symbiotically

The seven layers are not independent. The value of the architecture lies in how it reinforces itself.

Prevention Feeds Detection

Every policy decision at Layers 1, 2, and 3 is also a telemetry event. A denied request generates a log line that enriches the SOC's understanding of attacker behavior. A granted request carries a structured context (identity, scope, policy decision) that makes Layer 7 correlation dramatically more effective. The prevention layers are not parallel to the detection layer; they are upstream tributaries feeding it.
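One way to make every policy decision double as a telemetry event is to emit a structured record at the enforcement point. The sketch below serializes a decision as a JSON line. The field names and the `policy_decision_event` helper are assumptions, not a standard schema.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def policy_decision_event(subject: str, scope: str, resource: str,
                          decision: str, rule_id: str,
                          at: Optional[datetime] = None) -> str:
    """Serialize one policy decision as a JSON line (hypothetical schema)."""
    if decision not in ("allow", "deny"):
        raise ValueError("decision must be 'allow' or 'deny'")
    timestamp = (at or datetime.now(timezone.utc)).isoformat()
    return json.dumps({
        "timestamp": timestamp,
        "subject": subject,    # identity that made the request
        "scope": scope,        # scope presented with the request
        "resource": resource,  # what the request targeted
        "decision": decision,
        "rule_id": rule_id,    # rule that produced the decision
    }, sort_keys=True)


line = policy_decision_event(
    "agent-7", "orders:read", "/orders/42", "deny", "rule-scope-mismatch",
    at=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
event = json.loads(line)
```

Because allowed and denied requests share one schema, Layer 7 can correlate on `subject` and `rule_id` without parsing free-text logs.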

Detection Informs Prevention

Behavioral detection at Layers 5 and 7 identifies new attack patterns that can then be encoded as deterministic rules at Layers 1 through 3. Every novel attack caught behaviorally should prompt a question: can this be prevented deterministically from now on? The architecture learns.
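The promotion step can be sketched as follows: a confirmed behavioral finding that reduces to an exact match on a structured field becomes a deny rule evaluated upstream. The `Finding` and `DenyRules` types are hypothetical, and real promotion would pass through review and testing before enforcement.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass(frozen=True)
class Finding:
    """Hypothetical behavioral finding reduced to one structured indicator."""
    field: str                 # request attribute the indicator applies to
    value: str                 # exact value observed in the attack
    confirmed_malicious: bool  # set after analyst or automated confirmation


class DenyRules:
    """Exact-match deny rules evaluated at an upstream enforcement point."""

    def __init__(self) -> None:
        self._rules: Set[Tuple[str, str]] = set()

    def promote(self, finding: Finding) -> bool:
        """Turn a confirmed finding into a rule; ignore unconfirmed ones."""
        if not finding.confirmed_malicious:
            return False
        self._rules.add((finding.field, finding.value))
        return True

    def blocks(self, request: Dict[str, str]) -> bool:
        return any(request.get(field) == value for field, value in self._rules)


rules = DenyRules()
request = {"client_cert_fingerprint": "ab:cd:ef", "path": "/export"}
blocked_before = rules.blocks(request)
rules.promote(Finding("client_cert_fingerprint", "ab:cd:ef", True))
blocked_after = rules.blocks(request)
```

The same request that previously needed behavioral judgment is now denied by a rule, which removes it from the behavioral layer's workload on every subsequent attempt.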

Identity Pervades Every Layer

The identity fabric at Layer 1 is not consulted once at login. It is the input to policy decisions at the traffic layer, the basis for workload permissions at the runtime layer, the access gate for data at Layer 6, and the pivot for correlation at Layer 7. An identity architecture that cannot service all of these consistently produces gaps at the seams. Identity is Layer 1 precisely because nothing downstream works without it.

Supply Chain Integrity Underwrites Runtime Assumptions

The behavioral detection at Layer 5 is calibrated against an assumed baseline of legitimate workload behavior. When supply chain integrity at Layer 4 is weak, the baseline is contaminated, and Layer 5 loses its ability to distinguish benign from malicious. Strong supply chain controls are not a parallel investment to runtime detection; they are its precondition.

The Traffic Governance Plane is the Policy Fulcrum

More policy decisions are made at this layer than anywhere else in the stack, because every request, call, and invocation passes through it. The quality of policy at this layer compounds. A well-governed traffic plane removes enormous volume from downstream layers, delivers structured context to the SOC, and handles entire attack classes deterministically. A poorly governed traffic plane pushes work downstream that downstream layers are not designed to handle efficiently.

Defender AI is Itself a Governed Workload

The AI-augmented SOC, the AI-driven AppSec platform, and any automated remediation agent introduced into the environment are not outside this architecture. They are subjects of it. A defender AI agent is a non-human identity at Layer 1, a traffic-generating client at Layer 3, and a software artifact with its own dependency set at Layer 4. Its training data provenance, invocation patterns, and authorized actions all produce telemetry at Layer 7. Organizations deploying defender AI without applying the same architectural controls to those agents inherit a new class of privileged insider risk. The blueprint applies to itself.

The Economic Case for This Blueprint

The case for this architecture rests on four economic realities.

1. Cost Per Event is Not Linear Across Layers

A policy denial at the traffic layer costs effectively nothing. A SOC alert costs analyst time. A contained incident costs an incident response engagement. An uncontained breach costs the full regulatory, reputational, and operational burden. Shifting events leftward on this cost curve is the single most impactful thing an architecture can do.
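The effect of shifting events leftward can be made concrete with a small worked calculation. Every number below is a hypothetical placeholder chosen only to show the shape of the curve. None of them is measured data.

```python
from typing import Dict

# Hypothetical unit costs per event, in arbitrary currency units.
UNIT_COST: Dict[str, float] = {
    "policy_denial": 0.0001,         # evaluated at the traffic layer
    "soc_alert": 25.0,               # analyst time to triage one alert
    "contained_incident": 50_000.0,  # one incident response engagement
}


def total_cost(event_counts: Dict[str, int]) -> float:
    """Sum of count times unit cost across the stages where events are handled."""
    return sum(UNIT_COST[stage] * count for stage, count in event_counts.items())


# Same one million events, handled at different points in the stack.
before = {"policy_denial": 900_000, "soc_alert": 100_000, "contained_incident": 10}
after = {"policy_denial": 990_000, "soc_alert": 10_000, "contained_incident": 10}

cost_before = total_cost(before)
cost_after = total_cost(after)
```

Under these assumed figures, moving 90,000 events from SOC triage to policy denial cuts total cost by roughly three quarters, even though the number of events and the number of contained incidents are unchanged.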

2. Irreversibility has Infinite Cost

Certain attack outcomes cannot be undone: exfiltrated data, leaked keys, destructive agent actions, and poisoned model weights. For these outcomes, prevention is not a cost optimization; it is the only available defense. Architectures that under-fund prevention for outcomes in this category are accepting unbounded downside.

3. Detection has a Latency Floor

Human-in-the-loop detection cannot be compressed below certain thresholds. Automated detection can be faster, but it still operates in non-zero time. The current generation of defender-focused AI tooling closes part of that gap, but the floor does not disappear.

Adversaries with access to frontier discovery models operate below that floor by construction, because their decision cycle is set by inference cost rather than by analyst review. Deterministic prevention operates in microseconds. For the attack classes that can be expressed deterministically, prevention is the only mode that keeps pace with machine-speed adversaries, regardless of how capable the defender's own AI layer becomes.

4. Signal Quality Compounds

Strong upstream prevention produces a cleaner downstream signal. A clean signal enables faster and more accurate detection. Accurate detection justifies higher automation in response. Each layer's effectiveness multiplies through the one downstream. Weak upstream controls degrade every layer that follows.

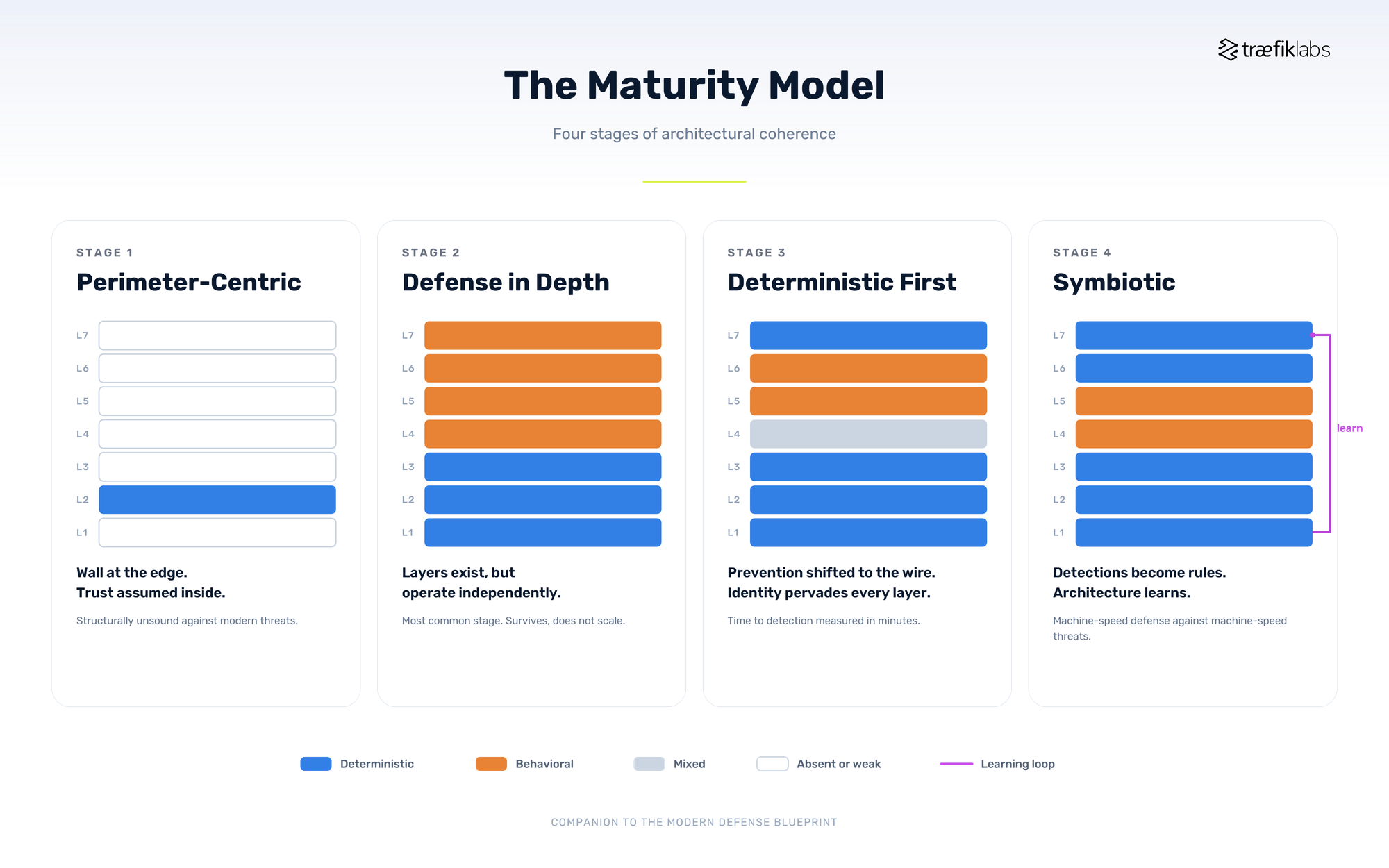

A Maturity Model for Self-Assessment

In this maturity model, there are four stages of architectural coherence. Stage 1 is a single wall with trust assumed inside. Stage 2 layers multiple defenses that operate independently. Stage 3 shifts prevention deterministically to the wire and makes identity pervasive. Stage 4 closes the loop, with behavioral detections becoming new deterministic rules on a short cadence. The goal is not Stage 4 everywhere. The goal is knowing where the next investment produces the greatest marginal improvement.

A CISO using this blueprint can assess the organization's current state against the following progression.

Stage 1: Perimeter-Centric

Defense is concentrated at the network edge. Identity is coarse. Inside the perimeter, trust is broadly assumed. Detection is signature-based. This stage is structurally unsound against modern threat models and is increasingly rare in mature organizations.

Stage 2: Defense in Depth

Multiple layers exist but operate independently. Identity is federated but not pervasive. Detection is present but overwhelmed by volume. This is the most common stage. It survives but does not scale against frontier AI-enabled threats.

Stage 3: Deterministic First

Prevention has been deliberately shifted leftward into layers where it can operate deterministically. The traffic governance plane enforces fine-grained policy. Identity is pervasive across humans, workloads, and agents. Behavioral detection operates on a cleaner signal because routine violations are prevented upstream. Time to detection is measured in minutes.

Stage 4: Symbiotic

The layers operate as a single system. Telemetry flows consistently. Detection findings become new preventive rules on a short cadence. Supply chain integrity underwrites runtime assumptions. Identity governs every policy decision. Response is largely automated for the volume of routine events, freeing analysts for the novel and complex. Time to detection and response is measured in single-digit minutes. The organization can defend against machine-speed adversaries because its own architecture operates at machine speed.

Stage 4 is achievable. It requires discipline in capability selection, commitment to deterministic prevention wherever viable, and architectural coherence across layers. Most organizations pursuing this trajectory find that the constraint is not technology but integration quality, which is why capability coherence matters more than any individual product choice.

Implementation Sequence

In the implementation sequence above, there are five investments in priority order. Identity is the foundation. Traffic governance is the fulcrum where deterministic prevention produces its greatest leverage. Supply chain integrity closes the inside-out attack path. SOC modernization comes fourth, not first, because an AI-augmented SOC built on weak upstream layers is a fast analyst drowning in the same noise. Step five is a loop, not a terminus.

For an organization assessing where to invest next, the recommended sequence is:

First, identity (Layer 1). No downstream layer works well if identity is incoherent. Pervasive, strong, conditional identity for humans, workloads, and agents is the prerequisite for everything else. The numbering of this blueprint places identity at Layer 1 for precisely this reason: it is the foundation on which all other investment depends.

Second, the traffic governance plane (Layer 3). This is where the largest volume of deterministic prevention happens and where the richest detection telemetry is produced. Unifying API, AI, and agent traffic governance under a single policy model is a strategic investment with compounding returns. Perimeter (Layer 2) is typically mature in existing organizations, which is why new investment is usually better directed at Layer 3.

Third, supply chain integrity (Layer 4). The inside-out attack pattern is the fastest-growing threat class. Investment here produces disproportionate risk reduction because it closes entire attack paths structurally.

Fourth, SOC modernization (Layer 7). An AI-driven SOC operating on the cleaner signal produced by the first three investments reaches its potential. This is a live purchasing decision for most CISOs today, because defender AI platforms are being actively marketed to SOC teams right now. The sequence matters. An AI-augmented SOC built on top of weak identity, a fragmented traffic governance plane, and a porous supply chain produces a very fast analyst drowning in the same noise. Upstream first, then SOC, in that order.

Fifth, continuous refinement of all layers. The architecture is never complete. New detection findings become new preventive rules. New threat intelligence updates every layer. Maturity is a direction, not a destination.

Closing Principle

The shift to frontier AI threat models rewards architectural coherence over point-product sophistication. An organization with a coherent seven-layer architecture operating deterministically where possible, and behaviorally where necessary, with clean signal flow between layers, will outperform one with superior individual products and weaker integration every time.

Coherence is not the same as consolidation. The layers are deliberately distinct because they perform different functions on different inputs at different cadences, and collapsing them into a single product surface erodes the structural defense in depth that makes the architecture work. The system that issues credentials should not be the only system enforcing what those credentials can do. The system that hosts a workload should not be the only system observing it. Architectural coherence is achieved through clean interfaces between distinct layers, not through bundling those layers into a single control plane.

The measure of a modern defense is not how many tools it contains. It is how small its attack surface has become, how quickly it detects what did get through, and how little human intervention either of those requires. That is the standard the frontier AI era imposes on defenders. This blueprint is how to meet it.