Differentiating the Control, Data, and Worker Planes in Kubernetes

Understanding the differences between the control plane and the data plane, and how they interact, is important for success in cloud networking. These concepts evolved over multiple decades in response to technological advancements and the needs of network administrators to scale applications and streamline deployments.

This article explores how the control plane and the data plane operate in software-defined networking, and more specifically how they (and the worker plane) fit into Kubernetes architectures.

What is Software-Defined Networking (SDN)?

Any single component of a system has limits to its size and reach, and if it wishes to grow beyond those limits, it needs a way to interact with other components, near and far. For computers, this happens through networking. Whether you’re sharing a printer between computers in your house or connecting a data center to the Internet, it’s through networking that a system grows and delivers additional value to its users.

Networks of any size come with a host of challenges. Some early local networks wired computers together in a ring and passed packets from one machine to the next in series. Ethernet, which began on a shared cable, later adopted a hub-and-spoke layout that improved performance. Hubs, which broadcast every packet to every port, then gave way to switches, which forward each packet only toward its destination. As the technology evolved, the speed of network interfaces evolved with it. It’s not uncommon for today’s home computers to have gigabit interfaces and for large servers to have interfaces capable of multi-gigabit speeds.

In the early 2000s, advances in network virtualization and programmability led to a new approach to networking architecture called software-defined networking (SDN). SDN abstracts a network device’s functions into separate layers or “planes” (for example, the control plane and the data plane) that you can imagine stacked on top of one another.

Each plane carries a portion of the responsibility for routing and delivering packets that pass through a device and, ultimately, helps traffic pass through the network in an efficient and orderly fashion.

Solutions like Open vSwitch and Quagga allow an operator to run networking “hardware” entirely in software, either in a hypervisor like VMware or Proxmox, or in commodity, off-the-shelf hardware.

With vendor-specific solutions from companies like Cisco and Juniper, SDN moves the planes into software and lets a network administrator configure fleets of machines through a UI instead of visiting each switch or router to make changes.

Software-defined networking can also decouple a device’s capabilities from its physical hardware, making it possible to perform upgrades faster and at a lower cost.

The control plane vs. the data plane

The control plane sits above the data plane and makes decisions for the system. It defines the rules and policies for how a network handles packets. Which packets travel across the network, and which are rejected? Where do they travel to? Which path do they take? The control plane answers these questions.

It has other responsibilities as well. It creates the routing tables, the internal maps in which routers store and look up network paths. It also builds a map of the network topology, which is the arrangement of the elements of a communication network.

The control plane acts as the brain of a network, solving problems as they arise.

The data plane (sometimes called the forwarding plane), in contrast, implements the decisions made by the control plane. It handles the packets themselves.

If the control plane is the brain, the data plane is both the memory and the hands of a network. It implements the decisions of the control plane and stores data about the network.
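
The split described above can be sketched in a few lines of Python. This is a toy model, not how any particular router or SDN controller is implemented: the `ControlPlane` and `DataPlane` classes, the route prefixes, and the next-hop names are all hypothetical. The control plane decides the routes and publishes a forwarding table; the data plane only looks up each destination in that table, choosing the most specific matching prefix.

```python
import ipaddress


class ControlPlane:
    """Decides where traffic should go and publishes a forwarding table."""

    def __init__(self):
        self.routes = {}  # prefix string -> next hop name (hypothetical)

    def add_route(self, prefix, next_hop):
        self.routes[prefix] = next_hop

    def build_forwarding_table(self):
        # Parse each prefix once and sort most-specific first, so the data
        # plane can stop at the first match (longest-prefix match).
        table = [(ipaddress.ip_network(p), hop) for p, hop in self.routes.items()]
        table.sort(key=lambda entry: entry[0].prefixlen, reverse=True)
        return table


class DataPlane:
    """Forwards packets using only the table it was handed."""

    def __init__(self, forwarding_table):
        self.table = forwarding_table

    def forward(self, dst_ip):
        addr = ipaddress.ip_address(dst_ip)
        for network, next_hop in self.table:
            if addr in network:
                return next_hop
        return None  # no matching route: the packet is dropped


control = ControlPlane()
control.add_route("10.0.0.0/8", "router-a")
control.add_route("10.1.0.0/16", "router-b")
control.add_route("0.0.0.0/0", "gateway")

data = DataPlane(control.build_forwarding_table())
print(data.forward("10.1.2.3"))  # most specific match is 10.1.0.0/16
print(data.forward("10.9.9.9"))  # only 10.0.0.0/8 and the default match
print(data.forward("8.8.8.8"))   # only the default route matches
```

Note that `DataPlane` never modifies the table. Changing how traffic flows means asking the control plane for a new one, which is the separation SDN relies on.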

Software-defined networking in Kubernetes

Kubernetes relies on networking constructs to create a distributed system, in which multiple computers contribute to a pool of resources (CPU, RAM, and storage) where applications run. Kubernetes also uses the concept of planes, but it assigns them different roles than the networking model does.

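This article distinguishes three planes in Kubernetes: the control plane, the data plane, and the worker plane. (The upstream Kubernetes documentation lists etcd among the control plane components; here the datastore is treated as a plane of its own.)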

The Kubernetes control plane

The Kubernetes control plane consists of the API server, the scheduler, the controller manager, and, for some clusters, the cloud controller manager. These components collectively act as the brain of a Kubernetes cluster, making decisions for everything that happens within the cluster, networking included. They process API requests, convert those requests into instructions that change the state of the cluster, schedule workloads, and communicate with cloud providers to create external resources.
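
To make the "schedules workloads" step concrete, here is a deliberately simplified sketch of a filter-then-score placement decision. It is not the kube-scheduler's actual code or API: the `Node` and `PodRequest` types, the `schedule` function, and the scoring rule (prefer the node with the most CPU left over) are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class PodRequest:
    name: str
    cpu_millicores: int
    memory_mib: int


def schedule(pod, nodes):
    """Return the name of the chosen node, or None if no node fits."""
    # Filter: keep only nodes with enough free CPU and memory.
    feasible = [
        n for n in nodes
        if n.free_cpu_millicores >= pod.cpu_millicores
        and n.free_memory_mib >= pod.memory_mib
    ]
    if not feasible:
        return None  # in a real cluster the pod would stay pending

    # Score: prefer the node that keeps the most CPU free after placement.
    best = max(feasible, key=lambda n: n.free_cpu_millicores - pod.cpu_millicores)

    # Bind: reserve the resources so later decisions see the new state.
    best.free_cpu_millicores -= pod.cpu_millicores
    best.free_memory_mib -= pod.memory_mib
    return best.name


nodes = [Node("worker-1", 500, 1024), Node("worker-2", 2000, 4096)]
print(schedule(PodRequest("web", 1000, 512), nodes))   # only worker-2 has enough CPU
print(schedule(PodRequest("huge", 8000, 512), nodes))  # nothing fits, so None
```

The sketch only returns a decision and updates its own bookkeeping. Starting the container is left to the node that receives the assignment.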

The Kubernetes data plane

The Kubernetes data plane is the datastore for a Kubernetes cluster. A traditional Kubernetes cluster uses etcd for its data plane, but distributions like K3s can use etcd, SQLite, or an RDBMS like MySQL, MariaDB, or PostgreSQL.

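The control plane and the data plane can be colocated, or they can be separate. For fault tolerance, a cluster should have a minimum of two control plane nodes and three, five, or seven data plane nodes. The odd counts matter because a quorum-based datastore such as etcd stays available only while a majority of its members are reachable.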
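
The arithmetic behind the three, five, or seven recommendation is short enough to check directly. The sketch below assumes a majority-quorum datastore such as etcd; the function names `quorum` and `tolerated_failures` are made up for this example.

```python
def quorum(members):
    """Smallest number of members that forms a majority."""
    return members // 2 + 1


def tolerated_failures(members):
    """How many members can fail while a majority remains."""
    return members - quorum(members)


for members in range(1, 8):
    print(
        f"{members} members: quorum {quorum(members)}, "
        f"tolerates {tolerated_failures(members)} failure(s)"
    )
```

Running it shows that growing from three members to four raises the quorum from two to three without raising the number of tolerated failures, so the extra node adds cost but no resilience.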

The Kubernetes worker plane

The worker plane is where Kubernetes workloads run. Worker nodes run the kubelet, kube-proxy (or a similar component), and a container runtime such as containerd, CRI-O, or Docker Engine (which, since the removal of dockershim in Kubernetes 1.24, requires the cri-dockerd adapter). These components receive instructions from the control plane, create or destroy containers, and manage the network rules that enable communication between containers in the cluster and requests from outside of the cluster.
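
As a rough illustration of the "network rules" mentioned above, the following toy model keeps a table that maps a service's virtual address to the pods currently behind it and picks one backend per request. It is not kube-proxy's implementation, which programs kernel-level rules rather than handling requests in Python. The `ServiceProxy` class, its methods, and the addresses are hypothetical.

```python
import random


class ServiceProxy:
    """Toy stand-in for the per-node rules that map services to pods."""

    def __init__(self, rng=None):
        self.rules = {}  # (virtual_ip, port) -> list of "pod_ip:port" backends
        self.rng = rng or random.Random()

    def sync(self, service_addr, backends):
        # Called whenever the control plane reports a change in the set
        # of pods behind a service. An empty list removes the rule.
        if backends:
            self.rules[service_addr] = list(backends)
        else:
            self.rules.pop(service_addr, None)

    def route(self, service_addr):
        # Pick one backend at random, or None if the service has no pods.
        backends = self.rules.get(service_addr)
        if not backends:
            return None
        return self.rng.choice(backends)


proxy = ServiceProxy()
proxy.sync(("10.96.0.10", 80), ["10.244.1.5:8080", "10.244.2.7:8080"])
print(proxy.route(("10.96.0.10", 80)))  # one of the two pod addresses

proxy.sync(("10.96.0.10", 80), [])      # all pods gone
print(proxy.route(("10.96.0.10", 80)))  # None
```

The worker node never decides which pods belong to a service. It applies whatever the control plane last reported, mirroring the brain-and-hands split from the networking model.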

Software-defined networking in multi-cluster Kubernetes

Software-defined networking in Kubernetes becomes even more complicated when you have to network not one but two or even a hundred clusters. Multi-cluster Kubernetes is a relatively new construct, and it requires operators to route traffic between clusters, usually by installing a service mesh or using a solution that operates as part of the Container Network Interface (CNI), such as Cilium’s Cluster Mesh. Other solutions, like Submariner and Kilo, build a VPN-like tunnel between clusters and route traffic between them.

Whether it’s a service mesh or an inter-cluster VPN, these solutions increase the complexity of the Kubernetes clusters in which they run, and they use computing resources that workloads could otherwise use. They also require that all clusters be directly connected to public IP addresses and receive inbound communication on ports that network policies or firewall rules might otherwise block. They can become a single point of failure (SPOF) because all traffic enters through one cluster before being routed to another.

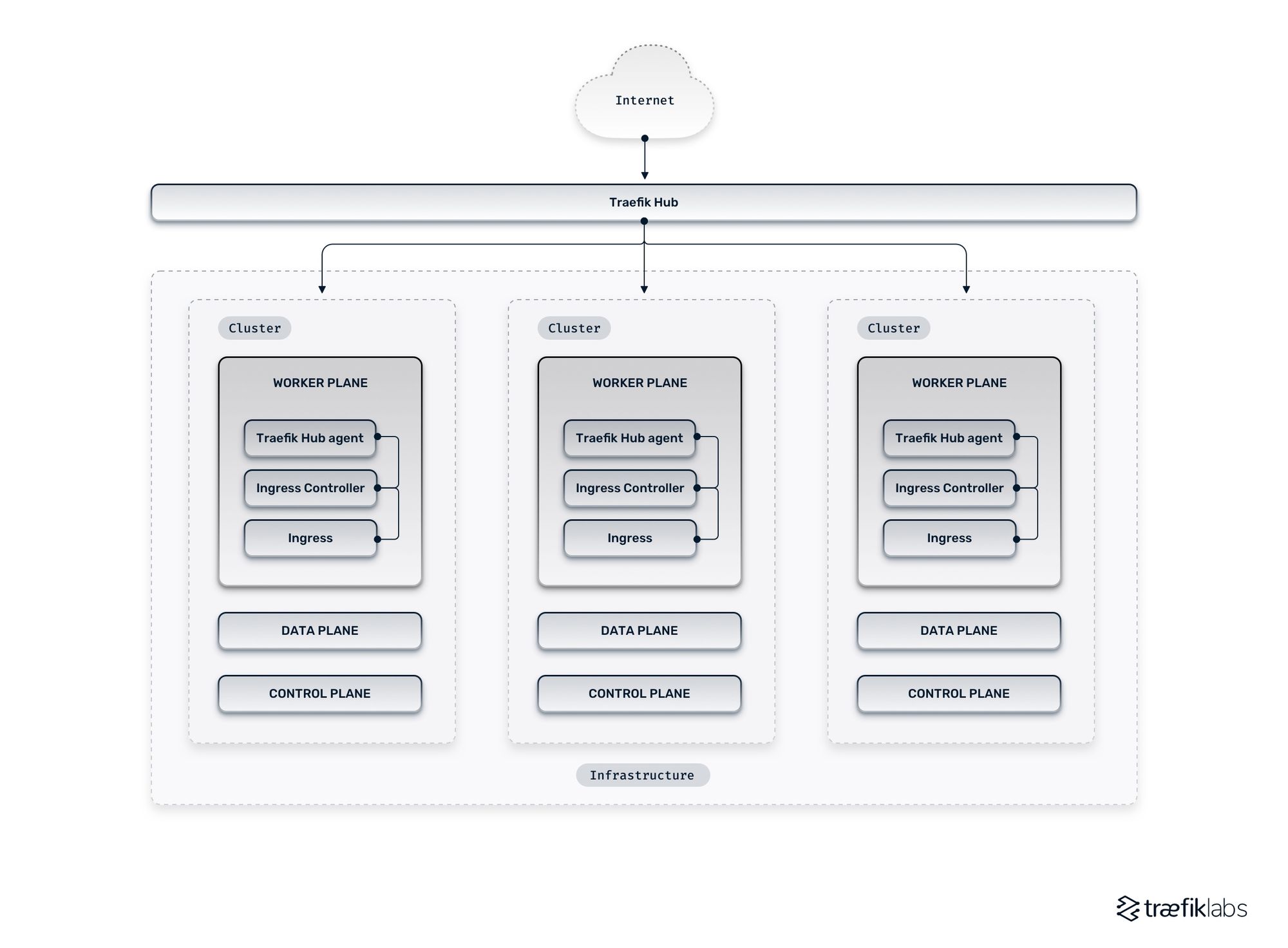

An alternative to a service mesh or inter-cluster VPN is a networking platform like Traefik Hub that works within the control plane. It expands the capabilities of the ingress controller, a software component (like Traefik Proxy, HAProxy, or NGINX) that lives within the control plane, receives requests from outside a cluster, and routes them to applications running inside the cluster. Traefik Hub grants ingress controllers visibility into the resources of other clusters.

Traefik Hub is a cloud native networking platform that helps publish and secure containers at the edge instantly. It provides a gateway to your services running on Kubernetes or other orchestrators. It operates as a platform that sits outside of your clusters and connects with agents attached individually to the ingress controller of each cluster. It makes software-defined networking possible in multi-cluster Kubernetes architectures.