Traefik Hub in a World of GitOps

Automation is the keyword that brought me into IT in the first place. What is more appealing than thinking (hard) once about a task, and then getting a perfect execution of it any time you want, with guaranteed success and no errors or effort? Knowing you will succeed when engaging in a complex process is a spoiler I can live with.

Coding is our way of telling machines to do the work for us. Despite being stupid (at least for now), machines never (ever) fail at their job. If they fail, it’s because we, intelligent humans, have failed at telling them what to do (not my fault, it’s the bug’s fault … moreover, it works on my laptop).

But the problem with coding is that its influence is limited to the virtual world: even if you stare hard at a concrete RJ45 cable, no Jedi force will ever lift it in the air, plug it into your hub, and connect it to your LAN. It does not (unfortunately) happen.

So for a long time, coding was limited to software, and everything hardware still required lots of manual operations, which are, by nature, prone to human error.

Then virtualization, then the cloud, and then the magic.

All of a sudden, with virtualization, containers, and orchestrators, we removed the limitations of the physical world … (for The Matrix fans: “You think that’s air you’re breathing now?”). After all, while no Usain Bolt will ever run through your data center corridors to connect and disconnect cables all day long, having a computer virtually connect cables 10 times per second, 365 days a year, belongs in the realm of possibilities. It became possible to release a hundred times per day, scale infrastructures to different regions in minutes, and spawn a new cluster by pressing a (virtual) button (yes, using a physical keyboard).

The best news when virtualization happened was that software was already a mature world: versioning, testing, CI, and CD were commonplace, and translating these benefits to infrastructure management was just a matter of time. We saw movements like DevOps and GitOps gain popularity and eventually become mainstream.

If it requires a click, it will make you sick

Code is at the center of almost everything today, whether from source code files or API calls. To thrive in this new world of automation, you need tools that play along and can be configured through code or API calls. Your tech stack must adopt a headless, programmatic approach for you to reap all the benefits of GitOps.

So when designing Traefik Hub, not only did we decide to provide a UI to ease and document your first experiences, but we of course designed the product around CRDs and API calls so it can be part of your GitOps processes, whether for installation, configuration, or operation to publish and secure containers at the edge.

So let’s see how you can spawn tunnels and protect ingresses from configuration files (no clicks involved).

Wait, what is Traefik Hub?

Traefik Hub is a cloud native networking platform that offers a gateway into your containers. It helps you publish and secure services in a matter of minutes and with little to no experience in cloud native networking. It was built with simplicity in mind so that developers can collaborate at scale. In a nutshell, it allows you to achieve two main things:

1) Publish any container in Docker or Kubernetes over the internet, from any network, and from any machine (including your laptop). New and existing services can be accessed from anywhere and without complex network configurations.

2) Add a layer of role-based access control to your existing ingresses, whether your ingress controller is Traefik or Nginx. It is an integrated and automated solution.

And please, expect more, way more. Traefik Hub only reached general availability in October, and we’re just starting to build the product. We have many ideas for how things can progress and cannot wait to see what future iterations will bring.

But let’s get back on track and start off with our first use case/example.

Publishing a Service

For this example, I assume you have already created an account and installed your agent (it’s as difficult as a copy/paste and soon will also be GitOps compliant).

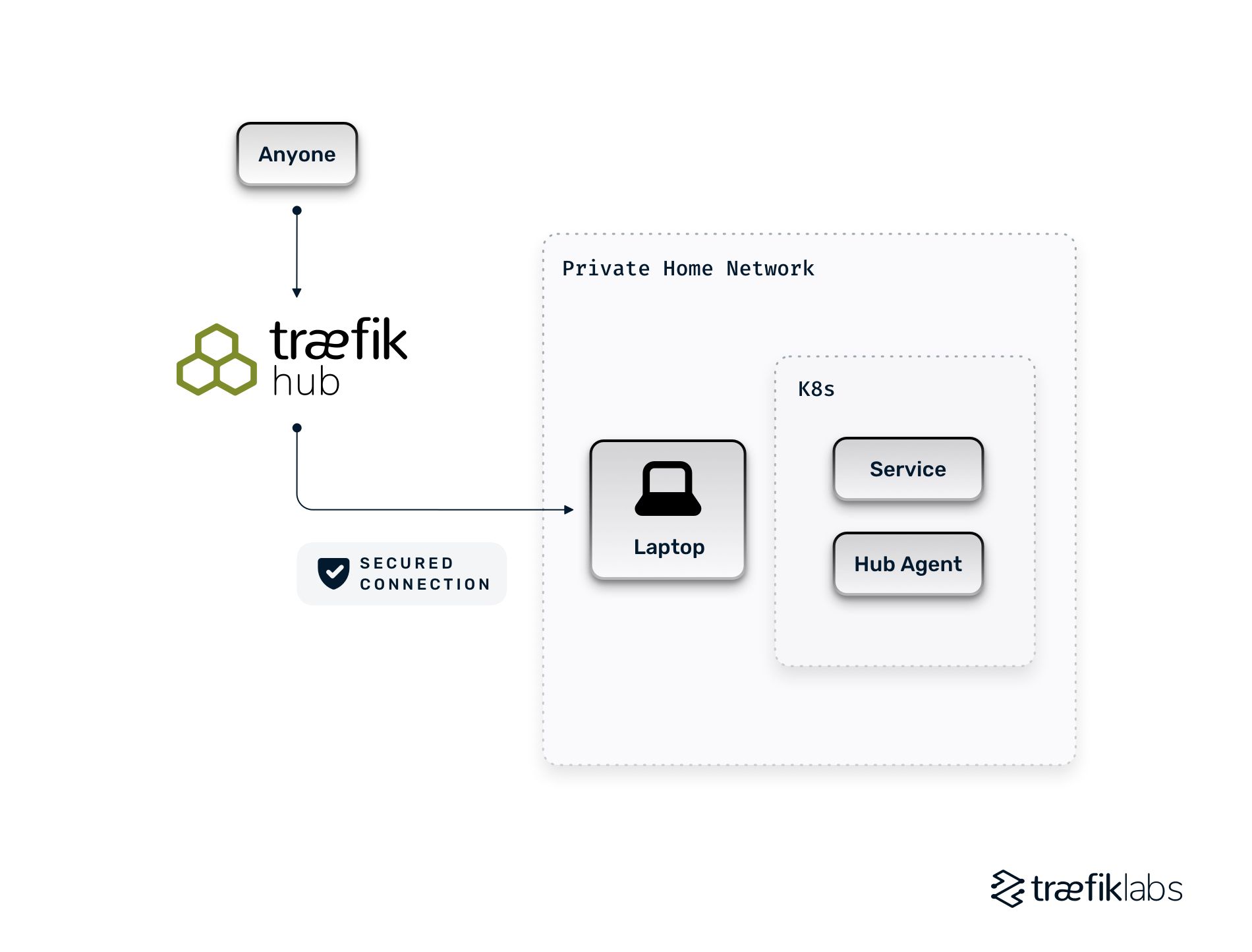

Our scenario is the following: I have a laptop on my private network at home with a K8s cluster running. I want friends to access a newly deployed service on that cluster, but there is currently no way for people to reach anything I’ve deployed there. With Hub, it is a CRD away (or a click away if you insist on using the UI).

In our terminal, we’ll create the following manifest, a custom resource that asks Hub to create an ingress on the edge (on the Hub platform) pointing to the service `my-new-service`.

    apiVersion: hub.traefik.io/v1alpha1
    kind: EdgeIngress
    metadata:
      name: my-first-publication
    spec:
      service:
        name: my-new-service
        port: 80

Then we’ll just apply the manifest, or let our CI/CD do the magic.

    kubectl apply -f basic-edge-ingress.yml

And that’s really it: the service is now accessible to anyone with the URL, including people outside your network.

Your next question will probably be, “but where is my service?” The answer is simple. When you don’t specify a URL for your service, Hub automatically generates a random domain name for you, which will look like `xxx.traefikhub.io`. And if you’re wondering how it can be accessed from outside your network, it’s because the Hub agent creates a tunnel between the platform and itself.

But what if I want my service to be accessible through one of my domains? The good news is that Traefik Hub also supports custom domains, but to keep this introduction short, I’ll let you dive into the documentation to see how.

Adding Access Control to an Ingress

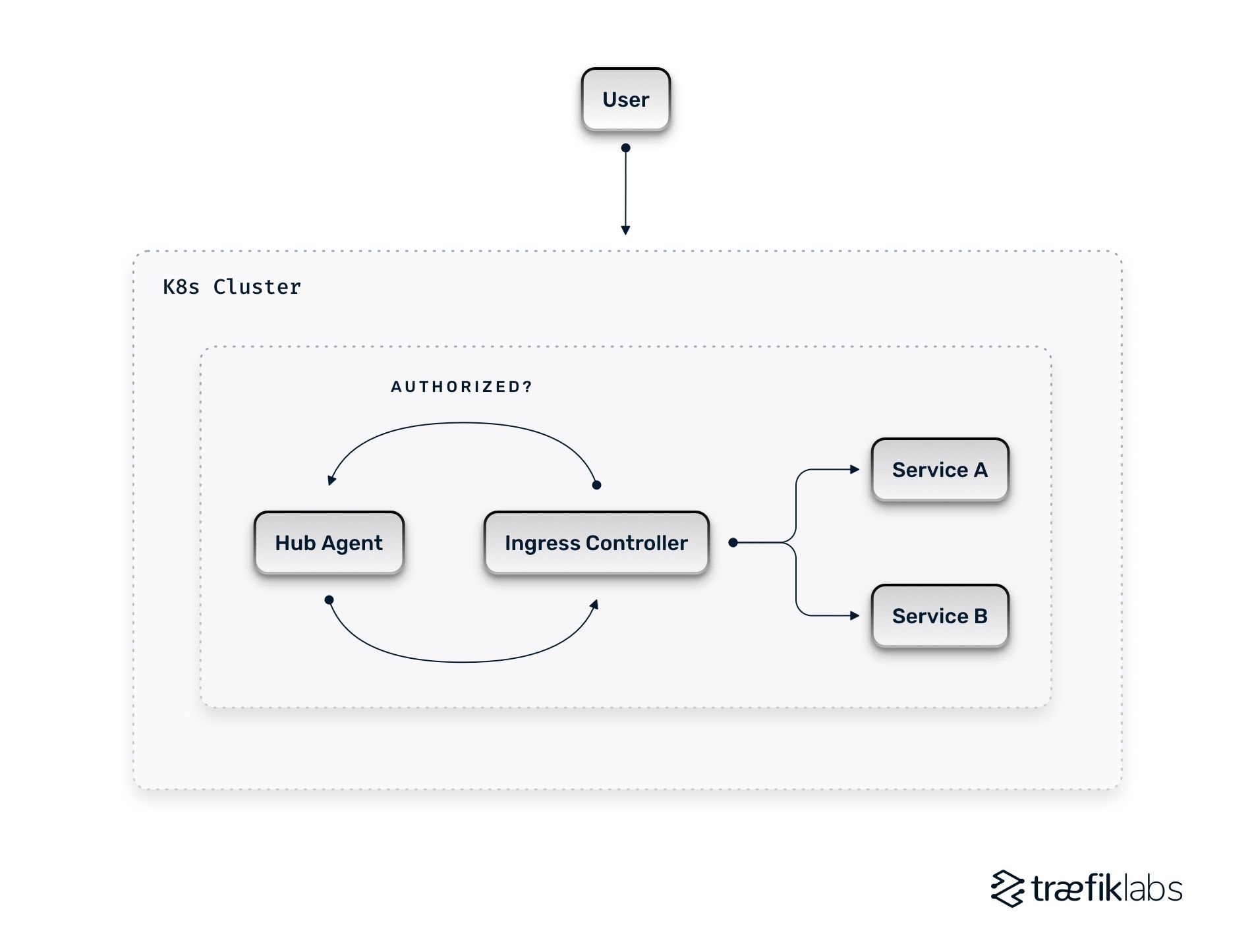

For this second example, let’s suppose you are running an existing cluster that is already configured with an ingress controller (Traefik Proxy or Nginx) and several services. Like before, I assume you have already created an account on Hub and installed the Hub agent.

In our scenario, a K8s cluster at work is accessible from the cloud, and I want to restrict access to some services so that only partners can consume them.

Declaring an access control policy

Traefik Hub uses Access Control Policies to define rules that allow or deny access to resources. For now, you can use BasicAuth, OIDC (with a nice helper to use Google Accounts for OIDC), or JWT.

Here, to keep things simple, let’s define a policy requiring a login/password through BasicAuth.

First, we need to hash the password and create our user “username” with the password “password” (not secure, I agree, please do better when creating your users).

    htpasswd -Bbn username password
    username:$2y$05$y8t8jU7CeZlKimCrNGfzJu3sUygiONONvksETRyfMVbQ.VVCbQMVG

Then, let’s declare the Access Control Policy that we’ll call `my-basic-auth`.

    apiVersion: hub.traefik.io/v1alpha1
    kind: AccessControlPolicy
    metadata:
      name: my-basic-auth
    spec:
      basicAuth:
        users:
          # Credentials: username password
          - username:$2y$05$y8t8jU7CeZlKimCrNGfzJu3sUygiONONvksETRyfMVbQ.VVCbQMVG

Then we apply the manifest, or let our CI/CD do the magic.

    kubectl apply -f my-basic-auth.yml
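If the policy is produced by a pipeline rather than typed by hand, the hash can be injected into the manifest instead of being copied and pasted. Here is a minimal sketch of that idea, not an official workflow: `render_acp` is a hypothetical helper (it is not part of Traefik Hub or kubectl) that takes a policy name and a `user:hash` entry, such as the line printed by `htpasswd -Bbn`, and prints the same manifest as above.

```shell
#!/bin/sh
# Sketch only: render an AccessControlPolicy manifest from a "user:hash" entry.
# render_acp is a hypothetical helper name chosen for this example.
render_acp() {
  policy_name=$1   # for example: my-basic-auth
  user_entry=$2    # for example: the "username:<bcrypt hash>" line from htpasswd

  # Refuse anything that does not look like "user:hash".
  case $user_entry in
    ?*:?*) ;;
    *) echo "expected an entry of the form user:hash" >&2; return 1 ;;
  esac

  # The variables are expanded once; the "$" characters inside the hash
  # are part of the value and are not expanded again.
  cat <<EOF
apiVersion: hub.traefik.io/v1alpha1
kind: AccessControlPolicy
metadata:
  name: ${policy_name}
spec:
  basicAuth:
    users:
      - ${user_entry}
EOF
}
```

Assuming `htpasswd` is installed, something like `render_acp my-basic-auth "$(htpasswd -Bbn username password)" > my-basic-auth.yml` would produce the file applied above. In a real pipeline, the password would come from a secret store rather than the command line.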

Protecting the Ingress

Now that we have an Access Control Policy, we can use it everywhere we want to protect any existing (or new) ingress.

How? By adding an annotation to the ingress itself, pointing to the ACP.

    metadata:
      annotations:
        hub.traefik.io/access-control-policy: my-basic-auth

This is it; once the annotation is added to the Ingress and applied, Hub enforces the policy before any request reaches the underlying service.
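To show where the annotation sits in a complete manifest, here is a small sketch. The file name `protected-ingress.yml`, the Ingress name `my-ingress`, and the helper `has_acp_annotation` are all hypothetical. The Ingress fields are the standard `networking.k8s.io/v1` ones, and the backend reuses `my-new-service` from the first example as an assumption. Your own Ingress will likely also need a host and an ingress class. The helper is a naive text check that a pipeline could run before applying, not a real YAML parser.

```shell
#!/bin/sh
# Sketch only: a sample Ingress carrying the Hub annotation.
# All resource and file names here are illustrative assumptions.
cat > protected-ingress.yml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: my-ingress
  annotations:
    hub.traefik.io/access-control-policy: my-basic-auth
spec:
  rules:
    - http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: my-new-service
                port:
                  number: 80
EOF

# Hypothetical helper: succeeds if the file contains an indented
# access-control-policy annotation with a non-empty value.
# This is plain text matching, so it can be fooled by unusual formatting.
has_acp_annotation() {
  grep -Eq '^[[:space:]]+hub\.traefik\.io/access-control-policy:[[:space:]]*[^[:space:]]+' "$1"
}

has_acp_annotation protected-ingress.yml && echo "protected-ingress.yml references a policy"
```

From there, `kubectl apply -f protected-ingress.yml` (or your CI/CD) takes over, exactly as in the previous steps.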

What’s Next?

At Traefik Labs, we strive to develop products that are appealing to use and that work so well that sometimes you forget they even exist. In a GitOps environment, it’s even more true because you barely get to see the UI. After all, the one clicking is your CI/CD … not you.

Interested in learning more?

Watch our recent webinar with Weaveworks to learn how to secure your application deployments with tunnels, OIDC, RBAC, and progressive delivery using Weave GitOps and Traefik Hub.